The 2026 AI Compute Crunch: Why Exploding Token Consumption Is Forcing AWS, Google Cloud, and Others to Raise Prices


In early 2026, the AI industry hit a turning point that many predicted but few prepared for: compute supply can no longer keep up with demand.

Token consumption, the most direct measure of how heavily AI models are actually being used, has exploded. This surge is now directly driving up the cost of renting compute power. In January 2026, AWS quietly raised prices on its EC2 Capacity Blocks for Machine Learning by ~15%. Google Cloud followed by announcing increases of up to 100% on key networking services, effective May 1, 2026. Chinese cloud providers are now openly evaluating similar hikes.

If you're running inference at scale, training models, or simply renting GPUs for AI workloads, your cloud bill is about to feel the pain. Here's the full story — plus the emerging solutions that smart builders are already switching to.

The Token Consumption Explosion: From Thousands to Millions Per Day

Just three years ago, a heavy AI user might burn through 5,000–10,000 tokens per day. Today, power users with agentic workflows routinely consume millions of tokens daily, an increase of at least 100x.

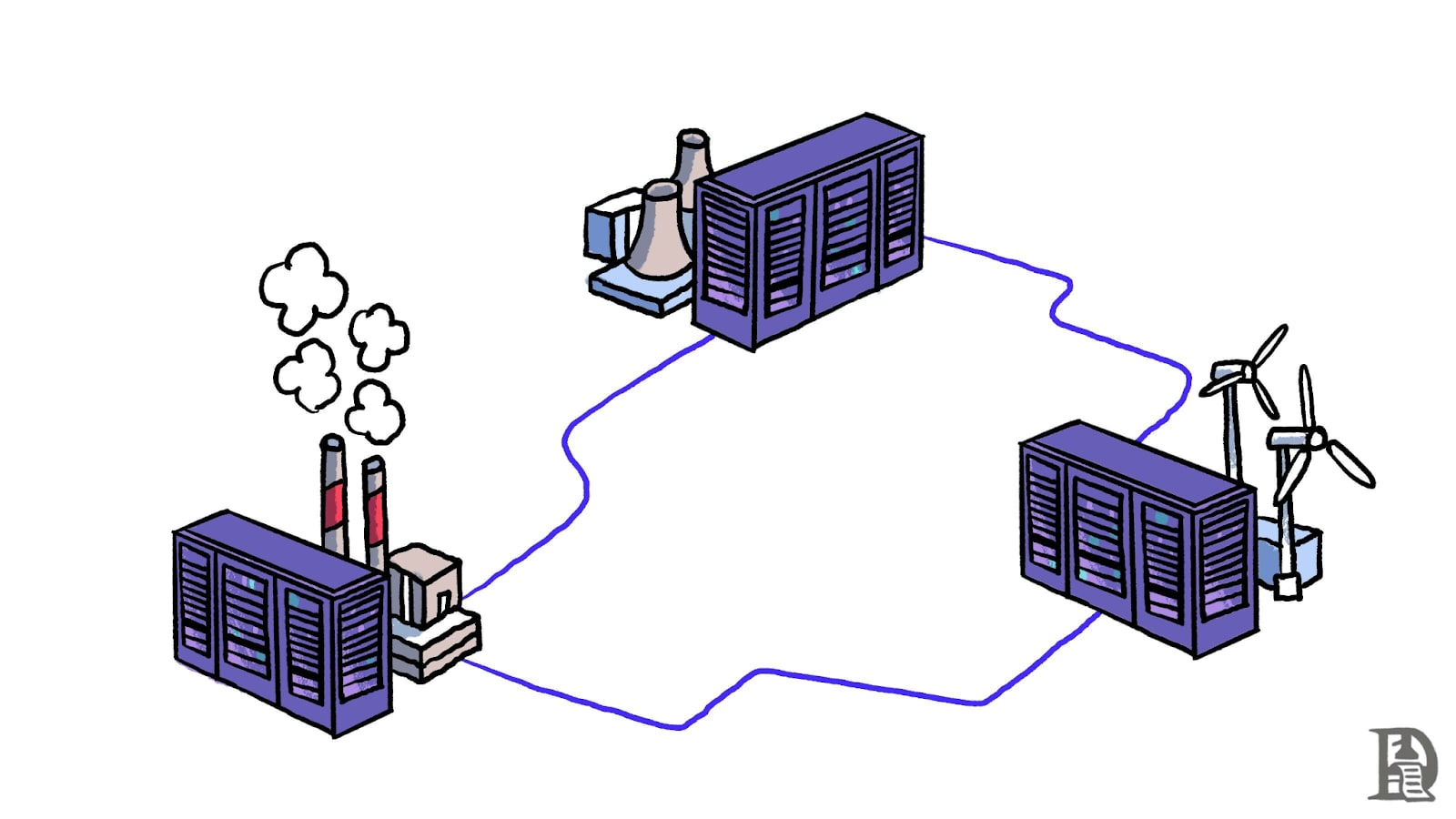

The drivers are clear: smarter models, autonomous agents, and inference now accounting for roughly two-thirds of all AI compute demand. Global active LLM users have reached ~1 billion. Every extra token burns real GPU cycles, memory, and power. The result? A classic supply-demand crunch in the AI compute rental market.

AWS Raises EC2 Capacity Blocks for ML by ~15% (January 2026)

On or around January 4–5, 2026, AWS hiked:

- p5e.48xlarge: $34.61 → $39.80 per hour (~15%)

- Similar jumps on p5en instances
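The p5e figure above can be sanity-checked with a few lines of arithmetic. This is a minimal sketch: the two hourly rates come from the list above, while the 7-day block length is an assumption chosen only for illustration.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


def added_cost(old_rate: float, new_rate: float, hours: float) -> float:
    """Return the extra spend over a reservation of the given length."""
    return (new_rate - old_rate) * hours


# Hourly rates quoted above for p5e.48xlarge Capacity Blocks.
OLD_RATE = 34.61
NEW_RATE = 39.80

# Assumption: a 7-day reservation, used here purely as an example.
BLOCK_HOURS = 7 * 24

pct = percent_increase(OLD_RATE, NEW_RATE)
extra = added_cost(OLD_RATE, NEW_RATE, BLOCK_HOURS)

print(f"Increase: {pct:.1f}%")
print(f"Extra cost for a {BLOCK_HOURS}-hour block: ${extra:,.2f}")
```

At these rates the increase works out to roughly 15%, or about $5.19 more per instance-hour.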

Google Cloud’s May 1, 2026 increases on CDN Interconnect and peering services (up to 100% in some regions) add further pain for data-heavy AI workloads.

Why Now? The Perfect Storm

Global HBM and DRAM shortages, power constraints (AI data centers projected to consume >500 TWh in 2026), and capex that simply can’t scale fast enough have created the crunch. Spot GPU prices briefly softened in late 2025, but reserved, guaranteed capacity is tightening again.

Emerging Alternatives: AICC’s Unified API + Decentralized Compute Market

While hyperscalers raise prices, one platform is quietly becoming the go-to escape hatch for cost-conscious teams: AICC (AI.cc).

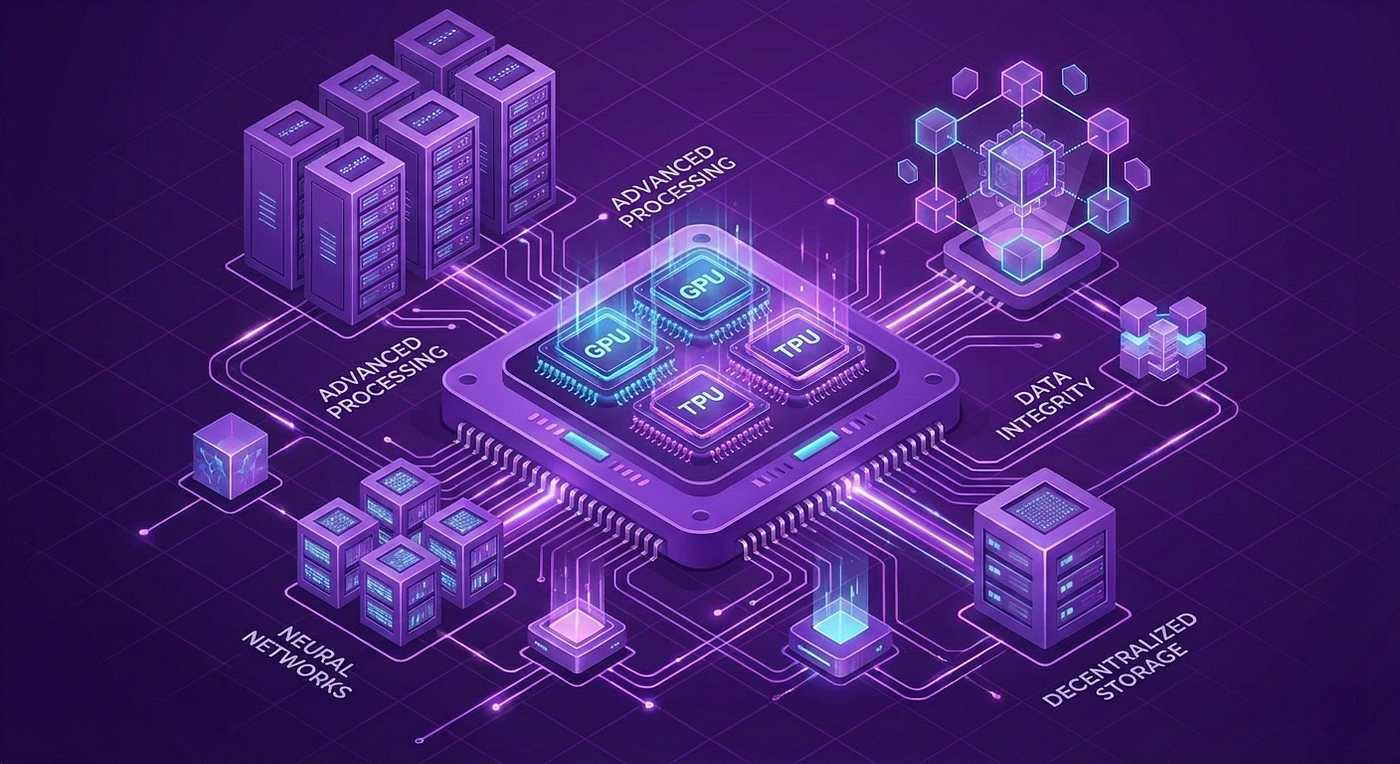

AICC has evolved from a simple domain into a full-stack AI ecosystem that directly addresses the exact pain points of the 2026 compute crunch:

1. One API — 300+ Models, 20–80% Lower Cost

Change your base URL to https://api.ai.cc and keep the exact same OpenAI-compatible format. Instantly access 300+ frontier models (GPT-5.2, Claude 4.5 Opus, Gemini 3, DeepSeek, ByteDance, Meta, and dozens more).

Because AICC aggregates demand across a massive global user base and runs on high-performance serverless architecture, it delivers 20–80% savings versus calling the original providers directly.
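As a rough illustration of the base-URL swap described above, the sketch below builds an OpenAI-style chat request with only the Python standard library. Several details are assumptions, not confirmed AICC documentation: the `/v1/chat/completions` path (borrowed from OpenAI's layout), the `LLM_BASE_URL` and `LLM_API_KEY` environment variable names, and the placeholder model name. Check the provider's own docs before relying on any of them.

```python
import json
import os
import urllib.request

# Assumption: both values are read from hypothetical environment variables
# so that switching providers is a configuration change, not a code change.
BASE_URL = os.environ.get("LLM_BASE_URL", "https://api.ai.cc")
API_KEY = os.environ.get("LLM_API_KEY", "YOUR_API_KEY")


def build_chat_request(prompt, model, base_url=BASE_URL, api_key=API_KEY):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        # Assumption: the endpoint path follows OpenAI's convention.
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# "example-model" is a placeholder, not a real model identifier.
request = build_chat_request("Hello", model="example-model")
print(request.full_url)

# Sending is left commented out so the sketch runs offline:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

Keeping the base URL in configuration means the same request-building code can target either the original provider or an aggregator, which makes side-by-side cost and latency comparisons straightforward.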

2. AICCTOKEN — Decentralized Compute (DePIN) That Actually Works

To solve the root cause — sky-high, centralized GPU costs controlled by AWS/Google — AICC launched the AICCTOKEN project.

- On-demand rental without expensive long-term contracts

- Significantly lower costs than hyperscaler reserved instances

- Anti-censorship & high availability — no single point of failure

In a market where token consumption is exploding and centralized providers are raising prices, AICC’s combination of unified cheap inference + decentralized GPU marketplace is becoming the strategic hedge every serious AI builder needs.

What This Means for AI Builders and Enterprises in 2026

Your cloud bills are going up 10–25% or more unless you act. But teams already migrating portions of their workloads to AICC are reporting immediate relief: lower OpEx through aggregation savings, guaranteed capacity via DePIN, and future-proof architecture.

How to Fight Back: Practical Cost Optimization Strategies

- Cut token usage with prompt caching, smaller models for routing, and hard token budgets.

- Keep critical production on hyperscalers, but route 30–70% of inference through AICC’s One API for instant 20–80% savings.

- Mix On-Demand + Spot + Reserved + AICC DePIN, and monitor spend with cross-platform tools.

- Negotiate enterprise deals early and evaluate AICC’s 7.3T-token high-quality corpus if you’re training your own models.
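Two of the strategies above, hard token budgets and partial traffic routing, can be sketched in a few lines. This is a minimal illustration, not a production design: the class and function names and the "primary" and "secondary" backend labels are hypothetical, and the savings estimate assumes per-request cost is otherwise identical across backends.

```python
import hashlib
from dataclasses import dataclass


def blended_savings(routed_share: float, discount: float) -> float:
    """Overall savings when only part of the traffic gets the discount."""
    return routed_share * discount


@dataclass
class TokenBudget:
    """Hard cap on tokens spent in one accounting period."""
    limit: int
    used: int = 0

    def try_spend(self, tokens: int) -> bool:
        """Record the spend and return True only if it fits the budget."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used + tokens > self.limit:
            return False
        self.used += tokens
        return True


def pick_backend(request_id: str, routed_share: float) -> str:
    """Deterministically send about routed_share of requests to 'secondary'."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # value in [0, 1)
    return "secondary" if bucket < routed_share else "primary"


# Range implied by the figures above: 30-70% routed at a 20-80% discount.
low = blended_savings(0.30, 0.20)
high = blended_savings(0.70, 0.80)
print(f"Blended savings: {low:.0%} to {high:.0%}")

budget = TokenBudget(limit=1_000_000)
print(budget.try_spend(400_000), budget.try_spend(700_000), budget.used)
```

Note that the blended figure is lower than the headline discount: routing 30% of traffic at a 20% discount trims the total bill by only about 6%, while routing 70% at an 80% discount would trim it by about 56%. Hashing the request ID keeps the split stable, so a retried request lands on the same backend.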

The Road Ahead

The compute crunch is real and will intensify through 2027. The era of "cloud prices only go down" is over for AI workloads. Token consumption is the new oil.

But the winners won’t be the ones who simply pay more to AWS and Google — they’ll be the ones who intelligently combine hyperscaler reliability with platforms like AICC.

Bottom line: Treat compute cost as a strategic variable. Start routing traffic to AICC’s One API this week.

Stay ahead of the crunch. Optimize early — and diversify smart.
