💸 Overpaying for Tokens

High-volume tasks like customer support or content generation burn through token budgets at alarming speed when using premium-tier models without cost controls.

Discover how small and medium-sized enterprises can integrate powerful AI tools — chatbots, content automation, data analysis — while cutting API costs by 20–80% using smarter, aggregated alternatives to OpenAI and Anthropic Claude.

As a small or medium-sized enterprise navigating the AI boom in 2026, integrating artificial intelligence can feel like a double-edged sword. Tools like chatbots, content automation, and data analysis promise efficiency gains, yet skyrocketing costs from premium providers like OpenAI and Anthropic can quickly erode your margins. With global AI infrastructure investments exceeding $650 billion, many SMEs are urgently seeking affordable AI alternatives to stay competitive without overspending.

This guide is designed to help you sidestep those high-price traps — exploring practical strategies backed by real-world data, and introducing platforms like AICC (AI.cc), a unified, low-cost API gateway to 300+ models that makes high-performance AI accessible without the premium markup.

In 2026, the AI landscape is dominated by a few heavyweights, but their pricing models rarely align with SME realities. OpenAI's GPT series and Anthropic's Claude charge premium rates, with advanced variants reaching $25 per million output tokens, so sustained usage can push monthly bills into the thousands of dollars. Add in potential access restrictions, and single-provider reliance becomes a genuine risk.

Switching AI models means rewriting integration code and managing multiple API keys — wasting developer time and creating fragile, inflexible infrastructure.

The global AI data center surge means hidden power and compute costs trickle down to end users, inflating bills beyond the published per-token rates.
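One common way to reduce the rewrite cost of switching models is to hide each provider behind a small shared interface, so application code calls a single client and the model becomes a configuration choice. The sketch below illustrates that idea only. The `Completion` type, the `ModelClient` class, and both backends are hypothetical stand-ins, not any vendor's SDK; a real backend would wrap the provider call of your choice.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Completion:
    text: str
    input_tokens: int
    output_tokens: int


# A backend is any callable that takes a prompt and returns a Completion.
Backend = Callable[[str], Completion]


def echo_backend(prompt: str) -> Completion:
    """Hypothetical stand-in for a real provider call: upper-cases the prompt."""
    n = len(prompt.split())
    return Completion(text=prompt.upper(), input_tokens=n, output_tokens=n)


def short_backend(prompt: str) -> Completion:
    """Second hypothetical backend: returns only the first word of the prompt."""
    words = prompt.split()
    first = words[0] if words else ""
    return Completion(text=first, input_tokens=len(words), output_tokens=len(first.split()))


class ModelClient:
    """Routes calls to a named backend so application code never touches a vendor SDK."""

    def __init__(self, backends: Dict[str, Backend], default: str) -> None:
        if default not in backends:
            raise KeyError(f"unknown default backend: {default}")
        self._backends = backends
        self._default = default

    def complete(self, prompt: str, model: Optional[str] = None) -> Completion:
        # Fall back to the configured default when no model is named.
        name = model or self._default
        return self._backends[name](prompt)


client = ModelClient({"echo": echo_backend, "short": short_backend}, default="echo")
```

With this shape, moving a workload to a different model means registering another backend and changing the `default` argument, while every `client.complete(...)` call site stays the same.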

Aggregated platforms address these issues by pooling resources and negotiating bulk deals. AICC's "One API" philosophy provides seamless access to 300+ models (including GPT-5.2, Claude 4.5 Opus, Google Gemini 3, and more) at costs 20–80% lower than going direct, while eliminating single-supplier dependency.

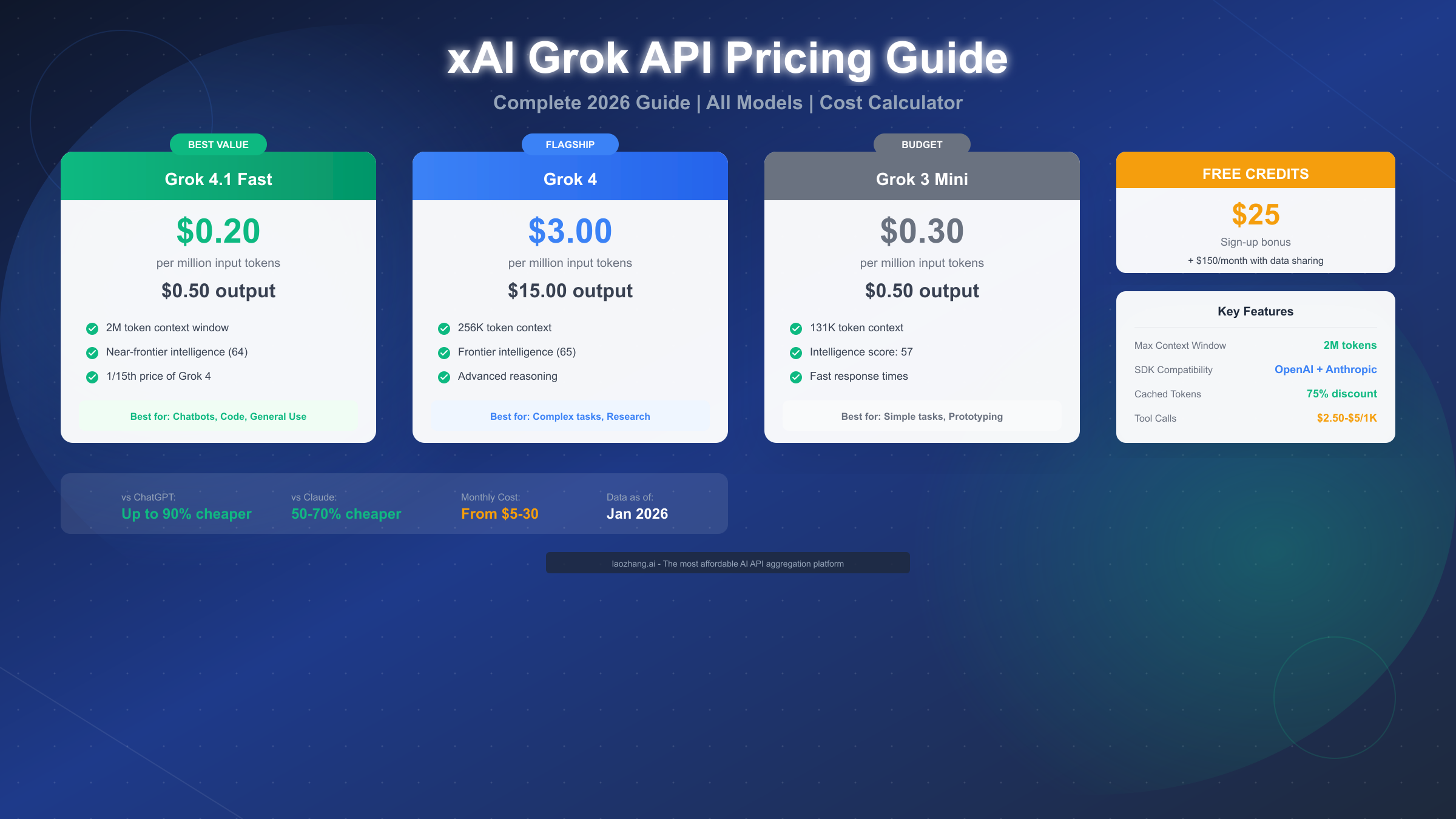

Here's a detailed comparison based on 2026 pricing data — focusing on per-million-token rates, context windows, and SME-friendly features. Note how affordable alternatives stack up favorably against the incumbents.

| Provider / Model | Input (USD per 1M tokens) | Output (USD per 1M tokens) | Context Window (tokens) | Best For SMEs | Cost Savings Potential |
|---|---|---|---|---|---|
| OpenAI GPT-5.2 | $2.50 – $5.00 | $10.00 – $15.00 | 1M+ | General reasoning, multimodal | Baseline; good but expensive for scaling. |
| Anthropic Claude 4.5 Opus | $5.00 | $25.00 | 1M | Advanced coding, ethics-aligned tasks | High-end; up to $100/day for intensive use, a trap for budget SMEs. |
| Google Gemini 3 | $0.50 – $1.00 | $1.50 – $3.00 | Up to 2M | High-throughput apps | 70–80% cheaper than Claude; solid alternative. |
| AICC (Aggregated Gateway) | $0.20 – $1.00 (avg) | $0.50 – $5.00 (avg) | Varies (up to 2M) | Multi-model integration, agents | 20–80% savings vs. premiums; unlimited TPM/RPM for high-frequency needs. |
| DeepSeek (via AICC) | $0.07 – $0.63 | $0.07 – $0.63 | Up to 2M | Open-source, custom training | Near-zero costs post-setup; ideal for SMEs via AICC's unified access. |
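To see what these per-token rates mean for a monthly bill, the arithmetic can be sketched as below. The rates are copied from the table; where the table gives a range, the upper bound is used, which is an assumption. The workload of 50M input and 20M output tokens per month is purely illustrative and should be replaced with your own usage figures.

```python
# USD per 1M tokens as (input_rate, output_rate), taken from the table above.
# Assumption: for ranged entries the upper bound is used.
RATES = {
    "OpenAI GPT-5.2": (5.00, 15.00),
    "Anthropic Claude 4.5 Opus": (5.00, 25.00),
    "Google Gemini 3": (1.00, 3.00),
    "AICC (Aggregated Gateway)": (1.00, 5.00),
    "DeepSeek (via AICC)": (0.63, 0.63),
}

# Hypothetical monthly workload; not a figure from the article.
INPUT_TOKENS = 50_000_000
OUTPUT_TOKENS = 20_000_000


def monthly_cost(input_tokens: int, output_tokens: int,
                 input_rate: float, output_rate: float) -> float:
    """Cost in USD for a token volume at the given per-million-token rates."""
    return (input_tokens / 1_000_000) * input_rate + (output_tokens / 1_000_000) * output_rate


costs = {
    name: monthly_cost(INPUT_TOKENS, OUTPUT_TOKENS, in_rate, out_rate)
    for name, (in_rate, out_rate) in RATES.items()
}

# Print the options from cheapest to most expensive.
for name, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{name}: ${cost:,.2f}/month")
```

Because output tokens are priced several times higher than input tokens on the premium models, the output share of a workload tends to dominate the bill, so it is worth measuring that split before comparing providers.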

AICC's serverless architecture ensures infinite scalability with ultra-low latency, while its bulk procurement model delivers deep discounts. For an SME running daily AI agents, switching could mean reallocating thousands of dollars back into core operations.

Follow this streamlined process tailored for SMEs with limited resources — practical, actionable, and built to avoid the common pitfalls.

Consider "LA Urban Essentials," a mid-sized ecommerce firm in Los Angeles using AI for product descriptions and chat support. Initially tied to OpenAI and Claude, they faced monthly bills of $3,000 as AI costs rose across the industry.

This mirrors broader SME trends: AICC's cost efficiency turns AI from an executive luxury into an everyday operational staple accessible to businesses of all sizes.

Emerging setups — like running lean AI pipelines with models such as Kimi K2.5 through aggregated gateways — demonstrate that production-grade AI workflows are achievable at a fraction of the cost traditionally associated with premium providers. The data is clear: smarter model selection and unified API access are the defining cost levers for SMEs in 2026.
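Model selection as a cost lever can be sketched as a simple rule: for each request, pick the cheapest model that still meets the task's requirements. The example below is a minimal illustration under stated assumptions. Rates and context windows come from the comparison table (upper bounds for ranges, and 1M for the "1M+" entry), while the `tier` values are hypothetical placeholders, not benchmark results; replace them with your own evaluation of each model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Model:
    name: str
    input_rate: float    # USD per 1M input tokens
    output_rate: float   # USD per 1M output tokens
    context_window: int  # maximum tokens per request
    tier: int            # hypothetical capability tier (placeholder), higher is stronger


CATALOG: List[Model] = [
    Model("OpenAI GPT-5.2", 5.00, 15.00, 1_000_000, 3),
    Model("Anthropic Claude 4.5 Opus", 5.00, 25.00, 1_000_000, 3),
    Model("Google Gemini 3", 1.00, 3.00, 2_000_000, 2),
    Model("DeepSeek (via AICC)", 0.63, 0.63, 2_000_000, 1),
]


def estimate_cost(model: Model, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request on the given model."""
    return (input_tokens * model.input_rate + output_tokens * model.output_rate) / 1_000_000


def pick_model(input_tokens: int, output_tokens: int, min_tier: int) -> Model:
    """Return the cheapest catalog model that fits the request and meets min_tier."""
    candidates = [
        m for m in CATALOG
        if m.tier >= min_tier and input_tokens + output_tokens <= m.context_window
    ]
    if not candidates:
        raise ValueError("no model satisfies the requested tier and context size")
    return min(candidates, key=lambda m: estimate_cost(m, input_tokens, output_tokens))
```

For example, `pick_model(2000, 500, min_tier=1)` selects the lowest-priced entry, while raising `min_tier` restricts the choice to the models you have rated as stronger.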