Autonomous AI agents are going mainstream in 2026 — but premium API costs can cripple SME budgets. This guide shows you how to deploy powerful agentic AI with models like GPT 5.2, GLM-5, and MiniMax 2.5 at 20–80% lower cost via AICC's unified gateway.

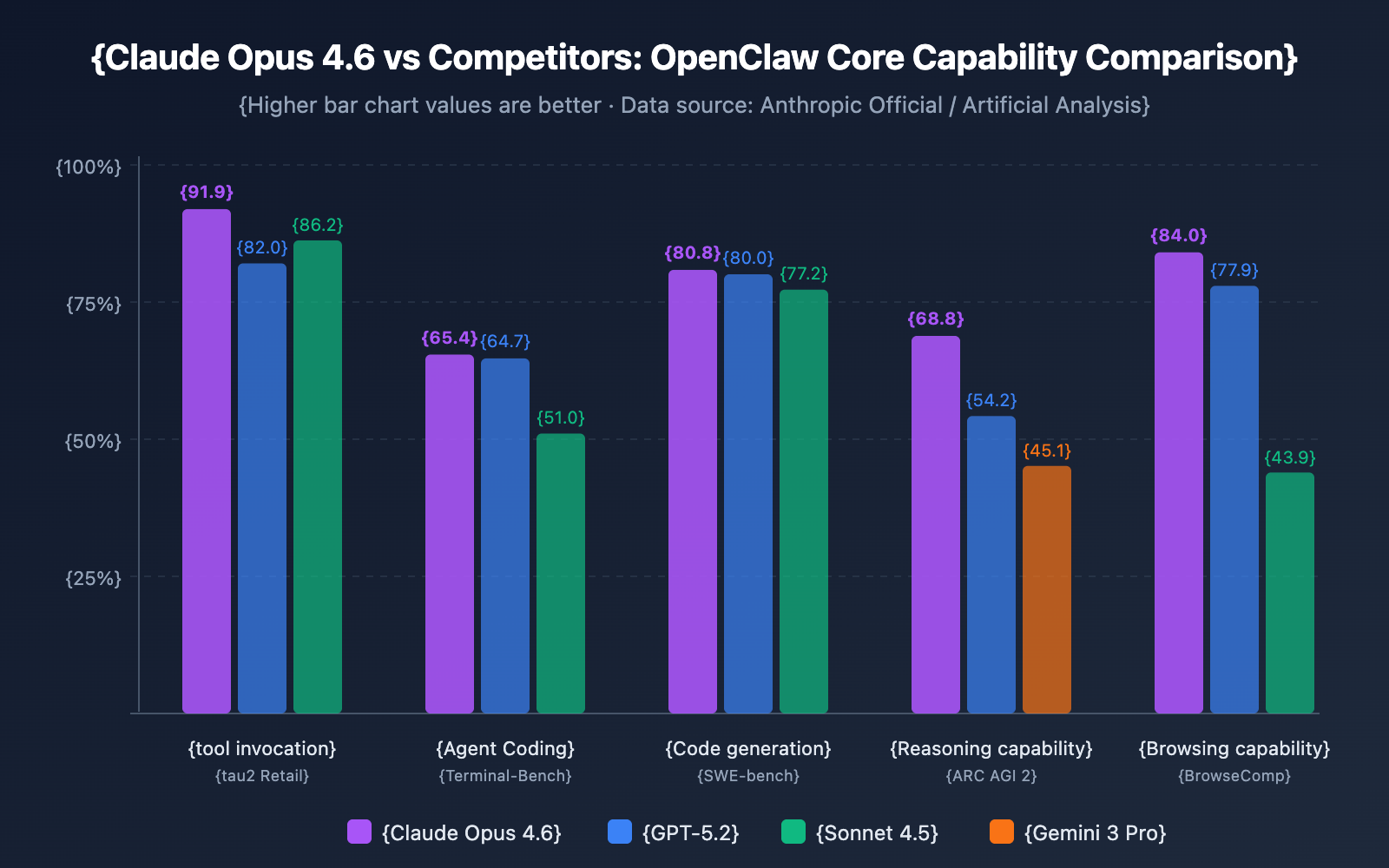

Gartner predicts 80% of enterprises will embed autonomous agents by the end of 2026. For SMEs in high-cost areas like Los Angeles, though, the barrier isn't technology, it's budget. Goldman Sachs forecasts a 6–19% electricity price hike by 2027, which indirectly inflates API fees. Building agents on Claude Opus 4.6 or GPT 5.2 can easily run to thousands of dollars in monthly expenses.

The solution lies in Chinese open-source models like GLM-5 and MiniMax 2.5, hailed by MIT Technology Review as Silicon Valley disruptors, combined with AICC's unified "One API" gateway, which aggregates 300+ models at 20–80% lower cost.

MIT Sloan Management Review marks 2026 as the year AI moves beyond simple Q&A to "agentic" setups handling multi-step processes autonomously — an agent that answers queries, processes orders, updates inventory, and follows up via email without human intervention. Forrester reports early adopters see 25–40% efficiency gains, but only when costs are controlled.

Agent-to-agent communication is exploding per Gartner, enabling complex workflows like supply chain optimization without human intervention across entire enterprise systems.

PixVerse V5.6 (X's #2 trending video generator) allows agents to create personalized product demos by blending text, images, and video without premium markups.

Letta AI's long-term memory features let agents retain context across sessions — dramatically boosting efficiency in customer support and sales workflows.

GLM-5 and MiniMax 2.5 achieve parity with Western counterparts at a fraction of the cost. MIT Technology Review confirms their benchmark performance, which makes them a fit for budget-conscious SMEs.

Hardware like ASUS GX10 supports local inference, reducing cloud dependency and shielding SMEs from surging data center power costs.

Agentic workflows amplify token costs through iterative reasoning and multi-tool calls: every extra step adds both input and output tokens. A simple Claude Opus 4.6 workflow can cost $100/day. The table below compares the models and tools covered in this guide and shows where the traps hide.

| Model / Tool | Input (USD per 1M tokens) | Output (USD per 1M tokens) | Key Features | Hidden Traps | Budget Alternative via AICC |
|---|---|---|---|---|---|
| OpenAI GPT 5.2 | $2.50 | $10.00 | Advanced reasoning, multimodal | High output fees for long chains; rate limits throttle agents | Aggregate with GLM-5 for 50% savings |
| Anthropic Claude Opus 4.6 | $5.00 | $25.00 | Ethical alignment, coding agents | Premium pricing eats budgets; government restrictions add risk | Switch to MiniMax 2.5 equivalent at 80% lower |
| GLM-5 (Chinese Open-Source) | $0.50 | $1.50 | High-performance, scalable | Limited Western integration without gateways | Native low-cost via AICC's One API |
| MiniMax 2.5 | $0.30 | $1.00 | Fast inference, A2A support | Availability in non-China regions | 20–60% bulk discounts through aggregation |
| PixVerse V5.6 (Multimodal) | $3.00 (per video gen) | N/A | Video/text agents | Compute-heavy; power surcharges | Optimized routing saves 30–50% on multimodal calls |
| Letta AI (Memory Tool) | ~$10/month + API | Varies | Long-term agent memory | Add-on costs; over-reliance spikes bills | Integrated with AICC for seamless, low-overhead use |
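
To make the table concrete, here is a rough estimator of what an agentic workload might cost per day. It is only a sketch under stated assumptions: the prices are copied from the table above, but the dictionary keys are illustrative labels (not confirmed API model IDs), the function names are hypothetical, and the workload figures (steps per run, tokens per step, runs per day) are invented for illustration. It also assumes each step re-sends the accumulated context as input, which is common for chat-style agents but not universal.

```python
# USD per 1M tokens as (input, output), copied from the comparison table.
# The keys are illustrative labels, not confirmed API model identifiers.
PRICES = {
    "gpt-5.2": (2.50, 10.00),
    "claude-opus-4.6": (5.00, 25.00),
    "glm-5": (0.50, 1.50),
    "minimax-2.5": (0.30, 1.00),
}


def run_cost(model, steps, prompt_tokens, output_tokens_per_step):
    """Estimate the USD cost of one agent run (hypothetical workload model).

    Assumes every step re-sends the original prompt plus all earlier
    outputs as input, so input tokens grow with each step.
    """
    input_price, output_price = PRICES[model]
    total_input = 0
    total_output = 0
    context = prompt_tokens
    for _ in range(steps):
        total_input += context            # whole context is billed as input
        total_output += output_tokens_per_step
        context += output_tokens_per_step  # the output joins the next context
    return (total_input * input_price + total_output * output_price) / 1_000_000


def daily_cost(model, runs_per_day, **workload):
    """Scale the per-run estimate to a day of traffic."""
    return runs_per_day * run_cost(model, **workload)


if __name__ == "__main__":
    # Invented workload: 8 reasoning steps, 2,000-token prompt, 500 tokens out per step.
    workload = dict(steps=8, prompt_tokens=2_000, output_tokens_per_step=500)
    for model in PRICES:
        cost = daily_cost(model, runs_per_day=500, **workload)
        print(f"{model:>16}: ${cost:.2f}/day")
```

With this invented workload, one run consumes 30,000 input tokens and 4,000 output tokens. At the table's prices that is about $0.25 per run on Claude Opus 4.6, or roughly $125/day at 500 runs, against roughly $10.50/day on GLM-5. Real figures depend on your own step counts and context sizes.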

McKinsey estimates global AI OpEx at $500 billion, with data center power demand growing 40%. Those costs trickle directly down to API pricing. AICC's hybrid local/cloud approach (e.g., with ASUS GX10 for edge computing) can cut monthly spend from $5,000 to $1,000, an 80% reduction.

Deploy a full production agent in under a week for under $500/month. This guide assumes basic Python knowledge; AICC simplifies everything else. The snippet below sends a first request through the gateway using the OpenAI Python SDK.

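    import openai  # the OpenAI SDK is compatible with AICC

    # Point the client at the AICC gateway instead of the default OpenAI endpoint.
    client = openai.OpenAI(base_url="https://api.ai.cc/v1", api_key="your_aicc_key")

    response = client.chat.completions.create(
        model="glm-5",
        messages=[{"role": "user", "content": "Plan a marketing agent workflow"}],
    )

    # Print the model's reply text.
    print(response.choices[0].message.content)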
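
Because the gateway exposes many models behind one client, one pattern worth considering is to try a low-cost model first and fall back to another when a call fails, for example on a rate limit. The following is a minimal sketch, not an AICC feature: `complete_with_fallback` is a hypothetical helper, `"minimax-2.5"` is an assumed model ID (only `"glm-5"` appears in the snippet above), and the broad `except` is a simplification you would likely narrow to specific SDK errors.

```python
def complete_with_fallback(client, messages, models=("glm-5", "minimax-2.5")):
    """Try each model in order and return (model, reply_text) from the first success.

    `client` is any object exposing the OpenAI-style
    `chat.completions.create(model=..., messages=...)` call.
    The default model order is an assumption for illustration.
    """
    last_error = None
    for model in models:
        try:
            response = client.chat.completions.create(model=model, messages=messages)
            return model, response.choices[0].message.content
        except Exception as exc:  # deliberately broad for this sketch
            last_error = exc
    raise RuntimeError(f"all models failed: {list(models)}") from last_error
```

Called as `complete_with_fallback(client, [{"role": "user", "content": "Plan a marketing agent workflow"}])`, it returns the name of the model that answered along with its reply, which also makes it easy to log which model each step was billed against.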