Seedance 2.0 vs Top AI Video Generators 2026: Kling, Runway, Luma, Sora & Veo Compared

As of February 2026, AI video generation has crossed into "cinematic territory." ByteDance’s Seedance 2.0 (launched February 10–12) is the model everyone is talking about — and panicking about. Hollywood studios have already sent cease-and-desist letters over celebrity deepfakes, while creators call it the first model that feels like "directing instead of prompting."

But is Seedance 2.0 actually the best? Or do Kling, Runway, Luma, Sora 2, and Veo 3.1 still win in specific use cases? Here’s the most up-to-date, no-hype comparison based on real benchmarks, creator tests, and platform data in February 2026.

Seedance 2.0 at a Glance (ByteDance)

- Launch: February 10, 2026

- Core strength: Unified multimodal audio-video generation (text + image + video + audio in one pass)

- Max inputs: 9 images + 3 videos (≤15s total) + 3 audio files + text

- Output: 1080p cinematic, native dialogue, lip-sync, sound effects, music, multi-shot storytelling, director-level camera/lighting/performance control

- Access: Limited (China via Jimeng ~$9.60/mo; international platforms like fal.ai, RunDiffusion, Atlas Cloud rolling out now)

- Controversy: Viral Tom Cruise vs Brad Pitt fight scenes triggered MPA, Disney, Netflix, and Paramount cease-and-desist letters
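The input limits listed above can be checked before a job is submitted. The following is a minimal sketch that assumes the limits are exactly as stated here (9 images, 3 videos, 3 audio files) and that "≤15s total" refers to the combined length of the reference videos. `ReferenceBundle` and `check_limits` are hypothetical names used for illustration; they are not part of any Seedance or hosting-platform API.

```python
from dataclasses import dataclass, field

# Limits as listed in this article; treat them as assumptions, not an official spec.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_VIDEO_SECONDS_TOTAL = 15.0  # assumes "<=15s total" means combined video length
MAX_AUDIO_FILES = 3


@dataclass
class ReferenceBundle:
    """Hypothetical container for the inputs of one generation request."""
    prompt: str
    images: list = field(default_factory=list)         # image file paths
    video_seconds: list = field(default_factory=list)  # duration of each reference clip
    audio: list = field(default_factory=list)          # audio file paths


def check_limits(bundle: ReferenceBundle) -> list:
    """Return a list of human-readable limit violations (empty if none)."""
    problems = []
    if len(bundle.images) > MAX_IMAGES:
        problems.append(f"too many images: {len(bundle.images)} > {MAX_IMAGES}")
    if len(bundle.video_seconds) > MAX_VIDEOS:
        problems.append(f"too many videos: {len(bundle.video_seconds)} > {MAX_VIDEOS}")
    total_seconds = sum(bundle.video_seconds)
    if total_seconds > MAX_VIDEO_SECONDS_TOTAL:
        problems.append(f"video too long: {total_seconds}s > {MAX_VIDEO_SECONDS_TOTAL}s")
    if len(bundle.audio) > MAX_AUDIO_FILES:
        problems.append(f"too many audio files: {len(bundle.audio)} > {MAX_AUDIO_FILES}")
    return problems


# Example request with made-up file names.
bundle = ReferenceBundle(
    prompt="Two characters argue on a rooftop at dusk",
    images=["hero.png", "rival.png"],
    video_seconds=[6.0, 8.0],
    audio=["dialogue.wav"],
)
print(check_limits(bundle) or "within the stated limits")
```

Returning a list of problems rather than raising on the first one lets a creator fix every oversized input in a single pass.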

Head-to-Head Comparison Table (February 2026)

| Feature | Seedance 2.0 | Kling 3.0 / 2.6 | Runway Gen-4 / Gen-3 | Luma Ray 2 | Sora 2 | Veo 3.1 |
|---|---|---|---|---|---|---|
| Native Audio + Lip-sync | Excellent (joint gen) | Very Good | Added post-gen | Added post-gen | Good (2026 update) | Excellent |
| Multi-modal Reference | Best (9 img + 3 vid + 3 audio) | Good | Good | Good | Moderate | Good |
| Director Camera Control | Outstanding | Very Good | Excellent (Motion Brush) | Good | Good | Very Good |
| Physics & Motion Realism | Hyper-real | Best-in-class | Strong | Excellent | Strong | Excellent |
| Multi-shot / Storytelling | Native multi-shot | Strong | Strong | Good (extensions) | Best story coherence | Strong |
| Resolution | 1080p cinematic | 1080p | 1080p–4K | Up to 4K | 1080p | 1080p–4K |
| Pricing (approx.) | $9–18/mo | $0.10–0.12/sec | Credit-based ~$15+/mo | $20–50/mo | ChatGPT Plus/Pro | Free tier limited |
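
The pricing row mixes per-second and monthly prices, so a little arithmetic helps put them on a comparable footing. The sketch below uses only two figures from the table: the Kling range ($0.10–0.12/sec) and the Seedance range ($9–18/mo). The function names are illustrative, and the comparison ignores credit caps, resolution tiers, and failed generations, none of which the table specifies.

```python
# Per-second rate range from the table, held in cents so the arithmetic stays exact.
RATE_LOW_CENTS = 10
RATE_HIGH_CENTS = 12


def clip_cost_cents(seconds: int) -> tuple:
    """Return the (lowest, highest) cost in cents for a clip of the given length."""
    return seconds * RATE_LOW_CENTS, seconds * RATE_HIGH_CENTS


def seconds_for_budget(budget_cents: int) -> tuple:
    """Return the (fewest, most) whole seconds of footage a budget buys."""
    return budget_cents // RATE_HIGH_CENTS, budget_cents // RATE_LOW_CENTS


low, high = clip_cost_cents(10)
print(f"10-second clip: ${low / 100:.2f} to ${high / 100:.2f}")

# Compare against the two ends of the monthly subscription range in the table.
for budget_cents in (900, 1800):
    fewest, most = seconds_for_budget(budget_cents)
    print(f"${budget_cents / 100:.0f} buys {fewest} to {most} seconds at per-second rates")
```

Under these assumptions, a 10-second clip costs $1.00 to $1.20, and a $9 budget buys 75 to 90 seconds of per-second footage.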

Seedance 2.0 vs Kling AI: The Chinese Showdown

Both are Chinese powerhouses, but they serve different creators.

Seedance 2.0 wins when you need full multimodal control and native synchronized audio in one generation. Among the models compared here, it is the only one that lets you feed in reference audio, character images, and camera instructions together and get back a complete scene with lip-sync and sound design.

Kling 3.0 wins on raw physics and long-form realism. Many testers still prefer Kling for natural human movement and complex action sequences without artifacts.

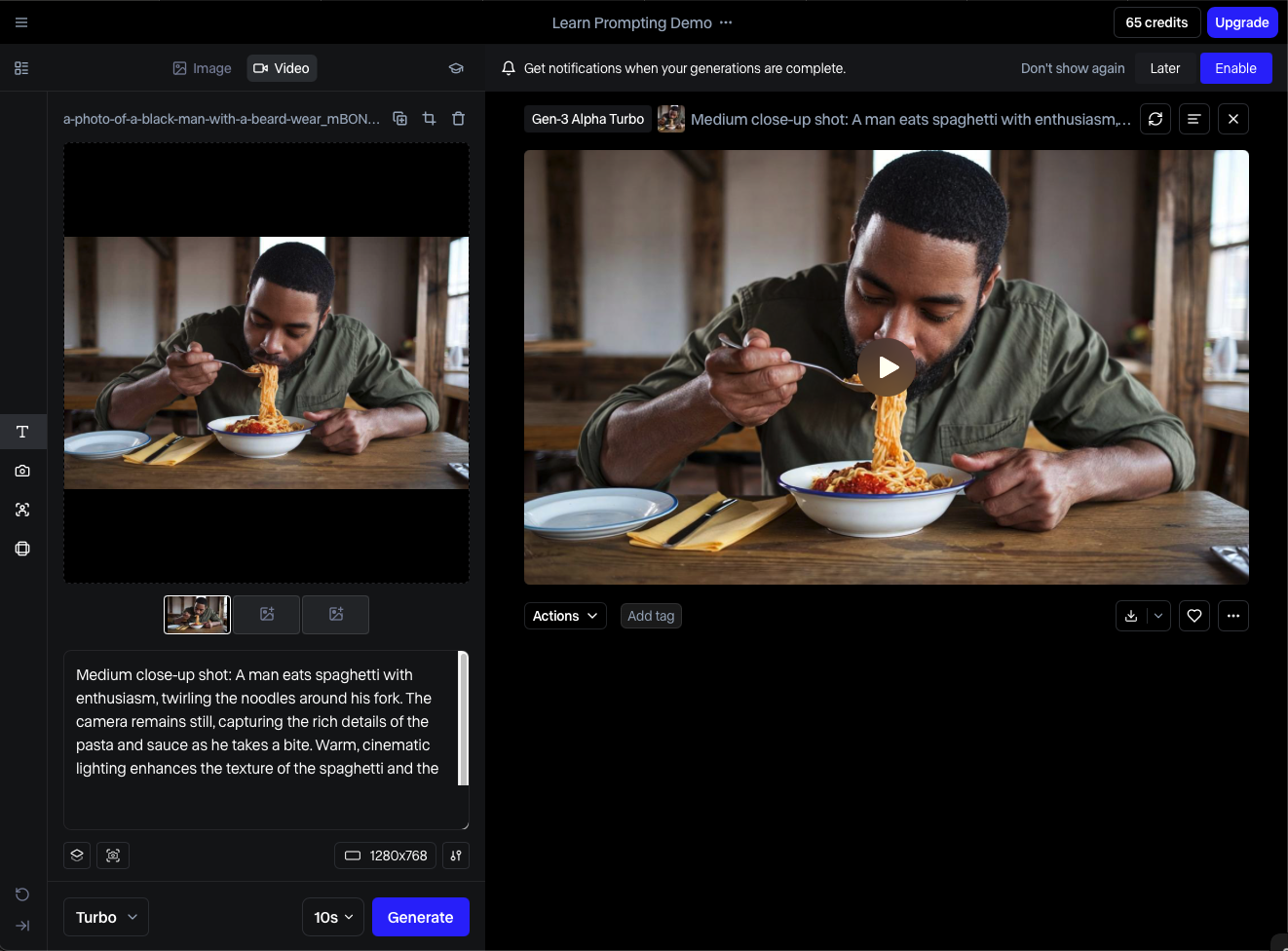

Seedance 2.0 vs Runway Gen-4/Gen-3: Director vs Creative Tool

Runway remains the filmmaker’s favorite for its Motion Brush, precise editing tools, and integration into professional workflows.

Seedance 2.0 feels more "set it and forget it" — you describe the scene like a director and it handles camera moves, lighting, and audio automatically. Runway gives you more granular control if you want to tweak every frame.

Seedance 2.0 vs Luma Ray 2: Physics & Extensions

Luma excels at believable physics and easy video extensions (great for turning a 5-second clip into 30 seconds).

Seedance 2.0 beats it on character consistency, facial expressions, and native audio. If your project needs talking characters or synced sound, Seedance is currently ahead.

Seedance 2.0 vs OpenAI Sora 2 & Google Veo 3.1

Sora 2 still leads in pure narrative coherence — it "understands" story structure better than almost anything else.

Veo 3.1 delivers stunning cinematic quality and is often the most photorealistic in still-frame tests.

However, neither matches Seedance 2.0’s multimodal reference power or native audio-video joint generation yet. Seedance is the first model that truly feels like it was trained to make complete short films, not just clips.

Who Should Use Which Model in 2026?

- Seedance 2.0: For director-level control, native audio, and multimodal references (ads, short films, music videos).

- Kling: For the most realistic human motion and fastest generation.

- Runway: For professional post-production workflows and creative experimentation.

- Luma Ray 2: When you need long extensions and strong physics on a budget.

- Sora 2: For story-heavy narrative videos inside the ChatGPT ecosystem.

- Veo 3.1: When maximum photorealism is the priority (and you can get access).

Final Verdict (February 25, 2026)

Seedance 2.0 is currently the most exciting and disruptive model on the market. Its multimodal architecture and native audio capabilities make it the closest thing we have to "press play on a movie idea."

But it’s not perfect — limited availability, ongoing IP controversy, and the fact that Kling and Runway are more mature in certain workflows mean the winner still depends on your exact needs.

The real winner? Creators who use multiple models in one pipeline: Seedance for the core scene + Runway for fine edits + Kling for action shots.
