Hermes Agent 2026: The Self-Improving Open-Source AI Agent Outpacing OpenClaw

As of April 2026, Hermes Agent from Nous Research is one of the most discussed projects in the AI agent space. With over 32k GitHub stars, a fresh v0.7.0 “resilience release,” and a newly announced partnership with MiniMax AI (powering M2.7 models directly in the agent), it is drawing a growing number of developers away from OpenClaw.

Unlike traditional one-shot agents, Hermes Agent is built around a learning loop. It does not just complete tasks: it remembers them, builds reusable skills from them, and improves with use.

In this guide, we break down what sets Hermes Agent apart, how it compares to OpenClaw, and, most importantly, how to pair it with a unified multi-model AI API platform (like ours) for better performance, cost efficiency, and flexibility.

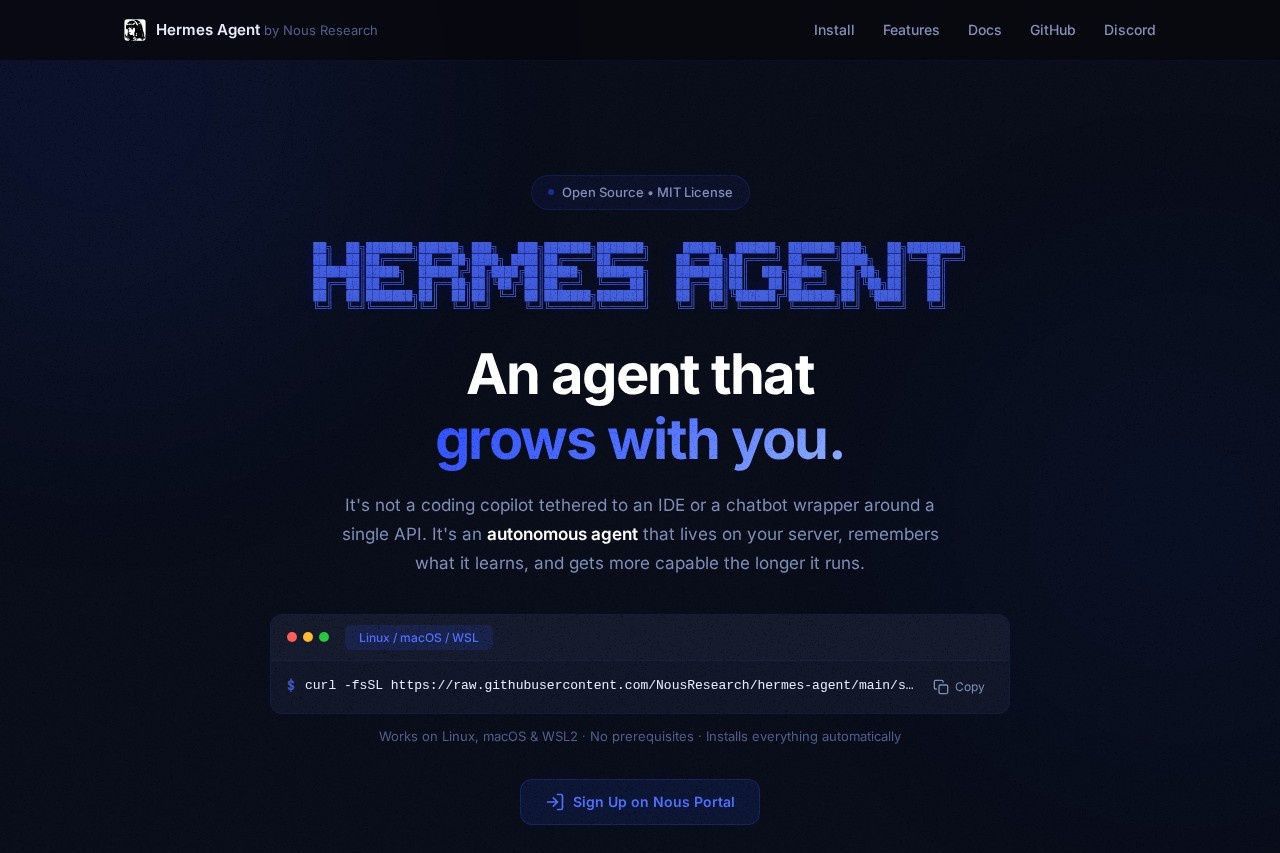

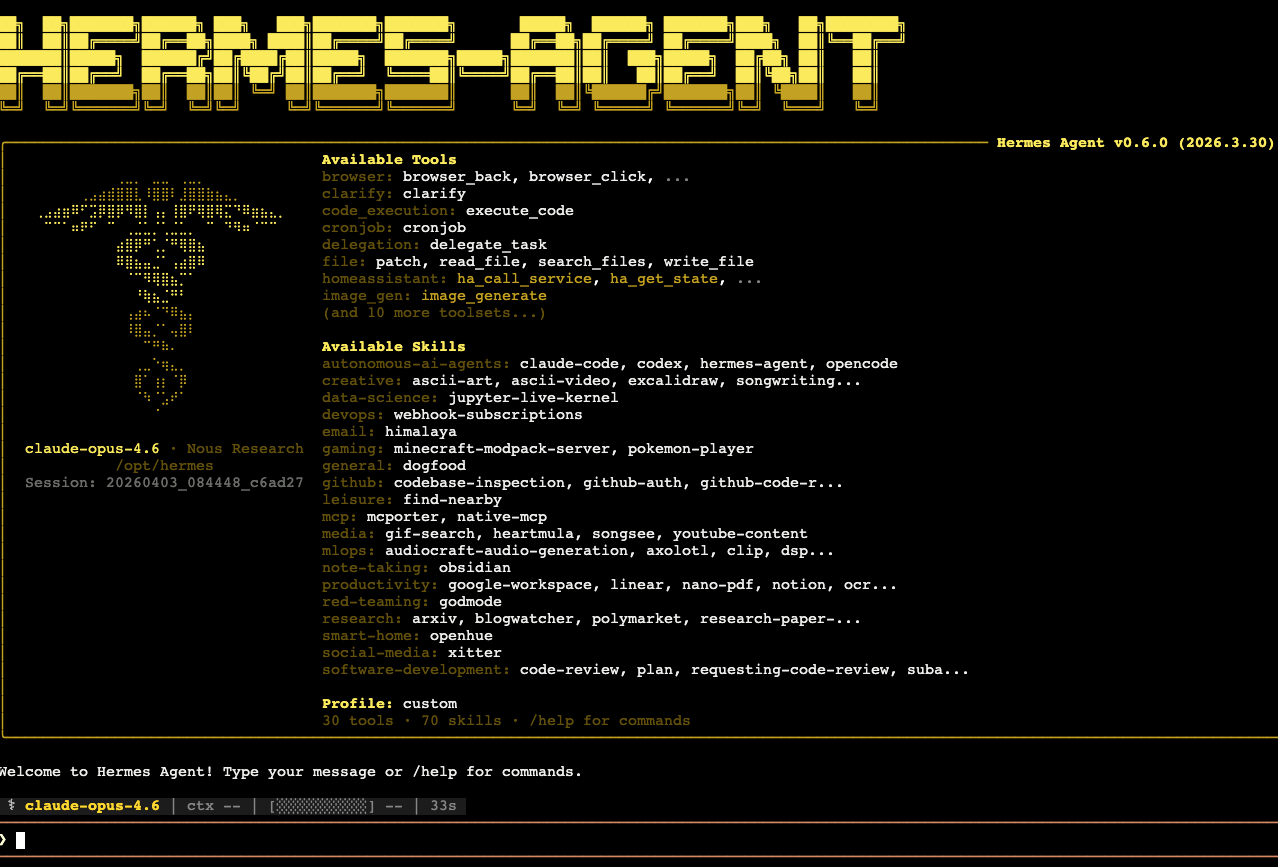

What Is Hermes Agent?

Hermes Agent is a fully open-source (MIT licensed), self-hosted, model-agnostic autonomous AI agent built by Nous Research, the team behind the Hermes model series.

Key differentiator: It lives on your server (or locally) and evolves with you.

- Runs persistently 24/7

- Maintains multi-level memory across sessions

- Automatically creates, stores, and refines its own “skills” after every complex task

- Supports 400+ models via Nous Portal, local backends (Ollama, vLLM, llama.cpp), and any OpenAI-compatible endpoint
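To illustrate what "any OpenAI-compatible endpoint" means in practice, here is a minimal Python sketch that builds (but does not send) a chat completion request. It is not taken from the Hermes Agent codebase. The helper name `build_chat_request`, the model names, the API key, and the `api.example.com` URL are placeholders chosen for illustration; the local URL assumes Ollama's default OpenAI-compatible address.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-compatible /chat/completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical backends: only the base URL, key, and model name differ.
local = build_chat_request("http://localhost:11434/v1", "unused", "example-local-model", "Say hi")
hosted = build_chat_request("https://api.example.com/v1", "sk-placeholder", "example-hosted-model", "Say hi")

print(local.full_url)
print(hosted.full_url)
```

Because the request shape is identical across backends, swapping a local model for a hosted one is a configuration change rather than a code change, which is the practical meaning of "model-agnostic" here.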

It is not another chatbot wrapper or coding copilot. It’s a true personal AI employee that gets better the longer it runs.

Why Hermes Agent Is Exploding Right Now (April 2026)

- v0.7.0 Resilience Release (April 3, 2026): Pluggable memory providers, credential rotation, Camofox anti-detection browser, inline diffs, and massive stability improvements.

- MiniMax Partnership (announced today): MiniMax M2.7 is now one of the most-used models inside Hermes Agent. Nous Research and MiniMax are collaborating to optimize future releases specifically for the agent.

- Mass Migration from OpenClaw: Reddit, X, and YouTube are filled with “I ditched OpenClaw for Hermes” posts. Developers love that Hermes actually learns instead of relying on static human-written skills.

- Self-evolution ecosystem: Separate repo (hermes-agent-self-evolution) uses DSPy + GEPA to automatically optimize skills and prompts.

The Magic Behind Hermes: Built-in Learning Loop + Persistent Memory

This is where Hermes Agent separates itself from every other agent in 2026.

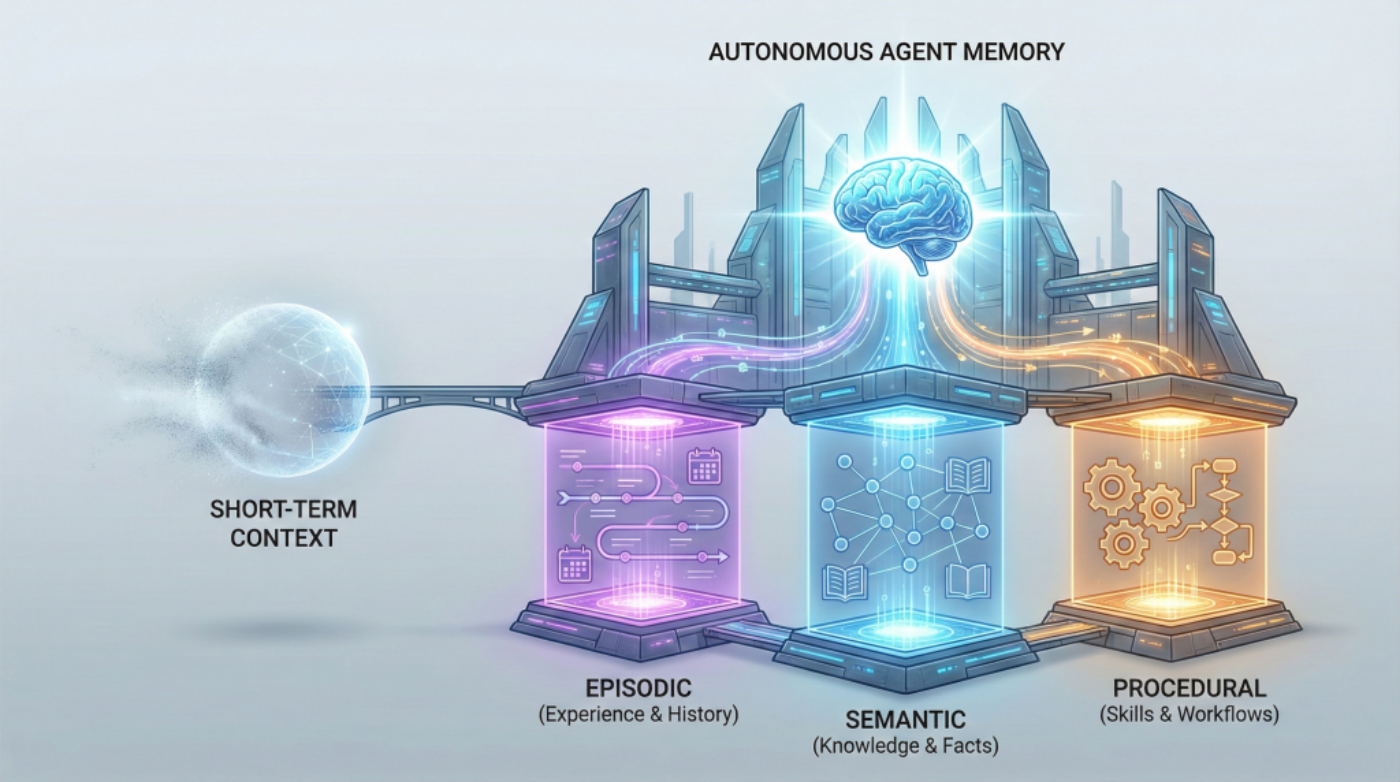

- Observe → Plan → Act → Learn: After completing a task, Hermes automatically:

  - Analyzes what worked and what didn’t

  - Extracts a reusable “skill” (stored as a markdown file with instructions + example code)

  - Refines existing skills over time

- Three Layers of Memory:

  - Short-term context

  - Episodic (past experiences)

  - Procedural (skills & workflows)

- Skill Documents: Instead of bloating prompts with every possible tool, Hermes loads only the relevant skill when needed, which reduces token usage and cost.
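The loop and the skill documents described above can be sketched in a few lines of Python. This is an illustrative toy, not Hermes Agent's actual implementation: the `SkillStore` class, the `run_task` function, the file layout, and the keyword-overlap matching are all assumptions made for the example, and the "Act" step is a stub.

```python
import tempfile
from pathlib import Path
from typing import Optional


class SkillStore:
    """Hypothetical procedural memory: one markdown file per skill."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def save(self, name: str, instructions: str, example: str) -> Path:
        """Write a skill document; saving under an existing name overwrites (refines) it."""
        path = self.root / f"{name}.md"
        path.write_text(f"# {name}\n\n{instructions}\n\nExample:\n\n{example}\n", encoding="utf-8")
        return path

    def find_relevant(self, task: str) -> Optional[Path]:
        """Pick the skill whose file name shares the most words with the task."""
        task_words = set(task.lower().split())
        best, best_score = None, 0
        for path in sorted(self.root.glob("*.md")):
            score = len(task_words & set(path.stem.split("-")))
            if score > best_score:
                best, best_score = path, score
        return best


def run_task(task: str, store: SkillStore) -> str:
    """Observe -> Plan -> Act -> Learn, returning the skill text that was loaded."""
    skill = store.find_relevant(task)                              # Observe: recall a matching skill
    context = skill.read_text(encoding="utf-8") if skill else ""   # Plan: load only that one skill
    result = f"done: {task}"                                       # Act: stub for real tool calls
    name = "-".join(task.lower().split()[:2])                      # Learn: name the skill after the task
    store.save(name, f"Steps that worked for: {task}", result)
    return context


store = SkillStore(Path(tempfile.mkdtemp()))
store.save("write-report", "Outline, draft, then cite sources.", "report.md")

first = run_task("deploy flask app", store)    # no matching skill exists yet
second = run_task("deploy flask api", store)   # reuses the skill learned on the first run

print(repr(first))
print(second.splitlines()[0])
```

On the second run only the matching skill file is read into context, while the unrelated `write-report` skill stays on disk. That selective loading is the mechanism behind the token savings claimed above; a real agent would presumably use a stronger relevance signal than word overlap.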

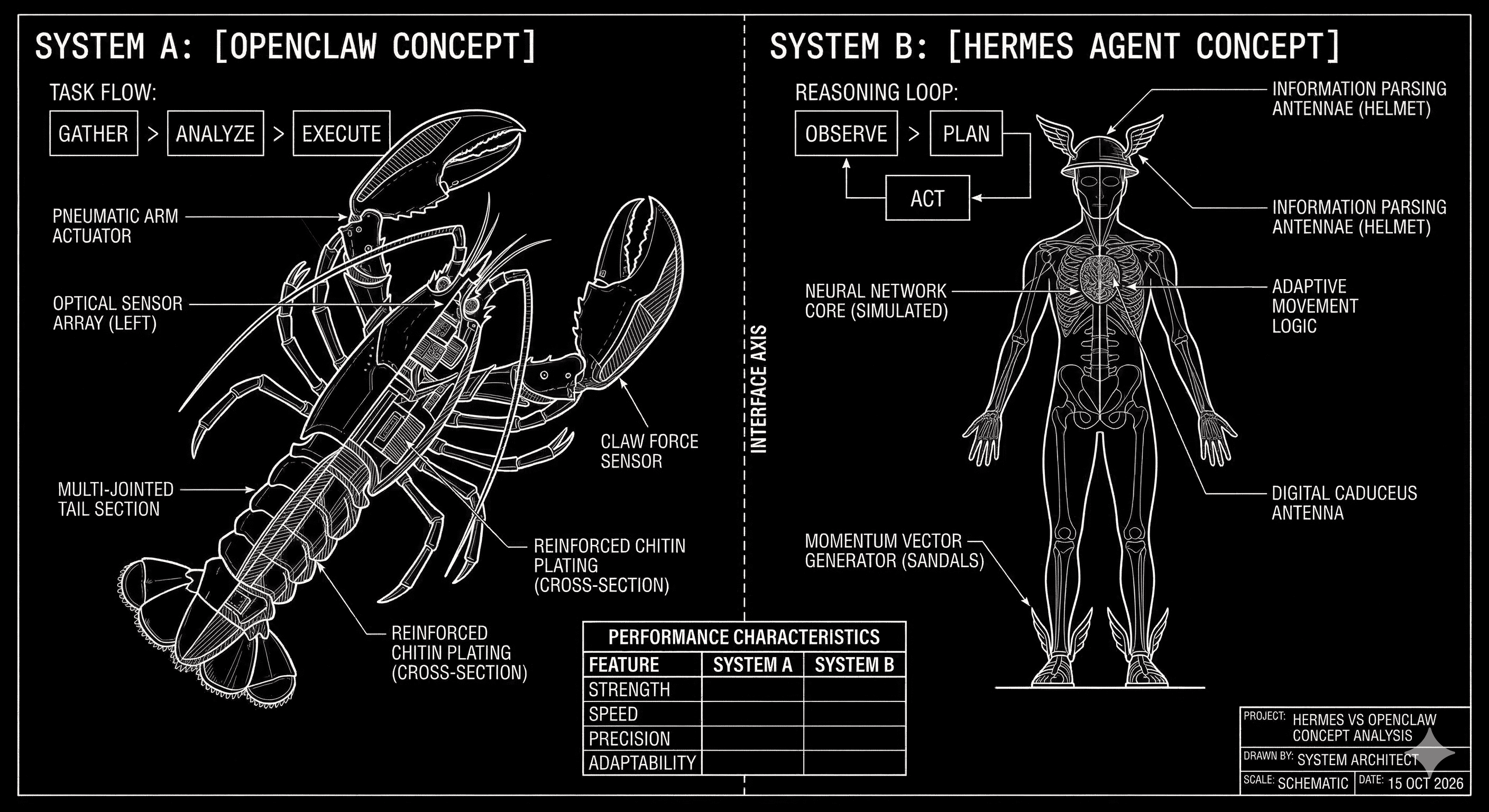

Hermes Agent vs OpenClaw 2026: Head-to-Head

| Feature | Hermes Agent | OpenClaw | Winner |
|---|---|---|---|
| Self-improving skills | Built-in learning loop + auto-refine | Static human-authored skills | Hermes |
| Persistent memory | Multi-level, cross-session | Session-based | Hermes |
| Model support | 400+ (any OpenAI-compatible) | Strong but narrower | Hermes |
| Deployment | Fully self-hosted, local-first | Strong managed/cloud options | Tie |
| Ecosystem & channels | Growing fast | Mature (Slack, Discord, etc.) | OpenClaw |
| Migration path | Official hermes claw migrate tool | N/A | Hermes |
| Cost efficiency | Dynamic skill loading = lower tokens | Higher context usage | Hermes |

How to Get Started with Hermes Agent in Under 30 Minutes

1. One-command install (Linux/macOS/WSL):

2. Choose your inference provider during setup — this is where our unified AI API platform shines.

3. Run your first session:

4. Give it a complex task (e.g., “Build me a full-stack todo app with authentication and deploy it”). Watch it create reusable skills automatically.

Pro tip: Connect Hermes to our integrated AI API platform as your backend. You get instant access to 100+ frontier and open models, automatic fallback routing, zero-retention privacy options, and usage-based pricing that scales beautifully with persistent agents.
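To make "automatic fallback routing" concrete, here is a minimal Python sketch of the idea: try a list of models in order and return the first successful reply. It does not describe how our platform or Hermes Agent implements fallback internally. The function names, the model names, and the simulated outage in `fake_call` are all hypothetical.

```python
from typing import Callable, Sequence


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order; return (model, reply) from the first that succeeds."""
    errors = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def fake_call(model: str, prompt: str) -> str:
    """Stand-in for a real API call; the 'primary' model simulates an outage."""
    if model == "primary-model":
        raise TimeoutError("simulated timeout")
    return f"[{model}] reply to: {prompt}"


used, reply = complete_with_fallback("ping", ["primary-model", "backup-model"], fake_call)
print(used)
print(reply)
```

For a persistent agent that runs around the clock, this pattern matters more than for a one-off script: a single provider outage would otherwise stall every scheduled task until someone intervenes.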

Why Power Hermes Agent with a Unified Multi-Model AI API

Hermes is model-agnostic by design — that’s its superpower. But managing dozens of API keys, dealing with rate limits, and optimizing costs manually is painful.

Our platform solves this:

- One API key → access to GPT, Claude, Gemini, Llama 4, DeepSeek, MiniMax M2.7, Gemma 4, and 300+ more

- Smart model routing based on task complexity and cost

- Built-in moderation, logging, and audit trails (perfect for enterprise)

- Low latency + automatic retries

- Pay-per-use with generous free tier for testing agents
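As a rough illustration of routing by task complexity and cost, the sketch below picks the cheapest model whose capability tier covers an estimated task difficulty. Everything in it is an assumption made for the example: the catalog, the model names, the prices, the capability scale, and the keyword heuristic are invented and do not reflect real pricing or the platform's actual routing logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    capability: int        # 1 = light tasks, 3 = hardest tasks (made-up scale)
    cost_per_mtok: float   # illustrative price per million tokens, not a real quote


CATALOG = [
    ModelOption("small-model", capability=1, cost_per_mtok=0.10),
    ModelOption("medium-model", capability=2, cost_per_mtok=1.00),
    ModelOption("large-model", capability=3, cost_per_mtok=10.00),
]


def estimate_complexity(task: str) -> int:
    """Crude heuristic: long tasks and tasks that mention engineering work score higher."""
    score = 1
    if len(task.split()) > 12:
        score += 1
    if any(word in task.lower() for word in ("deploy", "refactor", "debug")):
        score += 1
    return score


def pick_model(task: str, catalog: list[ModelOption] = CATALOG) -> ModelOption:
    """Return the cheapest model whose capability covers the estimated complexity."""
    needed = estimate_complexity(task)
    eligible = [m for m in catalog if m.capability >= needed]
    return min(eligible, key=lambda m: m.cost_per_mtok)


print(pick_model("summarize this note").name)
print(pick_model("debug the login handler").name)
```

The design choice worth noting is the split between estimating difficulty and choosing a model: the heuristic can be replaced (for example, by a classifier) without touching the cost comparison.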

Result: Your Hermes Agent can switch to the best-suited model for coding, research, browser tasks, or creative work without any code changes.

Actionable Next Steps for Developers

- Deploy Hermes Agent today on a cheap VPS or even locally

- Connect it to our unified AI API for production-grade reliability

- Start with simple recurring tasks (cron jobs, research reports, code reviews) and let the learning loop do the rest

- Monitor skill growth with the built-in HUD dashboard or community tools like Hermes HUD

The agents that win in 2026 won’t be the ones with the most tools — they’ll be the ones that improve themselves.

Ready to run the most intelligent open-source agent in the world?

Start building with Hermes Agent + our integrated AI API platform today. One-click model access, zero lock-in, and the infrastructure your self-evolving agent deserves.

Drop your questions in the comments or join our developer community — we’re actively testing Hermes integrations and sharing migration scripts.

Last updated: April 8, 2026

Sources: Official Hermes Agent docs, GitHub releases, Nous Research announcements, and community benchmarks.

