OpenAI's Industrial Policy for the Intelligence Age

Key Takeaways for API Developers & Compliance in 2026

On April 6, 2026, OpenAI released a 13-page policy blueprint titled "Industrial Policy for the Intelligence Age: Ideas to Keep People First." Released amid rapid advances toward superintelligence, the document outlines people-first ideas for addressing AI-driven job displacement, economic inequality, and societal risks. It also carries significant implications for developers building on AI APIs today.

Sam Altman and OpenAI are pushing for proactive policies as AI approaches superintelligence. (Fortune, March 2026)

Understanding the Proposal: Why OpenAI Is Speaking Up Now

OpenAI's document argues that incremental tweaks to existing regulations won't suffice as AI capabilities scale toward superintelligence. Instead, it calls for a comprehensive "industrial policy" — drawing parallels to the Progressive Era and New Deal responses to earlier technological revolutions.

The central thesis: AI must deliver broad prosperity, not concentrated power. Without deliberate policy, risks include massive job disruption, misuse, misalignment, and erosion of democratic institutions.

- Superintelligence is coming — It will accelerate scientific breakthroughs, lower costs, and boost productivity, but also displace entire job categories overnight.

- Keep people first — Policies should democratize access to AI, share economic gains, and build resilient safeguards.

- Public-private collaboration — OpenAI invites governments, companies, and civil society to a May 2026 workshop in Washington, DC.

"AI must deliver broad prosperity, not concentrated power — without deliberate policy, the risks are structural and irreversible."

Building an Open Economy

The document focuses heavily on mitigating AI's labor market impact and ensuring shared prosperity. Key proposals with direct relevance for developers and enterprises:

- Automated Labor Taxes ("Robot Taxes") — Taxes on AI-driven automation to fund worker transitions, paired with incentives for human-AI collaboration.

- Public Wealth Fund — Governments and AI companies seed a fund that invests in AI growth; returns distributed directly to citizens as an "AI dividend."

- Right to AI — Treating affordable AI access like electricity — expanded infrastructure, education, and subsidies for small businesses and underserved communities.

- Four-Day Workweek Pilots & Efficiency Dividends — Converting AI productivity gains into shorter workweeks, higher retirement contributions, and portable benefits.

- AI-First Entrepreneurship Support — Microgrants, "startup-in-a-box" tools, and training to help displaced workers launch AI-powered businesses.

Left: Concept of automated labor taxation. Right: AI enabling shorter workweeks — an "efficiency dividend" OpenAI advocates.

For API developers: if your application automates repetitive tasks or scales rapidly, expect increased scrutiny around job displacement. Platforms using OpenAI APIs at enterprise scale may soon need to track and report automation metrics for tax or compliance purposes.
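No reporting format for automation metrics exists yet, so the following is only a minimal sketch of what tracking could look like. The `AutomationLedger` class, the task names, and the split between "automated" and "human reviewed" are all hypothetical choices made for illustration, not anything the OpenAI document specifies.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AutomationLedger:
    """Running totals of tasks handled by AI alone versus tasks a person reviewed."""
    automated: Counter = field(default_factory=Counter)
    human_reviewed: Counter = field(default_factory=Counter)

    def record(self, task_type: str, human_in_loop: bool) -> None:
        # Hypothetical rule: a task counts as "human reviewed" when a person
        # approved or edited the AI output before it took effect.
        if human_in_loop:
            self.human_reviewed[task_type] += 1
        else:
            self.automated[task_type] += 1

    def summary(self) -> dict:
        """Per-task counts plus the share of work completed without human review."""
        report = {}
        for task in sorted(set(self.automated) | set(self.human_reviewed)):
            auto = self.automated[task]
            reviewed = self.human_reviewed[task]
            report[task] = {
                "automated": auto,
                "human_reviewed": reviewed,
                "automation_share": round(auto / (auto + reviewed), 3),
            }
        return report


ledger = AutomationLedger()
ledger.record("invoice_triage", human_in_loop=False)
ledger.record("invoice_triage", human_in_loop=False)
ledger.record("invoice_triage", human_in_loop=True)
ledger.record("contract_review", human_in_loop=True)
print(ledger.summary())
```

Keeping the counts per task type, rather than one global number, makes it easier to answer whichever question a future reporting rule ends up asking.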

Building a Resilient Society

The second pillar shifts to risk management and institutional safeguards — with direct implications for how developers build and deploy AI systems.

- Auditing Regimes — Strengthened independent audits for frontier models, with targeted oversight for high-risk systems while keeping lighter rules for smaller models.

- Incident Reporting & Model-Containment Playbooks — Mandatory reporting of misuse, near-misses, or dangerous leaks to public authorities.

- AI Trust Stack & Provenance — Standards for verifiable AI outputs, signatures, and logging without excessive surveillance.

- Mission-Aligned Corporate Governance — Encouraging public benefit corporation structures for frontier AI companies.

- Guardrails for Government Use & International Cooperation — High safety standards for public-sector AI and global information-sharing networks.
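The provenance item in the list above (verifiable outputs, signatures, logging) can be illustrated with a small sketch. The proposal does not name a signature scheme, so this example assumes HMAC-SHA256 with a shared secret, which only lets holders of the key verify a record. Publicly verifiable provenance would need an asymmetric scheme instead. The function names and record fields are hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: in a real deployment this key comes from a secrets manager.
SIGNING_KEY = b"replace-with-a-real-secret"


def sign_output(model: str, prompt: str, output: str, key: bytes = SIGNING_KEY) -> dict:
    """Attach a provenance record that binds an output to its model and prompt."""
    record = {
        "model": model,
        # Store a hash rather than the raw prompt to limit retained user data.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "output": output,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record


def verify_output(record: dict, key: bytes = SIGNING_KEY) -> bool:
    """Recompute the signature over the unsigned fields and compare in constant time."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record.get("signature", ""))


signed = sign_output("example-model", "Summarize Q3 revenue", "Revenue rose 4%.")
tampered = {**signed, "output": "Revenue rose 40%."}
print(verify_output(signed))    # True
print(verify_output(tampered))  # False
```

Hashing the prompt instead of storing it is one way to log enough for verification while retaining less user data, in line with the "without excessive surveillance" goal.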

What This Means for API Developers: Five Practical Implications

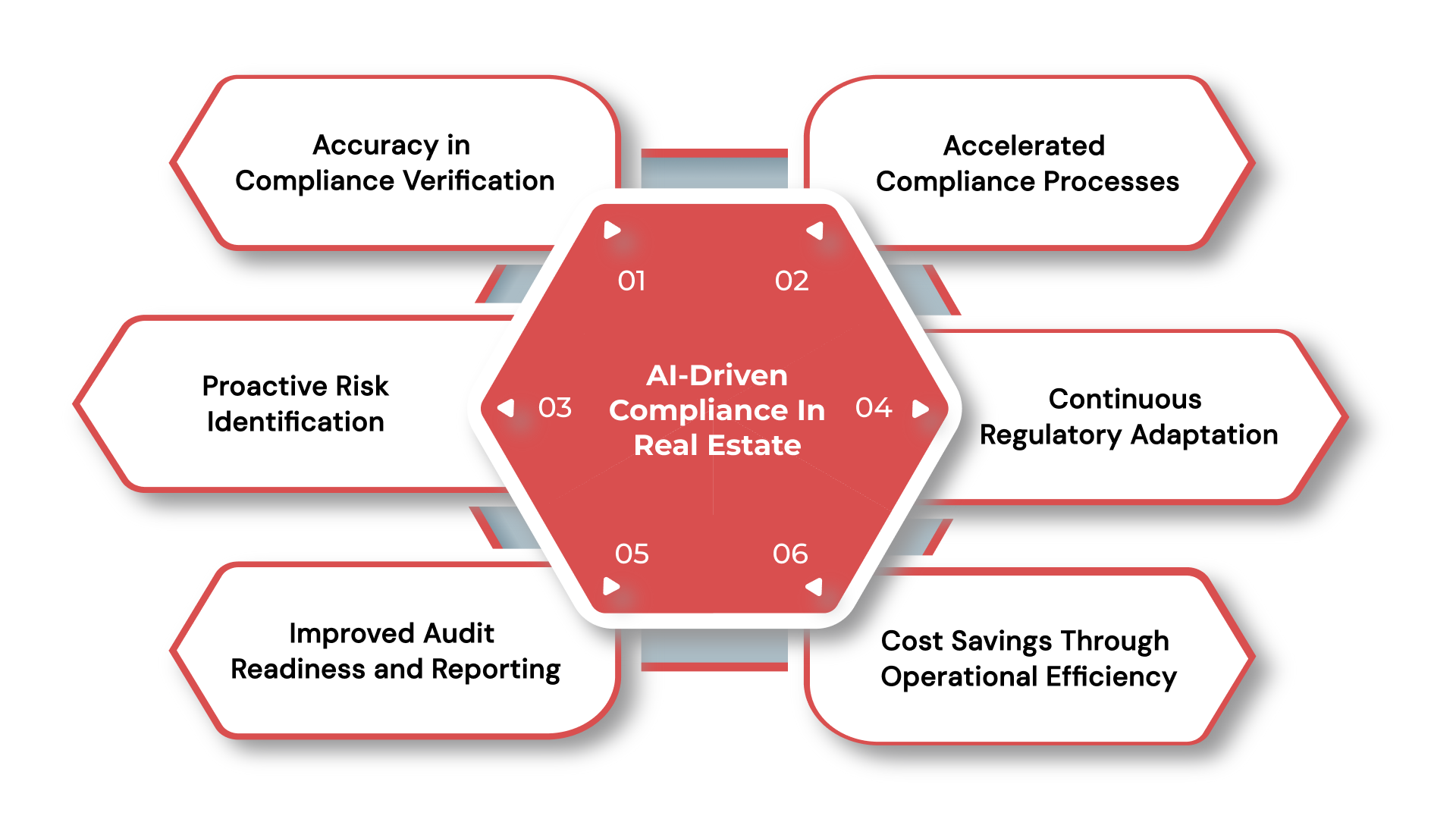

- Compliance Burden Will Increase — Expect new requirements for logging, auditing, and incident disclosure. High-risk applications (healthcare, finance, autonomous agents) may face mandatory risk classifications similar to the EU AI Act.

- Tax and Economic Reporting — Automated labor taxes could translate into usage-based fees or reporting obligations for heavy API consumers. Public wealth fund ideas may encourage companies to voluntarily contribute API credits to public initiatives.

- Product Design Shifts — "Right to AI" and portable benefits favor human-centered tools. Prioritize features that augment rather than replace workers — AI co-pilots with clear provenance and easy human oversight.

- Multi-Model Strategy Becomes Essential — OpenAI is advocating lighter regulation for non-frontier models. Combine OpenAI APIs with lighter, open-source, or regional alternatives to minimize compliance overhead.

- Enterprise Customers Will Demand Proof of Preparedness — Procurement teams will ask: "How does your AI stack align with these emerging policies?" Audit-ready logging and incident response plans become a competitive advantage.

AI compliance checklists are becoming standard — OpenAI's proposals will accelerate this trend significantly.

Actionable Steps: How to Prepare Today

Map every OpenAI API call to potential risk categories. Implement structured logging and provenance tracking now — before it's mandated.
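As a rough illustration of this step, the sketch below tags each model call with a risk tier and writes one JSON log line per call. The `RISK_TIERS` mapping, the use-case names, and the field names are assumptions made for the example. Actual risk categories would come from whatever rules eventually apply to your product.

```python
import hashlib
import json
import logging
import time
import uuid

logger = logging.getLogger("ai_audit")

# Hypothetical mapping from internal use cases to risk tiers.
RISK_TIERS = {
    "medical_summary": "high",
    "loan_prescreen": "high",
    "marketing_copy": "low",
}


def audit_record(use_case: str, model: str, prompt: str, response: str) -> dict:
    """Build one JSON-serializable audit entry for a single model call."""
    return {
        "event_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "use_case": use_case,
        # Unmapped use cases are surfaced for review instead of defaulting to "low".
        "risk_tier": RISK_TIERS.get(use_case, "unclassified"),
        "model": model,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
    }


def log_call(use_case: str, model: str, prompt: str, response: str) -> dict:
    """Emit the audit entry as a single structured log line and return it."""
    record = audit_record(use_case, model, prompt, response)
    logger.info(json.dumps(record, sort_keys=True))
    return record


entry = log_call("loan_prescreen", "example-model", "Applicant data...", "Eligible")
print(entry["risk_tier"])
```

Calling `log_call` from the one place in your codebase that wraps the provider SDK keeps the mapping complete, since no call can bypass it.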

Use OpenAI's moderation endpoint together with third-party tools for real-time monitoring. Document decision-making flows for future audits.
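One way to combine monitoring with a documented decision flow is sketched below. The `guarded_reply` function and the audit-trail format are hypothetical. `openai_flagged` assumes the official `openai` Python package and the `omni-moderation-latest` model name, either of which may change, and any other classifier with the same signature could be swapped in.

```python
from typing import Callable


def openai_flagged(text: str) -> bool:
    """Ask OpenAI's moderation endpoint whether the text is flagged.

    Requires the `openai` package and an OPENAI_API_KEY in the environment.
    """
    from openai import OpenAI  # imported lazily so the rest runs without the SDK

    client = OpenAI()
    response = client.moderations.create(model="omni-moderation-latest", input=text)
    return response.results[0].flagged


def guarded_reply(
    user_text: str,
    generate: Callable[[str], str],
    is_flagged: Callable[[str], bool],
    audit_trail: list,
) -> str:
    """Screen the input and the output, recording each decision for later audits."""
    if is_flagged(user_text):
        audit_trail.append({"stage": "input", "action": "blocked"})
        return "Sorry, this request can't be processed."
    reply = generate(user_text)
    if is_flagged(reply):
        audit_trail.append({"stage": "output", "action": "withheld"})
        return "The generated answer was withheld for review."
    audit_trail.append({"stage": "output", "action": "delivered"})
    return reply


# Offline demo with stand-in functions; pass `openai_flagged` as `is_flagged` in production.
trail = []
print(guarded_reply("hello", lambda t: t.upper(), lambda t: "attack" in t, trail))
print(trail)
```

Because every branch appends to the trail, the log doubles as documentation of which checks ran and what they decided.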

Integrate multiple providers via unified APIs. This reduces dependency on any single model's governance trajectory.
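A minimal sketch of provider fallback follows. It assumes each provider is wrapped in a plain function that takes a prompt and returns text. The provider names and stand-in functions are hypothetical, and a real integration would wrap each vendor's SDK behind the same signature.

```python
from typing import Callable, Sequence

Provider = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order and return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: each SDK raises its own types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-ins for real SDK wrappers.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def local_model(prompt: str) -> str:
    return f"[local] {prompt}"


name, text = complete_with_fallback(
    "ping", [("primary", flaky_primary), ("local", local_model)]
)
print(name, text)
```

Returning the provider name alongside the text lets the audit log record which model actually answered, which matters if providers end up under different compliance regimes.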

Add user-facing explanations, confidence scores, and edit controls. These align with the "AI Trust Stack" vision and improve user trust.

Submit feedback to newindustrialpolicy@openai.com and participate in the May workshop. Early participants may have more influence on how the proposals evolve.

Factor potential "efficiency dividends" or automated labor taxes into your pricing models — especially if your app scales to thousands of users.
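To make the pricing point concrete, here is a small worked sketch. Every number in it is hypothetical: the policy document sets no levy rate, and this example simply assumes a levy charged as a percentage of API spend, then solves for the per-user price that keeps a target gross margin.

```python
def monthly_price(api_cost_per_user: float, levy_rate: float, target_margin: float) -> float:
    """Per-user price that preserves the target margin after a levy on API spend.

    price = cost * (1 + levy_rate) / (1 - target_margin)
    """
    if not 0 <= target_margin < 1:
        raise ValueError("target_margin must be in [0, 1)")
    loaded_cost = api_cost_per_user * (1 + levy_rate)
    return round(loaded_cost / (1 - target_margin), 2)


# Sensitivity check across a few hypothetical levy rates.
for rate in (0.0, 0.02, 0.05):
    print(f"levy {rate:.0%}: {monthly_price(4.00, rate, 0.60)} per user per month")
```

Running the same function over a range of rates shows how much headroom a pricing plan has before a levy would force a price change.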

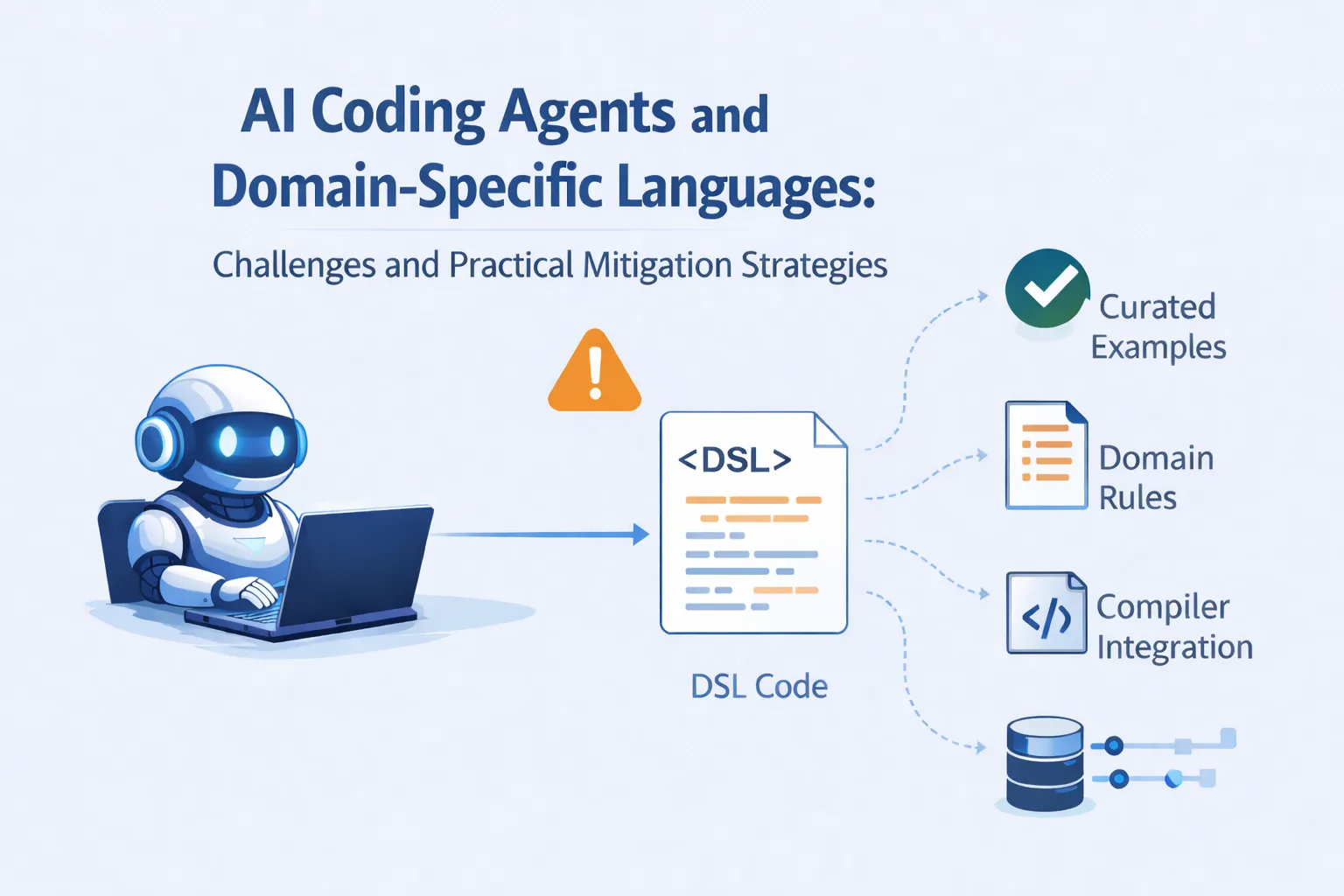

Developers building with AI coding agents and APIs must now factor policy alignment into architecture decisions. (Microsoft Azure Dev Blog)

Proactive Compliance Is Now a Competitive Advantage

OpenAI's Industrial Policy is not binding regulation, at least not yet, but its proposals carry considerable weight. Teams that adopt proactive compliance, human-centric design, and diversified infrastructure now will be better placed if these ideas become rules. Align your applications with these principles today.
