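// Node.js example
const { OpenAI } = require('openai');

// Point the OpenAI SDK at the api.ai.cc endpoint.
const api = new OpenAI({
  baseURL: 'https://api.ai.cc/v1',
  apiKey: '', // insert your API key here
});

const main = async () => {
  const result = await api.chat.completions.create({
    model: 'deepseek/deepseek-v4-flash',
    messages: [
      {
        role: 'system',
        content: 'You are an AI assistant who knows everything.',
      },
      {
        role: 'user',
        content: 'Tell me, why is the sky blue?',
      },
    ],
  });

  // The reply text is in the first choice's message.
  const message = result.choices[0].message.content;
  console.log(`Assistant: ${message}`);
};

// Surface request errors instead of leaving the promise rejection unhandled.
main().catch(console.error);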

# Python example
from openai import OpenAI

# Point the OpenAI SDK at the api.ai.cc endpoint.
client = OpenAI(
    base_url="https://api.ai.cc/v1",
    api_key="",  # insert your API key here
)

response = client.chat.completions.create(
    model="deepseek/deepseek-v4-flash",
    messages=[
        {
            "role": "system",
            "content": "You are an AI assistant who knows everything.",
        },
        {
            "role": "user",
            "content": "Tell me, why is the sky blue?",
        },
    ],
)

# The reply text is in the first choice's message.
message = response.choices[0].message.content
print(f"Assistant: {message}")

DeepSeek V4 Flash

A 284B-parameter Mixture-of-Experts model engineered for fast, affordable inference without sacrificing reasoning depth. Thirteen billion parameters active per forward pass. One million tokens of context.

What Is DeepSeek V4 Flash?

DeepSeek V4 Flash is the efficiency-first member of DeepSeek's fourth-generation model family. It sits alongside V4 Pro as a complementary option: where Pro optimizes for maximum intelligence, Flash optimizes for throughput, latency, and cost per token while giving up comparatively little quality.

The model uses a sparse Mixture-of-Experts design: it carries 284 billion parameters in total, but only 13 billion are active for any given token in a forward pass. That translates directly into lower compute and lower cost per token, while keeping outputs sharper than a dense 13B model would achieve on its own.
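The arithmetic behind that claim can be sketched as follows. The parameter counts come from the text above. The rule of thumb of roughly 2 FLOPs per active parameter per token is a common approximation, assumed here and not a figure published for this model.

```python
# Parameter counts as stated in the text above.
TOTAL_PARAMS = 284e9
ACTIVE_PARAMS = 13e9


def active_fraction(total: float, active: float) -> float:
    """Share of the model's weights used for a single token."""
    return active / total


def approx_flops_per_token(active: float) -> float:
    """Rough forward-pass cost: ~2 FLOPs per active parameter (assumed rule of thumb)."""
    return 2.0 * active


fraction = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
sparse_flops = approx_flops_per_token(ACTIVE_PARAMS)
dense_flops = approx_flops_per_token(TOTAL_PARAMS)  # hypothetical dense 284B model

print(f"Active share of weights: {fraction:.1%}")
print(f"Approx. compute vs. a dense 284B model: {dense_flops / sparse_flops:.1f}x lower")
```

Under these assumptions, fewer than 5% of the weights take part in each token. Sparsity reduces compute per token, not the storage footprint: all 284B parameters still have to be held in memory.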

Architecture & Key Innovations

Several architectural decisions separate V4 Flash from earlier DeepSeek releases and from the broader open-source field.

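The model was pre-trained on more than 32 trillion diverse, high-quality tokens. Post-training used a two-stage pipeline: first, domain-specific experts were trained independently via supervised fine-tuning (SFT) and reinforcement learning (RL) with Group Relative Policy Optimization (GRPO); second, those experts were consolidated into a single model via on-policy distillation.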
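For readers unfamiliar with GRPO, its central idea is to score each sampled response relative to the other responses drawn for the same prompt, instead of against a learned value function. The sketch below shows only that group-relative advantage step, using made-up reward values and the population standard deviation (implementations differ on this detail). It is not DeepSeek's training code, and the specifics of the V4 pipeline are not described here.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against its own group's mean and standard deviation."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    # eps guards against division by zero when every reward in the group is equal.
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four responses sampled from one prompt.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Responses that beat their group's average receive a positive advantage and are reinforced; those below it receive a negative one.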

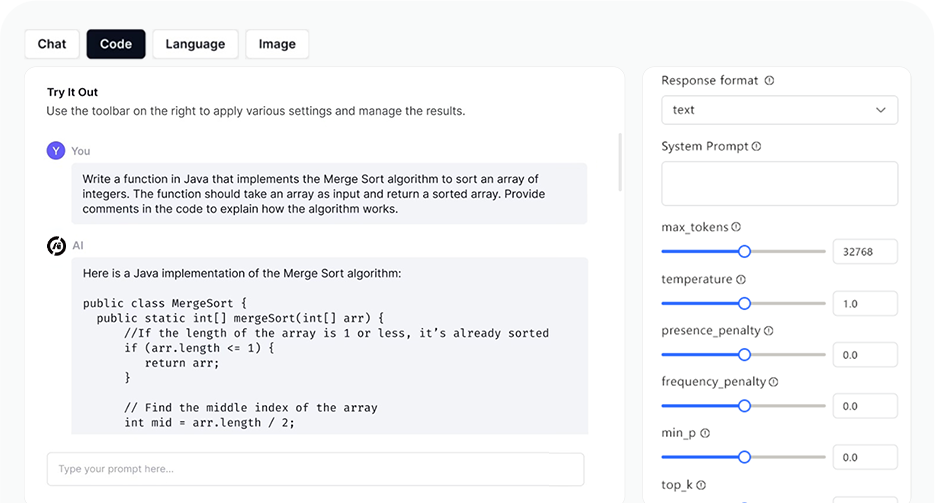

Reasoning Modes

V4 Flash supports three configurable reasoning-effort modes, which give direct control over the latency/quality trade-off without switching models.

Benchmark Performance

On the Artificial Analysis Intelligence Index v4.0 (covering GDPval-AA, GPQA Diamond, HLE, IFBench, SciCode, Terminal-Bench, and others), V4 Flash in reasoning mode scores 47 versus an open-weight median of 28.

Use Cases

V4 Flash is positioned as the cost-effective default for most serving scenarios — the model you reach for first unless maximum frontier intelligence is explicitly required.

- Coding Assist: Long-context repo understanding, diff review, and autocomplete at high throughput. 1M-token context absorbs entire medium codebases in a single call.

- RAG Pipelines: High-volume retrieval synthesis where cache hits reduce input costs to fractions of a cent. Ideal for document-heavy Q&A production workloads.

- Agentic: Multi-step tool-calling loops. Performs on par with V4 Pro on simple agent tasks, at 3–4× lower cost per token.

- Document Processing: 1M-token context absorbs entire contracts, codebases, or report archives in a single call, with no chunking required.

- Math / STEM: Think Max mode produces frontier-level formal reasoning at a fraction of Pro pricing. Scores 95.2 on HMMT February 2026.

- Chat & Support: Sub-second TTFT and 84 t/s throughput keep conversational latency imperceptible in real-time applications.
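To put the latency figures in the last item in concrete terms, a rough end-to-end estimate is time to first token plus output length divided by throughput. The sketch below uses the 84 t/s figure quoted above and takes 1.0 s as an assumed upper bound for "sub-second" TTFT. The 500-token reply length is purely illustrative.

```python
def estimated_response_seconds(output_tokens: int,
                               tokens_per_second: float = 84.0,
                               ttft_seconds: float = 1.0) -> float:
    """Approximate wall-clock time for a full reply: TTFT plus decode time."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return ttft_seconds + output_tokens / tokens_per_second


reply_tokens = 500  # illustrative reply length, not a figure from the text
total = estimated_response_seconds(reply_tokens)
print(f"~{total:.1f} s for a {reply_tokens}-token reply")
```

With streaming enabled, the user starts reading after the TTFT and does not wait for the full duration, which is why TTFT matters more than total time for perceived responsiveness in chat.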

How It Compares
