// Node.js example
const { OpenAI } = require('openai');

const api = new OpenAI({
  baseURL: 'https://api.ai.cc/v1',
  // Read the key from the environment; the variable name is illustrative.
  apiKey: process.env.AICC_API_KEY ?? '',
});

const main = async () => {
  const result = await api.chat.completions.create({
    model: 'deepseek/deepseek-v4-pro',
    messages: [
      {
        role: 'system',
        content: 'You are an AI assistant who knows everything.',
      },
      {
        role: 'user',
        content: 'Tell me, why is the sky blue?',
      },
    ],
  });

  // The reply text lives on the first choice.
  const message = result.choices[0].message.content;
  console.log(`Assistant: ${message}`);
};

main().catch(console.error);

# Python example
import os

from openai import OpenAI

client = OpenAI(
    base_url="https://api.ai.cc/v1",
    # Read the key from the environment; the variable name is illustrative.
    api_key=os.environ.get("AICC_API_KEY", ""),
)

response = client.chat.completions.create(
    model="deepseek/deepseek-v4-pro",
    messages=[
        {
            "role": "system",
            "content": "You are an AI assistant who knows everything.",
        },
        {
            "role": "user",
            "content": "Tell me, why is the sky blue?",
        },
    ],
)

# The reply text lives on the first choice.
message = response.choices[0].message.content
print(f"Assistant: {message}")

DeepSeek V4 Pro

The largest open-weights model available: 1.6T total parameters, a 1M-token context window, and 49B parameters active per token. It is the first architecture to make million-token context economically viable at production scale.

What Is DeepSeek V4 Pro?

DeepSeek V4 Pro is the flagship model of DeepSeek's fourth-generation release. It is the largest open-weights model currently available: larger than Kimi K2.6 (1.1T parameters) and more than twice the size of its predecessor, DeepSeek V3.2 (685B parameters).

Using a Mixture-of-Experts (MoE) design, V4 Pro activates only 49 billion parameters per token, roughly 3% of its total parameter count. At a one-million-token context, it requires just 27% of the inference FLOPs and 10% of the KV cache size that V3.2 needs. Those are not incremental improvements; they represent a step-change in what is economically feasible at production scale.
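The ratios quoted here follow directly from the parameter counts. A quick check, using only the figures stated in this section (the variable names are illustrative):

```python
# Figures taken from the text (parameter counts in billions).
TOTAL_PARAMS_B = 1600   # V4 Pro total: 1.6T
ACTIVE_PARAMS_B = 49    # parameters active per token
V32_PARAMS_B = 685      # DeepSeek V3.2 total

# Share of the model that does work for any single token.
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
# How much larger V4 Pro is than its predecessor.
size_ratio = TOTAL_PARAMS_B / V32_PARAMS_B

print(f"Active share per token: {active_fraction:.1%}")  # 3.1%
print(f"Size relative to V3.2:  {size_ratio:.2f}x")      # 2.34x
```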

Three Innovations Behind the Efficiency

For most models, a million-token context window is a marketing feature. At that scale, standard attention is quadratically expensive: memory balloons, inference slows, and costs multiply. DeepSeek addressed this with three architectural innovations, developed and published before the V4 launch.
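To make the quadratic cost concrete, here is a minimal sketch of how the work in standard (dense) attention grows with context length. It illustrates the problem only, not DeepSeek's solution, and the function name is hypothetical.

```python
def dense_attention_scores(seq_len: int) -> int:
    """Query-key scores computed by one head of standard dense attention."""
    # Every token attends to every token, so the count is seq_len squared.
    return seq_len * seq_len

short_ctx, long_ctx = 100_000, 1_000_000
growth = dense_attention_scores(long_ctx) / dense_attention_scores(short_ctx)

# prints "10x longer context -> 100x more attention scores per head"
print(f"{long_ctx // short_ctx}x longer context -> {growth:.0f}x more attention scores per head")
```

A tenfold increase in context length means a hundredfold increase in attention work per head, which is why long context is costly without architectural changes.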

Benchmark Results

V4 Pro is competitive with top closed-source models across reasoning, coding, and knowledge benchmarks. On SWE-bench Verified it scores within 0.2 points of Claude Opus 4.6 at roughly one-seventh the output cost.

Reasoning Effort Modes

V4 Pro supports configurable reasoning modes, so you can trade speed against depth according to what each task requires rather than paying for maximum thinking on every call.
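This page does not name the modes or the request field that selects them. As a rough sketch, assuming the endpoint honors the OpenAI-style `reasoning_effort` field with the values `low`, `medium`, and `high` (an assumption to verify against the provider's API reference), a per-task choice might look like the following. `EFFORT_BY_TASK` and `build_request` are hypothetical helpers.

```python
# Hypothetical mapping from task type to effort level. The level names follow
# the OpenAI SDK's `reasoning_effort` values and are NOT confirmed for V4 Pro.
EFFORT_BY_TASK = {
    "lookup": "low",
    "summarize": "medium",
    "debug": "high",
}

def build_request(task: str, prompt: str) -> dict:
    """Build keyword arguments for client.chat.completions.create()."""
    return {
        "model": "deepseek/deepseek-v4-pro",
        "messages": [{"role": "user", "content": prompt}],
        # Assumed field name; unknown task types fall back to the middle setting.
        "reasoning_effort": EFFORT_BY_TASK.get(task, "medium"),
    }

request = build_request("debug", "Why does this test fail intermittently?")
print(request["reasoning_effort"])  # high
```

The resulting dictionary can be passed to the client from the Python example at the top of the page as `client.chat.completions.create(**request)`.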

Who Should Use DeepSeek V4 Pro?

V4 Pro's 1M context, strong agentic coding performance, and competitive pricing make it suited to a specific class of workloads.

- Full Codebase: Load an entire medium-sized repository into context. State-of-the-art results on Terminal-Bench and SWE-bench support cross-file refactoring, bug investigation, and architectural review without truncation.

- Agentic Tasks: Multi-step automation, research synthesis, and complex workflow execution where the agent must track state across many turns. Leads open-source models on agentic coding benchmarks.

- Math / STEM: Beats all current open-weight models on math and STEM benchmarks and is competitive with top closed-source models on GPQA Diamond. Suitable for technical research and scientific reasoning.

- Knowledge RAG: Ranks first among open models for world knowledge, trailing only Gemini 3.1 Pro overall. Enterprises building RAG pipelines or document Q&A systems will find V4 Pro's recall noticeably above that of peer open-source models.
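For the full-codebase use case in the list above, one possible way to assemble a repository into a single prompt is sketched below. The four-characters-per-token estimate is a rough heuristic rather than the model's real tokenizer, and `pack_repository` is a hypothetical helper, not part of any SDK.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4         # rough heuristic, NOT the model's actual tokenizer
CONTEXT_BUDGET = 1_000_000  # context window size stated on this page, in tokens

def pack_repository(root: str, suffixes=(".py", ".js", ".md"), budget=CONTEXT_BUDGET) -> str:
    """Concatenate source files under `root` until the estimated budget is reached."""
    parts, used = [], 0
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        cost = len(text) // CHARS_PER_TOKEN + 1  # estimated tokens for this file
        if used + cost > budget:
            break  # stop here rather than cut a file off mid-way
        # Label each file so the model can refer to it by path.
        parts.append(f"### File: {path.relative_to(root)}\n{text}")
        used += cost
    return "\n\n".join(parts)
```

The returned string can be sent as the `content` of a user message. In practice, leave headroom in the budget for the question itself and for the model's reply.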

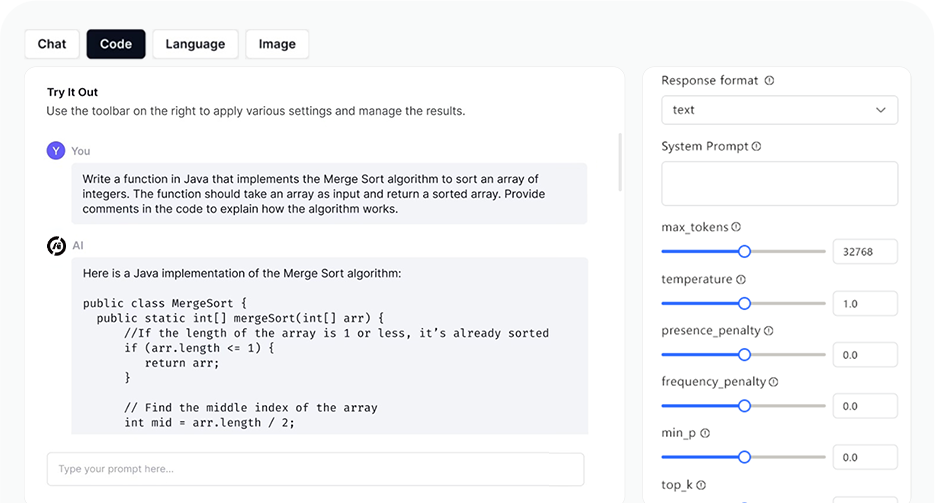
