JavaScript (Node.js)

    const { OpenAI } = require('openai');

    // The OpenAI SDK is pointed at the api.ai.cc endpoint.
    const api = new OpenAI({
      baseURL: 'https://api.ai.cc/v1',
      apiKey: '', // insert your API key here
    });

    const main = async () => {
      const result = await api.chat.completions.create({
        model: 'minimax/m2-5-20260218',
        messages: [
          {
            role: 'system',
            content: 'You are an AI assistant who knows everything.',
          },
          {
            role: 'user',
            content: 'Tell me, why is the sky blue?',
          },
        ],
      });

      // The reply text is in the first choice.
      const message = result.choices[0].message.content;
      console.log(`Assistant: ${message}`);
    };

    main();

Python

    from openai import OpenAI

    # The OpenAI SDK is pointed at the api.ai.cc endpoint.
    client = OpenAI(
        base_url="https://api.ai.cc/v1",
        api_key="",  # insert your API key here
    )

    response = client.chat.completions.create(
        model="minimax/m2-5-20260218",
        messages=[
            {
                "role": "system",
                "content": "You are an AI assistant who knows everything.",
            },
            {
                "role": "user",
                "content": "Tell me, why is the sky blue?",
            },
        ],
    )

    # The reply text is in the first choice.
    message = response.choices[0].message.content
    print(f"Assistant: {message}")

MiniMax-M2.5

MiniMax-M2.5 and MiniMax-M2.5 Highspeed cover two kinds of AI workload. The standard model targets quality-first text generation and conversational automation, while the Highspeed variant targets low-latency, real-time deployment at twice the per-token price.

What Is MiniMax-M2.5?

MiniMax-M2.5 is a general-purpose large language model developed by MiniMax. It is designed for a wide range of natural language applications, from chatbots and virtual assistants to automated content generation and document analysis pipelines.

MiniMax-M2.5 API

The flagship general-purpose language model from MiniMax, built for instruction following, nuanced reasoning, and high-fidelity content generation. It is designed for workloads where response quality and contextual depth are the primary objectives.

- Optimized for quality-first text generation tasks

- Native prompt caching for cost reduction on repeated prompts

- Broad domain knowledge across technical and creative domains

- Extended context window for long-document processing

- Ideal for asynchronous pipelines and batch workloads

- Competitively priced per-token billing for scalable use
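The batch use case in the list above can be sketched as a small helper that runs independent prompts through a thread pool. This is a minimal sketch, not an official client feature: `complete_one` and `complete_batch` are hypothetical names, and the code assumes the `client` from the Python example at the top of the page can be shared across worker threads.

```python
from concurrent.futures import ThreadPoolExecutor


def complete_one(client, model: str, prompt: str) -> str:
    """Send one single-turn prompt and return the reply text."""
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


def complete_batch(client, model: str, prompts: list[str], max_workers: int = 4) -> list[str]:
    """Run prompts concurrently; results are returned in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the order of `prompts` regardless of finish order.
        return list(pool.map(lambda p: complete_one(client, model, p), prompts))
```

A call such as `complete_batch(client, "minimax/m2-5-20260218", prompts)` would then return one reply per prompt. `max_workers` is worth keeping small until the rate limits of your account are known.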

Pricing

- Input: $0.39 / 1M tokens

- Output: $1.56 / 1M tokens

MiniMax-M2.5 Highspeed API

A throughput-optimized variant of M2.5 engineered for latency-sensitive applications. It targets faster time-to-first-token and higher requests-per-second capacity than the standard model, making it the go-to choice for live user interactions and high-traffic services.

- Ultra-low latency for real-time chat applications

- High-throughput capacity for concurrent request spikes

- Maintains response coherence under extreme load conditions

- Tailored for voice interfaces, streaming UIs, and live agents

- Optimized token streaming for progressive rendering

- Same core intelligence as M2.5, faster delivery pipeline
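Token streaming for progressive rendering, as mentioned in the list above, might look like the following with the OpenAI Python SDK's `stream=True` option. This is a sketch under assumptions: `stream_text` is a hypothetical helper, and the model ID for the Highspeed variant is not given on this page, so it is passed in as a parameter and not hard-coded.

```python
from typing import Iterator


def stream_text(client, model: str, messages: list[dict]) -> Iterator[str]:
    """Yield reply text piece by piece as the server streams it."""
    stream = client.chat.completions.create(
        model=model,
        messages=messages,
        stream=True,  # ask for incremental chunks, not one final message
    )
    for chunk in stream:
        # Some chunks carry no choices or no text (e.g. role or finish markers).
        if not chunk.choices:
            continue
        piece = chunk.choices[0].delta.content
        if piece:
            yield piece
```

A UI would typically render each piece as it arrives, for example `for piece in stream_text(client, model, messages): print(piece, end="", flush=True)`.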

Pricing

- Input: $0.78 / 1M tokens

- Output: $3.12 / 1M tokens
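To compare the two price lists, the per-request cost can be worked out from token counts. The sketch below copies the four prices from this page; the dictionary keys `"m2.5"` and `"m2.5-highspeed"` are labels chosen for illustration, not API model IDs, and `estimate_cost` is a hypothetical helper.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the pricing lists on this page.
# The keys are illustrative labels, not API model identifiers.
PRICES = {
    "m2.5": {"input": Decimal("0.39"), "output": Decimal("1.56")},
    "m2.5-highspeed": {"input": Decimal("0.78"), "output": Decimal("3.12")},
}


def estimate_cost(variant: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the estimated cost in USD for one request."""
    price = PRICES[variant]
    total = price["input"] * input_tokens + price["output"] * output_tokens
    return total / Decimal(1_000_000)


# A request with 2,000 input tokens and 500 output tokens:
print(estimate_cost("m2.5", 2_000, 500))            # 0.00156
print(estimate_cost("m2.5-highspeed", 2_000, 500))  # 0.00312
```

At the listed prices the Highspeed variant costs exactly twice as much as the standard model for the same token counts. Prompt caching discounts are not modeled here because the page does not state a cached-token rate.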

Built for Every Layer of Your AI Stack

Conversational AI & Chatbots

Power multi-turn, context-aware conversations for customer service, support automation, and virtual assistant platforms with natural, coherent dialogue management.
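Multi-turn context with a chat completions endpoint is usually handled client-side by resending earlier turns with each request. The class below is a minimal sketch of that pattern; `Conversation` and its `max_messages` cap are hypothetical, and the cap is a simple stand-in for real token-based trimming.

```python
class Conversation:
    """Keeps chat history and resends the most recent turns on every request."""

    def __init__(self, client, model: str, system_prompt: str, max_messages: int = 20):
        self.client = client
        self.model = model
        self.system = {"role": "system", "content": system_prompt}
        self.max_messages = max_messages
        self.history: list[dict] = []

    def ask(self, user_text: str) -> str:
        self.history.append({"role": "user", "content": user_text})
        # Always send the system prompt, plus only the newest messages.
        recent = self.history[-self.max_messages:]
        response = self.client.chat.completions.create(
            model=self.model,
            messages=[self.system, *recent],
        )
        reply = response.choices[0].message.content
        # Store the reply so the next turn can refer back to it.
        self.history.append({"role": "assistant", "content": reply})
        return reply
```

With the `client` from the Python example at the top of the page, `chat = Conversation(client, "minimax/m2-5-20260218", "You are a support agent.")` followed by repeated `chat.ask(...)` calls would carry earlier turns forward.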

Content Generation

Automate the creation of articles, marketing copy, product descriptions, social media posts, and long-form editorial content at scale without sacrificing quality.

Document Intelligence

Summarize, classify, extract key information from, and answer questions about contracts, reports, research papers, and enterprise documents using extended context.
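Key-information extraction can be sketched as a prompt that asks for a JSON object, followed by defensive parsing of the reply. This is an illustration only: `extract_fields` is a hypothetical helper, the prompt wording is an assumption, and the code does not rely on any structured-output mode because this page does not say whether one is supported.

```python
import json


def extract_fields(client, model: str, document: str, fields: list[str]) -> dict:
    """Ask the model for the named fields and return them as a dict."""
    instructions = (
        "Extract the following fields from the document and reply with a single "
        "JSON object using exactly these keys: " + ", ".join(fields) + ". "
        "Use null for any field the document does not state."
    )
    response = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system", "content": instructions},
            {"role": "user", "content": document},
        ],
    )
    raw = response.choices[0].message.content or ""
    empty = {name: None for name in fields}

    # Models sometimes wrap JSON in prose or code fences, so parse only the
    # span from the first "{" to the last "}".
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        return empty
    try:
        data = json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return empty
    if not isinstance(data, dict):
        return empty
    # Keep only the requested keys; anything missing becomes None.
    return {name: data.get(name) for name in fields}
```

Returning `None` for missing or unparseable fields keeps downstream code simple, at the cost of hiding whether the model failed or the document was silent. A production pipeline would likely log the raw reply as well.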

AI Agent Workflows

Serve as the reasoning backbone for autonomous agents, enabling complex task decomposition, tool selection, multi-step planning, and iterative self-correction cycles.
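The tool-selection and self-correction loop described above can be sketched with the OpenAI-style `tools` parameter. Whether this endpoint accepts `tools` for MiniMax-M2.5 is an assumption, not something this page confirms; `run_agent`, `handlers`, and `max_steps` are hypothetical names for illustration.

```python
import json


def run_agent(client, model: str, messages: list[dict], tools: list[dict],
              handlers: dict, max_steps: int = 5) -> str:
    """Call the model repeatedly, executing requested tools until it answers."""
    messages = list(messages)  # work on a copy, leave the caller's list alone
    for _ in range(max_steps):
        response = client.chat.completions.create(
            model=model, messages=messages, tools=tools
        )
        msg = response.choices[0].message
        if not msg.tool_calls:
            return msg.content  # no tool requested: this is the final answer

        # Echo the assistant's tool request back into the history.
        messages.append({
            "role": "assistant",
            "content": msg.content,
            "tool_calls": [
                {"id": c.id, "type": "function",
                 "function": {"name": c.function.name,
                              "arguments": c.function.arguments}}
                for c in msg.tool_calls
            ],
        })
        for call in msg.tool_calls:
            try:
                handler = handlers[call.function.name]
                result = handler(**json.loads(call.function.arguments))
            except Exception as exc:
                # Report the failure to the model so it can correct itself.
                result = {"error": f"{type(exc).__name__}: {exc}"}
            messages.append({
                "role": "tool",
                "tool_call_id": call.id,
                "content": json.dumps(result),
            })
    raise RuntimeError("agent did not produce a final answer within max_steps")
```

Here `handlers` maps each tool name to a local Python function, and `tools` holds the matching JSON schema descriptions. The `max_steps` cap is a design choice to stop runaway loops and the cost that comes with them.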

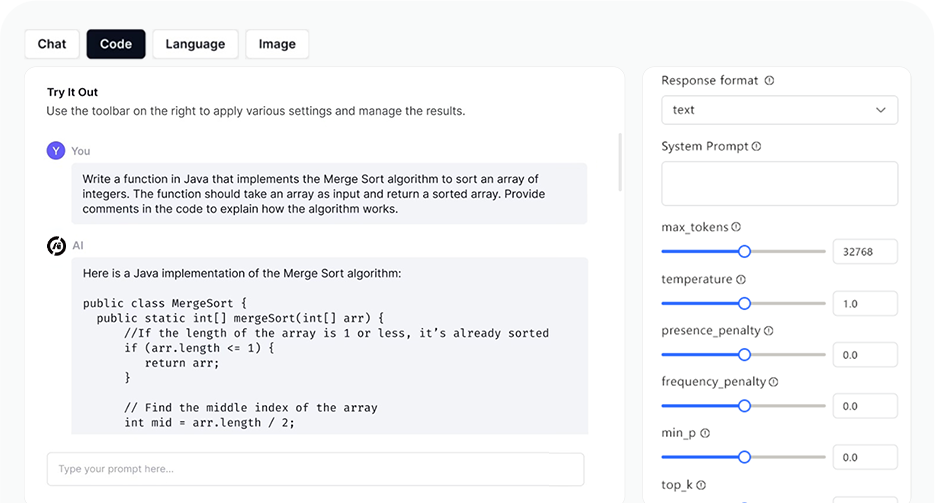
