What MiniMax M2.7 Is

MiniMax M2.7 is MiniMax's latest flagship text model, built for real-world software engineering and complex production workloads. Its core architecture centers on recursive self-improvement and multi-agent collaboration, with strong reported performance in debugging, log analysis, code generation, and long-form document creation.

Unlike previous models that excelled mainly at polyglot coding and multi-step reasoning in controlled benchmarks, M2.7 was specifically engineered for live production environments. It brings strong causal reasoning capabilities, the kind needed to understand, diagnose, and fix issues inside actual running systems, not just sandbox tests.

Benchmark Results

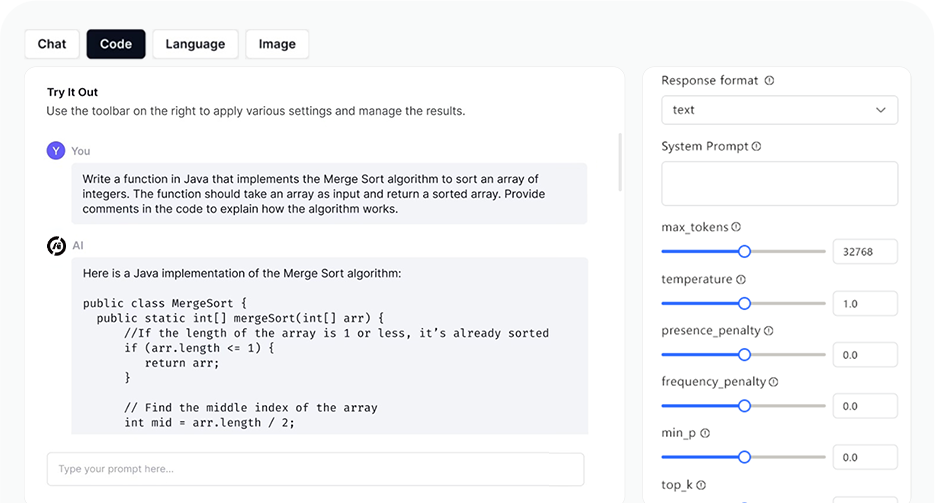

Most benchmark comparisons tell you how a model performs on carefully curated academic tests. The interesting thing about M2.7's numbers is where they come from: production-grade scaffolds, terminal-based engineering challenges, and real document-editing workflows.

Optimization Focus

Software Engineering

Live debugging, root cause analysis, log reading, code security review, and multi-file refactors. In SRE contexts, recovery time for production incidents has reportedly been cut to under three minutes.

Multi-Agent Coordination

Plans, executes, and refines tasks across dynamic environments through multi-agent collaboration. Can orchestrate sub-agents with distinct roles and communication protocols.
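
To make the idea of sub-agents with distinct roles concrete, here is a minimal sketch of a sequential plan, execute, refine pipeline. It is purely illustrative: `SubAgent`, `Orchestrator`, and the lambda handlers are hypothetical names, the handlers are stand-ins for model calls, and nothing here reflects MiniMax's actual orchestration API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Message:
    sender: str   # role of the sub-agent that produced the content
    content: str


@dataclass
class SubAgent:
    role: str
    handle: Callable[[str], str]  # assumption: stand-in for a real model call


@dataclass
class Orchestrator:
    agents: List[SubAgent]
    transcript: List[Message] = field(default_factory=list)

    def run(self, task: str) -> str:
        """Pass the task through each sub-agent in order, logging every hand-off."""
        current = task
        for agent in self.agents:
            current = agent.handle(current)
            self.transcript.append(Message(agent.role, current))
        return current


if __name__ == "__main__":
    pipeline = Orchestrator([
        SubAgent("planner", lambda t: f"plan[{t}]"),
        SubAgent("executor", lambda t: f"execute[{t}]"),
        SubAgent("refiner", lambda t: f"refine[{t}]"),
    ])
    print(pipeline.run("fix flaky test"))
    for msg in pipeline.transcript:
        print(msg.sender, "->", msg.content)
```

A real system would likely add branching, retries, and a shared message protocol; the linear hand-off is only the simplest shape the description allows.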

Office Document Generation

End-to-end creation and editing of Word, Excel, and PowerPoint files. Achieves 97% skill adherence on complex multi-round office tasks.

Financial Modeling

Handles structured financial workflows including multi-step spreadsheet logic, data aggregation pipelines, and report generation.

Long-Context Reasoning

204,800-token context window with full automatic cache support. Prompt caching is built-in for repeated or system-prompt-heavy workflows.
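
As a rough illustration of what a 204,800-token window means when sizing a request, the sketch below checks whether a system prompt and user input fit while leaving room for the response. The four-characters-per-token ratio is a crude assumption for English text, not MiniMax's tokenizer, and the function names and the 8,000-token output reserve are hypothetical.

```python
CONTEXT_WINDOW_TOKENS = 204_800  # figure quoted in the text above
CHARS_PER_TOKEN = 4              # assumption: rough heuristic, not the real tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate using ceiling division; use a real tokenizer in practice."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(system_prompt: str, user_input: str,
                    reserved_output_tokens: int = 8_000) -> bool:
    """Return True if the prompt plus the reserved output budget fits the window."""
    used = estimate_tokens(system_prompt) + estimate_tokens(user_input)
    return used + reserved_output_tokens <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    system_prompt = "You are an SRE assistant. " * 50
    log_dump = "2024-01-01 ERROR timeout while calling upstream\n" * 2_000
    print("estimated tokens:", estimate_tokens(system_prompt) + estimate_tokens(log_dump))
    print("fits:", fits_in_context(system_prompt, log_dump))
```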

High-Speed Variant

Identical output quality at approximately 100 TPS, roughly 3x faster than the base variant, for latency-sensitive applications.

Technical Comparison

M2.7 is not a drop-in replacement for every use case, but on coding and agent tasks it competes at the frontier tier.

| Criterion | MiniMax M2.7 | Claude Opus 4.6 | GPT-5 |
|---|---|---|---|
| SWE-Pro (Coding) | 56.2% | ~58% (est.) | ~57% (est.) |
| Input token price | $0.30/M | ~$15/M | ~$10/M |
| Output token price | $1.20/M | ~$75/M | ~$30/M |
| Speed (TPS) | 44–100 | ~30–50 | ~40–80 |
| Open weights | ✓ Available | ✗ No | ✗ No |
| Self-evolving | ✓ Yes | ✗ No | ✗ No |
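
To see what the per-token prices in the table imply for a bill, the following sketch computes the cost of a hypothetical workload. The prices are copied from the table (the Claude Opus 4.6 and GPT-5 figures are approximate there, so they are approximate here too); the workload of 50M input and 10M output tokens is an invented example, not a measured one.

```python
# (input $/M tokens, output $/M tokens), taken from the comparison table.
PRICES_PER_MILLION = {
    "MiniMax M2.7": (0.30, 1.20),
    "Claude Opus 4.6": (15.00, 75.00),  # approximate, per the table
    "GPT-5": (10.00, 30.00),            # approximate, per the table
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a workload at the listed per-million-token prices."""
    in_price, out_price = PRICES_PER_MILLION[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Assumption: an illustrative monthly workload, not real usage data.
    input_tokens, output_tokens = 50_000_000, 10_000_000
    for model in PRICES_PER_MILLION:
        cost = workload_cost(model, input_tokens, output_tokens)
        print(f"{model:<16} ${cost:,.2f}")
```

At these list prices the example workload comes to about $27 on M2.7, against roughly $1,500 and $800 on the other two columns. Caching discounts and tiered pricing, which the table does not cover, would change the totals.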

Who Should Use M2.7?

01. DevOps & SRE Teams: Building incident response agents that correlate monitoring metrics with code repositories.

02. ML Research Infra: Teams running experiment pipelines who want an AI that can monitor, debug, and optimize its own scaffolds.

03. Doc Automation: Organizations generating large volumes of financial reports, legal documents, and data summaries.

04. Frontier Startups: Startups looking to cut heavy API costs from Claude Opus or GPT-5 while keeping frontier-level performance.

05. Parallel Systems: Workloads that need fast parallel inference for large-scale data processing or simulations.

06. Framework Devs: A backend for harnesses like Claude Code or Kilo Code, with 75.8% tool-calling accuracy.

Real-World Scenarios

Multi-agent Game Development

M2.7 was given a brief to build a six-player "Who Am I?" party game. Without any human intervention, the model wrote the server-side game logic and the client-facing web page, then ran the game from start to finish in a single agentic session.

PostgreSQL Production Incident

M2.7 correctly identified the root cause of a performance drop and proposed a fix using PostgreSQL's CONCURRENTLY syntax, understanding the non-blocking requirement without being explicitly told.
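
The source does not say which statement the model proposed. As one plausible instance, the sketch below assumes the fix was a missing index and builds a `CREATE INDEX CONCURRENTLY` statement, which lets PostgreSQL build the index without blocking writes to the table. The helper name and the table, column, and index names are hypothetical.

```python
import re

# Conservative whitelist for unquoted SQL identifiers, to avoid injection
# when interpolating names into the statement.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def build_concurrent_index_sql(index_name: str, table_name: str, columns: list) -> str:
    """Return a CREATE INDEX CONCURRENTLY statement for the given names."""
    if not columns:
        raise ValueError("at least one column is required")
    for ident in (index_name, table_name, *columns):
        if not _IDENTIFIER.fullmatch(ident):
            raise ValueError(f"unsafe identifier: {ident!r}")
    cols = ", ".join(columns)
    return (
        f"CREATE INDEX CONCURRENTLY IF NOT EXISTS {index_name} "
        f"ON {table_name} ({cols});"
    )


if __name__ == "__main__":
    # Hypothetical names. Note that CREATE INDEX CONCURRENTLY cannot run inside
    # a transaction block, so a database driver must execute it in autocommit mode.
    print(build_concurrent_index_sql("idx_orders_customer_id", "orders", ["customer_id"]))
```

The trade-off is that a concurrent build takes longer than a plain `CREATE INDEX` and can leave an invalid index behind if it fails, so the result should be checked before relying on it.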

Autonomous Kaggle Competition

Across three 24-hour trials, M2.7 built training pipelines and iterated on its decisions independently, earning 9 gold medals and placing narrowly behind Opus 4.6 and GPT-5.4.
