const main = async () => {
  // Send the generation request. Append your API key after 'Bearer '.
  const response = await fetch('https://api.ai.cc/v2/generate/audio', {
    method: 'POST',
    headers: {
      Authorization: 'Bearer ',
      'Content-Type': 'application/json',
    },
    body: JSON.stringify({
      model: 'minimax/music-2.6',
      prompt: 'A calm and soothing instrumental music with gentle piano and soft strings.',
      // Section tags such as [Verse] and [Chorus] mark the song structure.
      lyrics: `[Verse]\nStreetlights flicker, the night breeze sighs\nShadows stretch as I walk alone\nAn old coat wraps my silent sorrow\nWandering, longing, where should I go\n[Chorus]\nPushing the wooden door, the aroma spreads\nIn a familiar corner, a stranger gazes back\nWarm lights flicker, memories awaken\nIn this small cafe, I find my way\n[Verse]\nRaindrops tap on the windowpane\nA melody plays, soft and low\nThe clink of cups, the murmur of dreams\nIn this haven, I find my home\n[Chorus]\nPushing the wooden door, the aroma spreads\nIn a familiar corner, a stranger gazes back\nWarm lights flicker, memories awaken\nIn this small cafe, I find my way`,
    }),
  }).then((res) => res.json()); // Parse the JSON response body.

  console.log('Generation:', response);
};

main();

import requests


def main():
    # Send the generation request. Append your API key after 'Bearer '.
    response = requests.post('https://api.ai.cc/v2/generate/audio', headers={
        'Authorization': 'Bearer ',
        'Content-Type': 'application/json',
    }, json={
        'model': 'minimax/music-2.6',
        'prompt': 'A calm and soothing instrumental music with gentle piano and soft strings.',
        # Section tags such as [Verse] and [Chorus] mark the song structure.
        'lyrics': '''[Verse]
Streetlights flicker, the night breeze sighs
Shadows stretch as I walk alone
An old coat wraps my silent sorrow
Wandering, longing, where should I go
[Chorus]
Pushing the wooden door, the aroma spreads
In a familiar corner, a stranger gazes back
Warm lights flicker, memories awaken
In this small cafe, I find my way
[Verse]
Raindrops tap on the windowpane
A melody plays, soft and low
The clink of cups, the murmur of dreams
In this haven, I find my home
[Chorus]
Pushing the wooden door, the aroma spreads
In a familiar corner, a stranger gazes back
Warm lights flicker, memories awaken
In this small cafe, I find my way''',
    }).json()  # Parse the JSON response body.

    print('Generation:', response)


if __name__ == '__main__':
    main()

MUSIC 2.6

MiniMax Music 2.6 is a next-generation music generation model designed to produce complete, structured songs from text prompts and lyrics.

API Overview

MiniMax Music 2.6 is a text-to-audio model that generates full musical tracks by combining style descriptions, lyrics, and structural instructions. Instead of assembling fragments, it creates end-to-end compositions with intros, verses, choruses, and transitions that follow a defined musical arc.

One of the defining changes in this version is the shift toward intent-aware composition. The model doesn’t just interpret genre tags; it also follows how a track should evolve over time, including buildup, tension, and release.

Capabilities

Full-Length Song Generation

Unlike earlier AI music systems that output short loops, Music 2.6 generates complete songs with vocals and instrumentals. A single prompt can result in a track that includes structure, arrangement, and performance dynamics.

Cover Generation & Style Transfer

The Cover feature extracts the melodic core of an existing track and reinterprets it in a new style, for example turning a folk melody into an electronic track while keeping the melody recognizable.

Intelligence

Understanding Musical Progression

Music 2.6 follows temporal progression, not just static prompts. It can start with minimal instrumentation, gradually introduce layers, and build toward a defined climax.

Prompt-Level Direction

Users can describe how a track should evolve, for example by specifying emotional transitions or energy curves, and the model reflects that in the final output.
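As a rough illustration of prompt-level direction, the sketch below composes a single `prompt` string from a style and an ordered list of stages. The helper name `describe_progression` and the "Progression:" wording are assumptions made for this example; the source does not define any required syntax for describing energy curves, only that the prompt is free text.

```python
def describe_progression(style, stages):
    """Compose a prompt from a style plus ordered (section, description) stages.

    Hypothetical helper: the output format is illustrative, not a documented syntax.
    """
    steps = "; ".join(f"{name}: {desc}" for name, desc in stages)
    return f"{style}. Progression: {steps}."


prompt = describe_progression(
    "Cinematic electronic track",
    [
        ("intro", "sparse piano, low energy"),
        ("build", "add strings and a steady pulse"),
        ("climax", "full drums and brass, high energy"),
        ("outro", "fade back to solo piano"),
    ],
)
print(prompt)
```

The resulting string could be passed as the `prompt` field of the request shown in the code samples.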

Balanced Vocal & Instrument Mixing

The model introduces more natural handling of vocals and melody, including subtle imperfections that make tracks feel less mechanical and more human.

Sound Design

Enhanced Low-Frequency Response

Bass and low-end frequencies are handled better, resulting in tighter drums and clearer sub-bass, which matters most for electronic and cinematic scoring.

Production-Ready Output

Audio is generated at up to a 44.1 kHz sample rate and a 256 kbps bitrate, making it suitable for real-world use rather than just prototyping.
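To get a feel for what a 256 kbps bitrate means for storage, the following sketch estimates the size of a track from its duration. It assumes a constant bitrate at the stated maximum and ignores container overhead; the source does not say whether output is constant or variable bitrate, so treat the result as an upper-bound estimate. The function name is hypothetical.

```python
def estimated_size_bytes(duration_seconds, bitrate_kbps=256):
    """Estimate encoded audio size, assuming constant bitrate and no container overhead."""
    # 1 kbps = 1000 bits per second; 8 bits per byte.
    return duration_seconds * bitrate_kbps * 1000 // 8


size = estimated_size_bytes(180)  # a three-minute track
print(f"{size / 1_000_000:.2f} MB")  # 5.76 MB
```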

Genre Versatility

Covers styles from ambient and lo-fi to orchestral and high-energy electronic, maintaining coherence within each specific style.

Feature Breakdown

| Capability | How It Works | Why It Matters |
|---|---|---|
| Structure tags | Define sections inside lyrics | Predictable song composition |
| Cover feature | Extracts melody and reinterprets style | Enables creative remixes |
| Prompt-based control | Describes mood and progression | Fine creative direction |
| Low-end optimization | Improved bass and drums | Better listening experience |
| Auto lyrics | Generates lyrics from prompt | Faster ideation |
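The structure tags in the table can be illustrated with a small helper that assembles a `lyrics` string from sections. The bracketed `[Verse]` and `[Chorus]` tags follow the request example on this page; the helper name `build_lyrics` is hypothetical, and any other tag names would be an assumption.

```python
def build_lyrics(sections):
    """Join (tag, lines) pairs into one lyrics string with bracketed section tags."""
    parts = []
    for tag, lines in sections:
        parts.append(f"[{tag}]")  # section marker, e.g. [Verse]
        parts.extend(lines)       # one lyric line per entry
    return "\n".join(parts)


lyrics = build_lyrics([
    ("Verse", [
        "Streetlights flicker, the night breeze sighs",
        "Shadows stretch as I walk alone",
    ]),
    ("Chorus", [
        "Pushing the wooden door, the aroma spreads",
    ]),
])
print(lyrics)
```

Building lyrics from structured data like this keeps section order explicit, which makes it easier to reorder or repeat a chorus before sending the request.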

Use Cases

Content Creation

Tailored background music for video scenes, avoiding licensing constraints.

Game Audio

Dynamic soundtracks that match gameplay intensity, from exploration to combat.

Personalized Experiences

Playlists and songs tailored to user preferences, mood, or context.

Developer

Agent Integration

Designed to work within agent-based systems, generating music automatically based on context or user behavior.

Automation Workflows

Pipelines where music is generated dynamically, adapting soundtracks in real time based on environmental signals or user input.
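One way such a pipeline might translate an input signal into a request is sketched below. The intensity thresholds, prompt texts, and function names are all illustrative assumptions; only the `model` and `prompt` field names and the model id come from the request example on this page. Whether `lyrics` may be omitted from the body is also an assumption here.

```python
import json

MODEL = "minimax/music-2.6"  # model id taken from the request example


def prompt_for_intensity(intensity):
    """Map a 0.0-1.0 intensity signal to a style prompt (thresholds are illustrative)."""
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be between 0.0 and 1.0")
    if intensity < 0.3:
        return "Calm ambient exploration music with soft pads and slow tempo."
    if intensity < 0.7:
        return "Tense mid-tempo orchestral music with rising strings."
    return "High-energy combat music with driving drums and aggressive brass."


def build_request_body(intensity):
    """Serialize a request body; omitting 'lyrics' is an assumption, not documented."""
    return json.dumps({"model": MODEL, "prompt": prompt_for_intensity(intensity)})


body = build_request_body(0.85)
print(body)
```

The serialized body could then be sent with the same POST call shown in the code samples, keeping the signal-to-prompt mapping separate from the network code.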

Performance

Reduced Generation Latency

Significantly reduces the time between prompt and output, allowing for rapid creative iteration.

Fine-Grained Instruction

Parameters like tempo, key, and emotional tone are followed with high precision directly from the prompt.
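Since these parameters are given in the prompt text rather than as separate request fields (as far as the request example shows), one option is to keep them in a small structure and render them into a prompt. The `TrackSpec` class and its output wording are hypothetical, intended only as a sketch of how tempo, key, and tone might be stated consistently.

```python
from dataclasses import dataclass


@dataclass
class TrackSpec:
    """Hypothetical container for musical parameters stated in the prompt."""
    genre: str
    tempo_bpm: int
    key: str
    mood: str

    def to_prompt(self):
        # Render the parameters as one plain-text sentence for the 'prompt' field.
        return f"{self.genre}, {self.tempo_bpm} BPM, in {self.key}, {self.mood} mood."


spec = TrackSpec(genre="Lo-fi hip hop", tempo_bpm=80, key="D minor", mood="nostalgic")
print(spec.to_prompt())  # Lo-fi hip hop, 80 BPM, in D minor, nostalgic mood.
```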

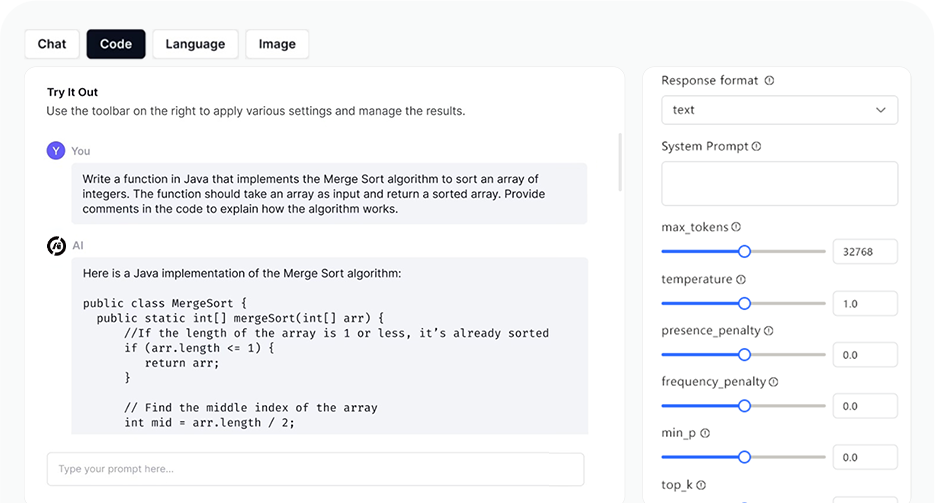
