How Ai2 Uses Virtual Simulation Data to Develop Advanced Physical AI Systems

Virtual simulation data is accelerating the advancement of physical AI within corporate environments, spearheaded by initiatives such as Ai2’s MolmoBot.

Teaching robots to interact with the real world has historically depended on costly, manually collected demonstrations. Most technology providers developing generalist manipulation agents rely on extensive real-world training data to build these systems.

For context, the DROID project gathered 76,000 teleoperated trajectories across 13 institutions, amounting to approximately 350 hours of human labor. Similarly, Google DeepMind’s RT-1 required 130,000 episodes collected over 17 months by human operators. This dependence on proprietary, manual data collection increases research costs and concentrates capabilities within a small number of well-funded industrial labs.

Ali Farhadi, CEO of Ai2, said: “Our mission is to build AI that advances science and expands what humanity can discover.” He continued, “Robotics can become a foundational scientific instrument, helping researchers accelerate progress and explore novel questions. To achieve this, systems must generalize effectively to real-world scenarios and provide tools the global research community can collaboratively build upon. Demonstrating successful transfer from simulation to reality is a crucial milestone.”

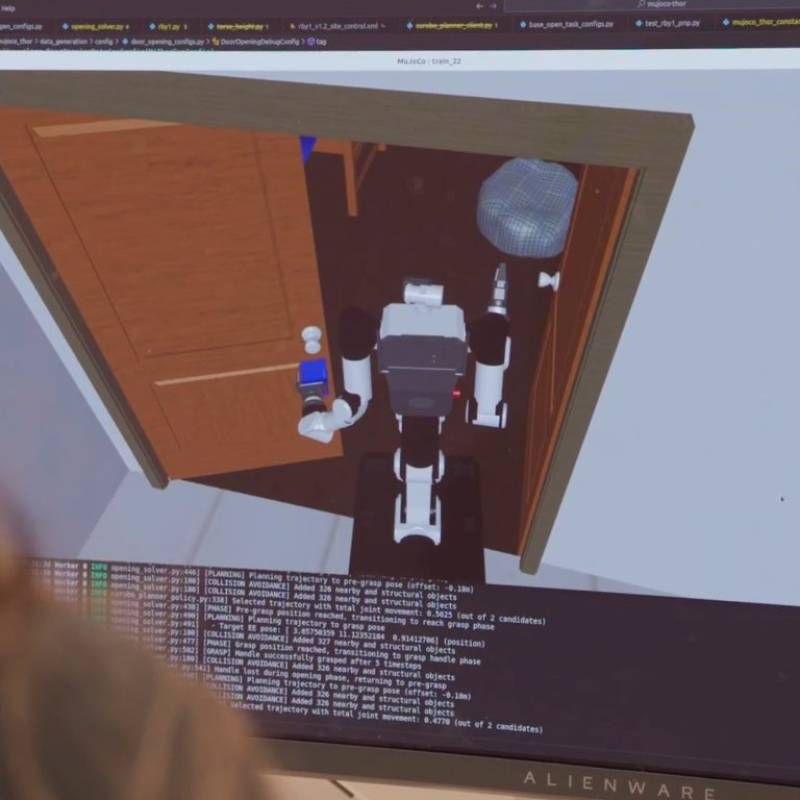

Researchers at the Allen Institute for AI (Ai2) propose a different economic model with MolmoBot, an open robotic manipulation model suite trained exclusively on synthetic data. By procedurally generating trajectories within a virtual environment named MolmoSpaces, the team removes the need for manual teleoperation.

The accompanying dataset, MolmoBot-Data, contains 1.8 million expert manipulation trajectories. It was produced by coupling the MuJoCo physics engine with domain randomisation that varies objects, viewpoints, lighting, and dynamics.
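To make the idea of domain randomisation concrete, the following is a minimal sketch of sampling one configuration per episode along the four axes named above (objects, viewpoints, lighting, dynamics). It is not Ai2's pipeline and calls no MuJoCo or MolmoSpaces API: every name, object category, and numeric range here is a hypothetical placeholder chosen for illustration.

```python
import random
from dataclasses import dataclass

# Hypothetical placeholders: these categories and ranges are NOT taken from
# MolmoSpaces or MolmoBot-Data; they only illustrate the sampling pattern.
OBJECT_CATEGORIES = ["mug", "bowl", "box", "bottle"]


@dataclass(frozen=True)
class EpisodeConfig:
    """One randomised scene description (illustrative fields only)."""
    object_category: str         # which object to manipulate
    camera_azimuth_deg: float    # viewpoint: rotation around the scene
    camera_elevation_deg: float  # viewpoint: height angle of the camera
    light_intensity: float       # lighting: relative brightness
    friction: float              # dynamics: contact friction coefficient
    mass_scale: float            # dynamics: multiplier on nominal object mass


def sample_episode_config(rng: random.Random) -> EpisodeConfig:
    """Draw every randomised parameter independently and uniformly."""
    return EpisodeConfig(
        object_category=rng.choice(OBJECT_CATEGORIES),
        camera_azimuth_deg=rng.uniform(-60.0, 60.0),
        camera_elevation_deg=rng.uniform(15.0, 60.0),
        light_intensity=rng.uniform(0.3, 1.5),
        friction=rng.uniform(0.5, 1.2),
        mass_scale=rng.uniform(0.8, 1.25),
    )


def sample_configs(n: int, seed: int) -> list[EpisodeConfig]:
    """Sample n configurations reproducibly from a single seed."""
    rng = random.Random(seed)
    return [sample_episode_config(rng) for _ in range(n)]


if __name__ == "__main__":
    for cfg in sample_configs(3, seed=0):
        print(cfg)
```

In a real generator, each sampled configuration would be applied to the simulator's scene before an expert policy records a trajectory. Seeding the generator keeps every episode reproducible, which matters when a dataset is regenerated or extended.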

Ranjay Krishna, Director of the PRIOR team at Ai2, explains the approach: “Most methods attempt to reduce the sim-to-real gap by adding more real-world data. Instead, we bet that increasing the diversity of simulated environments, objects, and camera viewpoints dramatically shrinks this gap.” This shifts the bottleneck in robotics from collecting manual demonstrations to designing more effective virtual worlds, a challenge solvable with current technology.

High-Volume Simulation for Physical AI Training

The training pipeline used 100 NVIDIA A100 GPUs and produced about 1,024 episodes per GPU-hour. This translates to over 130 hours of robot experience for every hour of wall-clock time.
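The arithmetic behind these figures can be checked with a short calculation. This is a rough sketch built on two assumptions the article does not confirm: that the 130-hour figure refers to the full 100-GPU cluster, and that all 1.8 million trajectories in MolmoBot-Data were generated at the quoted rate. The function names are illustrative.

```python
# Figures quoted in the article.
NUM_GPUS = 100
EPISODES_PER_GPU_HOUR = 1024
ROBOT_HOURS_PER_WALL_HOUR = 130   # "over 130", so treat as a lower bound
DATASET_TRAJECTORIES = 1_800_000


def episodes_per_wall_hour(num_gpus: int, episodes_per_gpu_hour: int) -> int:
    """Cluster-wide episode rate, assuming all GPUs run in parallel."""
    return num_gpus * episodes_per_gpu_hour


def implied_episode_seconds(robot_hours: float, episodes: int) -> float:
    """Average simulated episode length implied by the two quoted rates.

    Assumption: the robot-hours figure is for the whole cluster.
    """
    return robot_hours * 3600 / episodes


def wall_hours_for_dataset(trajectories: int, episodes_per_hour: int) -> float:
    """Wall-clock hours to generate the dataset at the quoted rate.

    Assumption: every trajectory is produced at this rate, with no
    discarded or failed episodes.
    """
    return trajectories / episodes_per_hour


if __name__ == "__main__":
    rate = episodes_per_wall_hour(NUM_GPUS, EPISODES_PER_GPU_HOUR)
    print(f"episodes per wall-clock hour: {rate}")
    print(f"implied seconds per episode: "
          f"{implied_episode_seconds(ROBOT_HOURS_PER_WALL_HOUR, rate):.2f}")
    print(f"wall-clock hours for dataset: "
          f"{wall_hours_for_dataset(DATASET_TRAJECTORIES, rate):.1f}")
```

Under these assumptions the cluster yields 102,400 episodes per wall-clock hour, which implies an average episode of roughly 4.6 simulated seconds and about 17.6 wall-clock hours to generate 1.8 million trajectories.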

Compared to traditional real-world data collection, this method produces nearly four times the data throughput, dramatically improving project ROI by shortening deployment cycles.

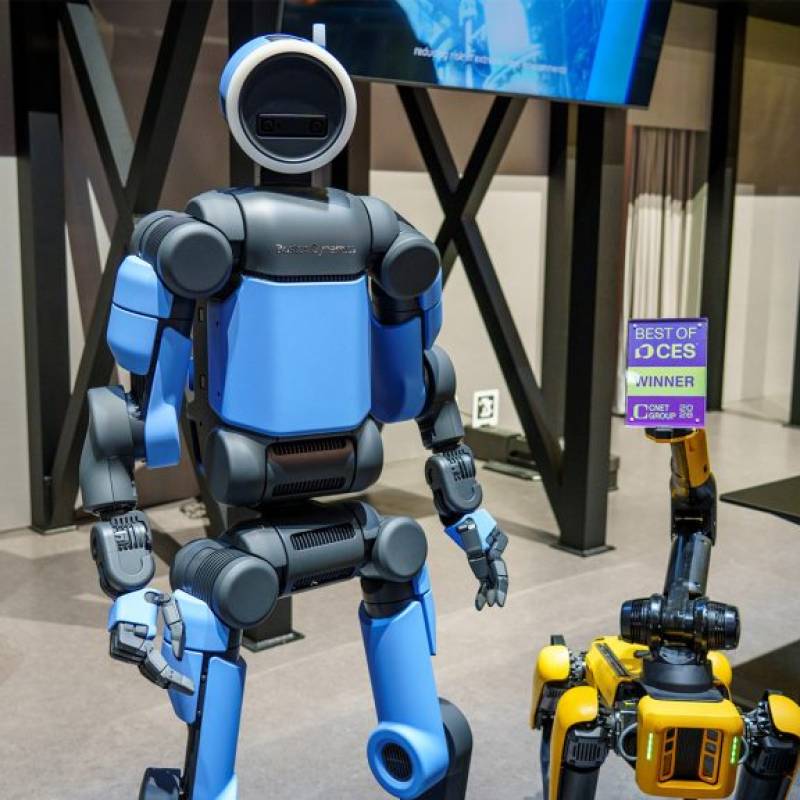

MolmoBot Suite & Hardware Compatibility

The MolmoBot suite features three distinct policy classes assessed on two platforms:

- Rainbow Robotics RB-Y1 mobile manipulator

- Franka FR3 tabletop robotic arm

The primary model uses a Molmo2 vision-language backbone, which takes RGB images from multiple timesteps together with natural language instructions and outputs robot actions.

Optimized Models for Edge Environments

For edge computing scenarios with limited resources, Ai2 provides:

- MolmoBot-SPOC: a lightweight transformer policy with fewer parameters.

- MolmoBot-Pi0: built on the PaliGemma backbone to align with Physical Intelligence’s π0 model for direct performance comparisons.
