AI System Incident Response: Preparation and Remediation Guide

AI technology offers tremendous opportunities, but it also carries inherent risks of malfunction and security compromise. Recent research from ISACA reveals a concerning gap in organizational preparedness: most respondents do not know how quickly their organization could halt an AI system in an emergency, and fewer than half are confident they could identify the root cause of a serious incident.

📊 Key Finding: According to ISACA's report, 59% of digital trust professionals do not know how quickly their organization could interrupt and halt an AI system during a security incident. Only 21% reported being able to intervene meaningfully within 30 minutes.

These figures suggest that a compromised AI system could keep operating without oversight, potentially causing irreversible damage to business operations and stakeholder trust.

Ali Sarrafi, CEO & Founder of Kovant, an autonomous enterprise platform, commented: "ISACA's findings point to a major structural issue in the way that organizations are deploying AI. Systems are being embedded into critical workflows without the governance layer needed to supervise and audit their actions. If a business cannot quickly halt an AI system, explain its behavior, or even identify who is to be held accountable, the business is not in control of that system."

⚠️ AI Failures and Critical Risk Factors

The research uncovered several alarming statistics regarding AI incident management capabilities:

- Only 42% of respondents were confident in their organization's ability to analyze and explain serious AI incidents, leaving operational failures and security vulnerabilities at risk of going undiagnosed

- Organizations that cannot explain an incident to regulators and leadership face legal penalties and public backlash

- Without proper analysis, organizations cannot learn from their mistakes, making repeat incidents more likely

🚨 Accountability Gap: 20% of respondents reported not knowing who would be responsible if an AI system caused damage. Only 38% identified the Board or an Executive as ultimately responsible.

In many organizations, effective AI governance is still missing. The absence of clear accountability frameworks and incident response protocols creates substantial operational and reputational risks.

🔧 Rethinking AI Management Architecture

Sarrafi argued that the answer is not to slow AI adoption but to rethink how AI is managed. He stated:

"AI systems need to sit in a structured management layer that treats them as digital employees, with clear ownership, defined escalation paths, and the ability to be paused or overridden instantly when risk thresholds are crossed. This way, agents stop being mysterious bots and become systems you can inspect and trust."

Key governance principles include:

- 🔹 Treating AI systems as digital employees with clear ownership structures

- 🔹 Establishing defined escalation paths for incident management

- 🔹 Implementing instant pause/override capabilities when risk thresholds are exceeded

- 🔹 Building governance into architecture from day one, not as an afterthought
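To make these principles more concrete, here is a minimal, hypothetical Python sketch of such a management layer. It wraps an agent with a named owner, an escalation path, a risk threshold that triggers an automatic pause, a manual kill switch that only the owner can release, and an audit log that supports explaining incidents after the fact. This is not Kovant's product or a design specified by ISACA. The class and field names, the 0 to 1 risk score (assumed to come from some upstream risk model), and the in-memory notification list are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional


@dataclass
class AuditEntry:
    """One record per attempted action, so an incident can be reconstructed later."""
    timestamp: str
    agent: str
    action: str
    risk: float
    outcome: str  # "executed", "escalated", or "blocked"


@dataclass
class GovernedAgent:
    """Hypothetical wrapper that treats an AI agent like a 'digital employee'."""
    name: str
    owner: str                   # named human accountable for this agent
    escalation_path: List[str]   # who else is notified, in order, when risk is high
    risk_threshold: float        # illustrative cut-off on an assumed 0-1 risk score
    paused: bool = False
    audit_log: List[AuditEntry] = field(default_factory=list)
    notifications: List[str] = field(default_factory=list)

    def pause(self, reason: str) -> None:
        """Kill switch: block all further actions until the owner resumes the agent."""
        self.paused = True
        self._notify(f"{self.name} paused: {reason}")

    def resume(self, approved_by: str) -> None:
        """Only the accountable owner may lift a pause (a human override)."""
        if approved_by != self.owner:
            raise PermissionError("only the owner can resume this agent")
        self.paused = False

    def _notify(self, message: str) -> None:
        # Stand-in for a real paging/ticketing system: records who would be told.
        for person in [self.owner, *self.escalation_path]:
            self.notifications.append(f"to {person}: {message}")

    def _record(self, action: str, risk: float, outcome: str) -> None:
        self.audit_log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent=self.name, action=action, risk=risk, outcome=outcome))

    def act(self, action: str, risk: float, execute: Callable[[], str]) -> Optional[str]:
        """Run `execute` only if the agent is not paused and risk is below the threshold."""
        if self.paused:
            self._record(action, risk, "blocked")
            return None
        if risk >= self.risk_threshold:
            # Threshold crossed: log, pause the whole agent, and escalate.
            self._record(action, risk, "escalated")
            self.pause(f"risk {risk:.2f} >= threshold {self.risk_threshold:.2f} on '{action}'")
            return None
        result = execute()
        self._record(action, risk, "executed")
        return result


# Usage with hypothetical names and values.
agent = GovernedAgent("invoice-bot", owner="finance-lead",
                      escalation_path=["ciso", "cfo"], risk_threshold=0.7)
first = agent.act("draft invoice", 0.2, lambda: "draft-001")   # low risk: executed
second = agent.act("wire transfer", 0.9, lambda: "sent")       # high risk: escalated and paused
third = agent.act("draft invoice", 0.2, lambda: "draft-002")   # blocked while paused
for entry in agent.audit_log:
    print(entry.action, entry.risk, entry.outcome)
```

Pausing the entire agent after a single high-risk action is a deliberately conservative choice that mirrors the idea of pausing or overriding "instantly when risk thresholds are crossed." A real deployment might block only the offending action instead, and would route notifications to an actual incident-management system rather than an in-memory list.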

As AI becomes more deeply embedded in core business functions, governance cannot be bolted on later. It must be designed into every layer of the architecture, with comprehensive visibility and control mechanisms.

✅ Current Human Oversight Practices

The research provided some reassurance regarding human involvement:

- 40% of respondents reported that humans approve almost all AI actions before deployment

- A further 26% review AI outcomes after execution

However, without improved governance infrastructure, human oversight alone is insufficient to identify and resolve issues before they escalate into critical incidents.

🔍 Organizational Blind Spots and Disclosure Gaps

ISACA's findings highlight significant structural issues in AI deployment across various sectors:

- Over one-third of organizations do not require employees to disclose where and when AI is used in work products

- This lack of transparency increases the potential for dangerous blind spots in AI system monitoring

- Despite stricter regulations that hold senior leadership more accountable, many organizations still fall short of implementing safe and effective AI practices

Many businesses mistakenly treat AI risk as purely a technical problem, rather than recognizing it as an organizational management challenge requiring comprehensive oversight.

💡 The Path Forward: Essential Changes Required

Organizations need to fundamentally change how they integrate AI and manage its actions:

🎯 Critical Requirements:

- Implement proper governance frameworks with clear accountability structures

- Establish comprehensive visibility into AI system operations

- Create rapid response protocols for AI incidents

- Mandate AI usage disclosure across all work products

- Build control mechanisms into AI architecture from inception

Without proper governance and accountability, businesses lack true control over their AI systems. Without that control, even minor errors could escalate into reputational and financial damage from which many organizations may never recover.

Organizations that successfully implement robust AI governance frameworks will not only reduce risk; they will also become the leaders capable of confidently scaling AI across their business operations.

Image by Foundry Co from Pixabay

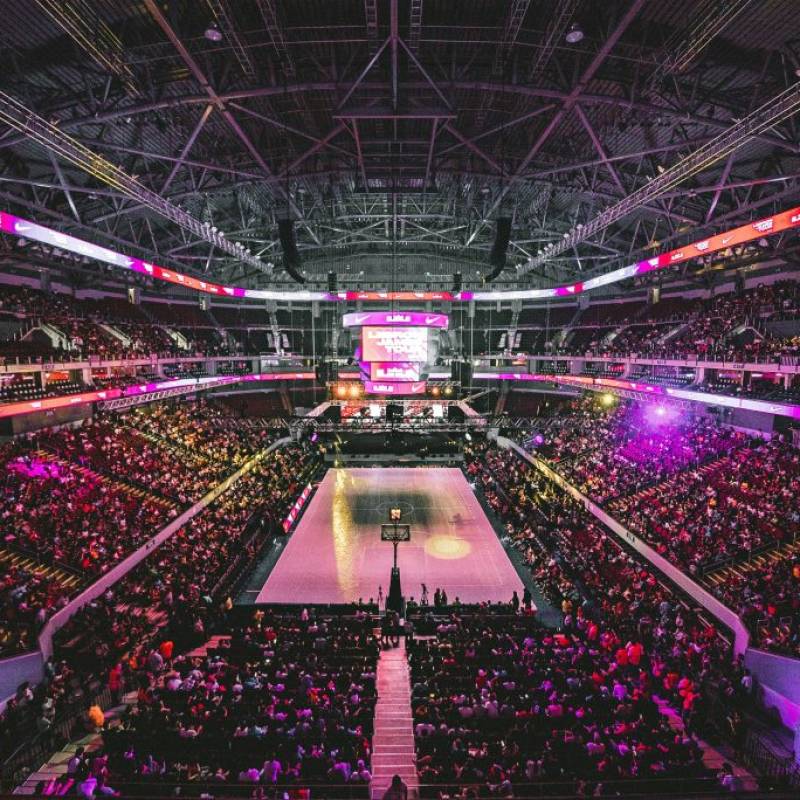

AI News is powered by TechForge Media.
