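```javascript
const fs = require('fs');
const path = require('path');
const axios = require('axios').default;

// axios.create() is a factory function, so it is called without `new`.
const api = axios.create({
  baseURL: 'https://api.ai.cc/v1',
  // Append your API key after "Bearer ".
  headers: { Authorization: 'Bearer ' },
});

const main = async () => {
  // Request synthesized speech and receive the audio body as a stream.
  const response = await api.post(
    '/tts',
    {
      model: 'minimax/speech-2.8-turbo',
      text: 'Hi! What are you doing today?',
      voice_setting: {
        voice_id: 'Wise_Woman',
      },
    },
    { responseType: 'stream' },
  );

  const dist = path.resolve(__dirname, './audio.wav');
  const writeStream = fs.createWriteStream(dist);

  // Pipe the audio stream straight to disk as chunks arrive.
  response.data.pipe(writeStream);

  writeStream.on('close', () => console.log('Audio saved to:', dist));
  writeStream.on('error', (err) => console.error('Failed to write audio:', err));
};

// Surface request failures instead of leaving an unhandled rejection.
main().catch((err) => {
  console.error('TTS request failed:', err.message);
  process.exitCode = 1;
});
```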

Speech 2.8 Turbo

MiniMax Speech 2.8 Turbo is a fast, highly responsive text-to-speech model built for applications where timing matters as much as quality.

What Is the Speech 2.8 Turbo API?

MiniMax Speech 2.8 Turbo is a performance-optimized version of the Speech 2.8 model family. Instead of pushing maximum audio fidelity, it prioritizes speed, responsiveness, and stability under load. The result is a model that feels fluid in real-time interactions while still maintaining a convincing level of vocal realism.

Under the hood, it relies on a Transformer-based architecture with a speaker representation layer, allowing it to generate consistent, identity-driven voices and adapt quickly to different speaking styles. This structure also enables zero-shot voice cloning, where a short audio sample is enough to approximate a new voice.

Performance & Architecture

Core Capabilities

Natural and Continuous Speech

The model is designed to sound natural without slowing systems down. Speech output feels continuous and well-paced, avoiding the robotic cadence typical of older TTS systems. Emotional tone is not an afterthought; it can be shaped deliberately, giving the output a sense of intent rather than neutrality.

Zero-Shot Voice Cloning

Voice cloning works without lengthy setup. A short reference clip can be enough to reproduce tone, rhythm, and general vocal character, which is especially useful when consistency across sessions or characters is required.

Multilingual Coverage

Language support extends across dozens of languages and dialects, making the model suitable for products that operate across regions. Instead of treating localization as a separate layer, speech generation can remain unified across different markets.

Control and Customization

MiniMax Speech 2.8 Turbo gives developers precise control over how speech is delivered. Parameters such as speed, pitch, and volume can be adjusted in a predictable way, allowing teams to fine-tune output to match a product’s tone or UX requirements.
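
As a sketch of how these controls might be passed, the helper below builds a request body for the `/tts` endpoint used in the example at the top of this page. The `speed`, `vol`, and `pitch` field names inside `voice_setting`, and the ranges used for validation, are assumptions modeled on MiniMax's own text-to-speech API; confirm them against the provider's reference before relying on them.

```javascript
// Assumed valid ranges for prosody fields (modeled on MiniMax's T2A API;
// verify against the provider's documentation).
const PROSODY_LIMITS = {
  speed: { min: 0.5, max: 2 },
  vol: { min: 0.01, max: 10 },
  pitch: { min: -12, max: 12 },
};

// Builds a /tts request body, rejecting unknown or out-of-range prosody
// values locally instead of sending them to the API.
function buildTtsRequest(text, voiceId, prosody = {}) {
  const voiceSetting = { voice_id: voiceId };
  for (const [key, value] of Object.entries(prosody)) {
    const limits = PROSODY_LIMITS[key];
    if (!limits) {
      throw new Error(`Unknown prosody field: ${key}`);
    }
    if (!Number.isFinite(value) || value < limits.min || value > limits.max) {
      throw new RangeError(`${key} must be between ${limits.min} and ${limits.max}`);
    }
    voiceSetting[key] = value;
  }
  return {
    model: 'minimax/speech-2.8-turbo',
    text,
    voice_setting: voiceSetting,
  };
}

const prosodyBody = buildTtsRequest('Welcome back!', 'Wise_Woman', { speed: 1.2, pitch: -2 });
console.log(JSON.stringify(prosodyBody));
```

Validating on the client keeps a typo such as `tempo` or an out-of-range `speed` from turning into an opaque API error at request time.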

Emotion can also be guided directly. Rather than relying on implicit tone, the model supports intentional delivery styles, which is particularly useful in storytelling, guided experiences, or branded voice interactions.
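
A minimal sketch of choosing delivery style explicitly per line, for example in a guided story. The `emotion` field inside `voice_setting` and the set of labels below are assumptions modeled on MiniMax's own API; the labels this endpoint actually accepts may differ.

```javascript
// Assumed emotion labels (modeled on MiniMax's T2A API; verify before use).
const SUPPORTED_EMOTIONS = new Set([
  'happy', 'sad', 'angry', 'fearful', 'disgusted', 'surprised', 'neutral',
]);

// Returns a copy of voiceSetting with an explicit emotion attached,
// so the delivery style is chosen deliberately rather than inferred.
function withEmotion(voiceSetting, emotion) {
  if (!SUPPORTED_EMOTIONS.has(emotion)) {
    throw new Error(`Unsupported emotion: ${emotion}`);
  }
  return { ...voiceSetting, emotion };
}

// Two story beats, each with its own intended tone.
const storyLines = [
  { text: 'The door creaked open.', emotion: 'fearful' },
  { text: 'Inside was a birthday party!', emotion: 'surprised' },
];

const emotionRequests = storyLines.map(({ text, emotion }) => ({
  model: 'minimax/speech-2.8-turbo',
  text,
  voice_setting: withEmotion({ voice_id: 'Wise_Woman' }, emotion),
}));

console.log(emotionRequests.map((r) => r.voice_setting.emotion)); // [ 'fearful', 'surprised' ]
```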

Audio output can be configured in standard formats such as WAV or MP3, with flexibility around sampling and encoding. This makes it easier to integrate the model into different pipelines without additional processing layers.
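
Because the example at the top of this page always saves to `audio.wav`, it can help to check which container actually came back. The sketch below inspects leading bytes: WAV files start with `RIFF` and carry `WAVE` at offset 8, while MP3 files usually start with an `ID3` tag or an MPEG frame sync (`0xFF` followed by a byte whose top three bits are set). How the output format is requested on this API (for instance an `audio_setting.format` field, as in MiniMax's own API) is an assumption not confirmed here.

```javascript
// Identifies common audio containers from their leading "magic" bytes.
function detectAudioFormat(buf) {
  if (buf.length >= 12 &&
      buf.toString('ascii', 0, 4) === 'RIFF' &&
      buf.toString('ascii', 8, 12) === 'WAVE') {
    return 'wav';
  }
  if (buf.length >= 3 && buf.toString('ascii', 0, 3) === 'ID3') {
    return 'mp3'; // MP3 with a leading ID3v2 tag
  }
  // Bare MPEG audio frame sync; note this also matches ADTS AAC streams.
  if (buf.length >= 2 && buf[0] === 0xff && (buf[1] & 0xe0) === 0xe0) {
    return 'mp3';
  }
  return 'unknown';
}

// Picks a file extension that matches the bytes actually received.
function extensionFor(buf) {
  const format = detectAudioFormat(buf);
  return format === 'unknown' ? '.bin' : `.${format}`;
}

const wavHeader = Buffer.concat([
  Buffer.from('RIFF'), Buffer.alloc(4), Buffer.from('WAVE'),
]);
console.log(detectAudioFormat(wavHeader)); // wav
console.log(extensionFor(Buffer.from([0xff, 0xfb, 0x90, 0x00]))); // .mp3
```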

Naturalness and Expressive Detail

One of the more noticeable strengths of the Turbo variant is how it handles small, human-like details. Subtle pauses, shifts in emphasis, and non-verbal cues can be incorporated into speech, helping the output feel less synthetic.
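
This page does not say how pauses or other cues are written into the input text. As an illustration only, the helper below joins sentences with inline pause markers in the `<#seconds#>` form that MiniMax documents for its own speech API; whether this endpoint accepts that markup, and which durations are allowed, are assumptions to verify.

```javascript
// Hypothetical helper: joins sentences with explicit pause markers.
// The `<#seconds#>` syntax is an assumption modeled on MiniMax's pause
// markup; confirm the endpoint accepts it before use.
function withPauses(sentences, seconds = 0.5) {
  if (!(seconds > 0)) {
    throw new RangeError('Pause length must be positive');
  }
  const marker = `<#${seconds}#>`;
  return sentences
    .map((s) => s.trim())
    .filter((s) => s.length > 0)
    .join(marker);
}

const pausedText = withPauses(['Hmm.', 'Let me think about that.', 'Okay, here is an idea.'], 0.4);
console.log(pausedText);
// Hmm.<#0.4#>Let me think about that.<#0.4#>Okay, here is an idea.
```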

This becomes especially important in conversational systems. When responses vary in pacing or tone, interactions feel less scripted and more adaptive, which can noticeably improve perceived quality even when raw audio fidelity is not at its absolute peak.

API Pricing

- $78 per 1M characters
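
At this rate, cost grows linearly with input length: cost = characters × 78 / 1,000,000 USD. The 29-character sample sentence from the example above costs about $0.002262, and 1,000 requests of 500 characters each (500,000 characters) come to $39.00. The sketch below encodes that arithmetic; counting characters by Unicode code point is an assumption, since this page does not say how billable characters are counted.

```javascript
const PRICE_PER_MILLION_CHARS_USD = 78;

// Counts Unicode code points rather than UTF-16 code units. How the
// provider actually counts billable characters is an assumption.
function billableChars(text) {
  return [...text].length;
}

// Estimated cost in USD for synthesizing one text.
function estimateCostUsd(text) {
  return (billableChars(text) * PRICE_PER_MILLION_CHARS_USD) / 1_000_000;
}

const sampleText = 'Hi! What are you doing today?';
console.log(billableChars(sampleText)); // 29
console.log(estimateCostUsd(sampleText).toFixed(6)); // 0.002262

// 1,000 requests of 500 characters each = 500,000 characters.
console.log(((500 * 1000 * PRICE_PER_MILLION_CHARS_USD) / 1_000_000).toFixed(2)); // 39.00
```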

Performance Profile

MiniMax Speech 2.8 Turbo is built for environments where latency directly affects user experience. Response times are kept low enough to support live conversations, while throughput remains stable under concurrent usage.
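
To check latency in your own environment, a useful figure is the time until the first audio chunk arrives. The sketch below measures it for any Node.js readable stream, such as `response.data` from the axios request above; it is a generic measurement helper, not part of the API.

```javascript
const { Readable } = require('stream');
const { performance } = require('perf_hooks');

// Reports time to the first chunk and total transfer time for a readable
// stream. Pass `startMs` captured just before sending the request so the
// figures include network and synthesis latency.
async function measureStream(stream, startMs = performance.now()) {
  let firstChunkMs = null;
  let bytes = 0;
  for await (const chunk of stream) {
    if (firstChunkMs === null) {
      firstChunkMs = performance.now() - startMs;
    }
    bytes += chunk.length;
  }
  return { firstChunkMs, totalMs: performance.now() - startMs, bytes };
}

// Local demo: an in-memory stream stands in for `response.data`.
const latencyDemo = measureStream(
  Readable.from([Buffer.alloc(1024), Buffer.alloc(2048)]),
).then((stats) => {
  console.log(stats.bytes); // 3072
  return stats;
});
```

Against the real endpoint, you might record `const t0 = performance.now();` before calling `api.post(...)` and then pass `t0` to `measureStream(response.data, t0)`. Consuming the stream this way replaces piping it to a file, so write chunks inside the loop if you need both.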

Compared to higher-fidelity variants, the trade-off is deliberate. Instead of maximizing nuance in long-form narration, the model focuses on maintaining consistent speed and responsiveness across repeated calls and real-time sessions.

Turbo vs HD

The difference between Turbo and HD comes down to priorities. The HD version leans toward richer tonal depth and is better suited for long-form narration, where subtle emotional gradients matter more than speed.

Turbo, on the other hand, is optimized for immediacy. It performs best in systems where responses must feel instant, such as voice assistants, live chat interfaces, or interactive agents. In these cases, a slight reduction in audio richness is often outweighed by a smoother, faster experience.

Use Cases

Voice Assistants and Conversational Systems

MiniMax Speech 2.8 Turbo fits naturally into products that rely on continuous interaction. Voice assistants benefit from reduced response lag, making conversations feel more fluid and responsive, especially in real-time dialogue scenarios.

Interactive Applications and Games

Interactive environments, including games and virtual worlds, can use the model to generate character dialogue dynamically. This allows conversations to unfold in real time without breaking immersion or relying on pre-recorded voice lines.

Scalable Content and Localization

The model also performs well in large-scale voice generation tasks such as video narration or multilingual content production. It is particularly effective in workflows where speed and turnaround time are more important than studio-level audio refinement.
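
For batch jobs such as narrating many localized scripts, a common client-side pattern is to cap how many requests are in flight at once. The sketch below is a generic bounded-concurrency map; `fakeSynthesize` and the script IDs are hypothetical stand-ins for real `/tts` calls, and any per-account rate limits on the actual API are not known here.

```javascript
// Runs `worker` over every item with at most `limit` calls in flight and
// returns results in input order.
async function mapWithConcurrency(items, limit, worker) {
  if (!Number.isInteger(limit) || limit < 1) {
    throw new RangeError('limit must be a positive integer');
  }
  const results = new Array(items.length);
  let next = 0;
  // Each lane pulls the next unclaimed index until the queue is empty.
  async function runLane() {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index], index);
    }
  }
  const laneCount = Math.min(limit, items.length);
  await Promise.all(Array.from({ length: laneCount }, () => runLane()));
  return results;
}

// Hypothetical stand-in for a /tts call that tracks how many calls overlap.
let inFlight = 0;
let maxInFlight = 0;
async function fakeSynthesize(scriptId) {
  inFlight++;
  maxInFlight = Math.max(maxInFlight, inFlight);
  await new Promise((resolve) => setTimeout(resolve, 10));
  inFlight--;
  return `${scriptId}.mp3`;
}

// Hypothetical localized narration scripts, one per market.
const scripts = ['intro-en', 'intro-es', 'intro-de', 'outro-en', 'outro-es'];
const batchDone = mapWithConcurrency(scripts, 2, fakeSynthesize).then((files) => {
  console.log(files.length, 'files; max in flight:', maxInFlight); // 5 files; max in flight: 2
  return files;
});
```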

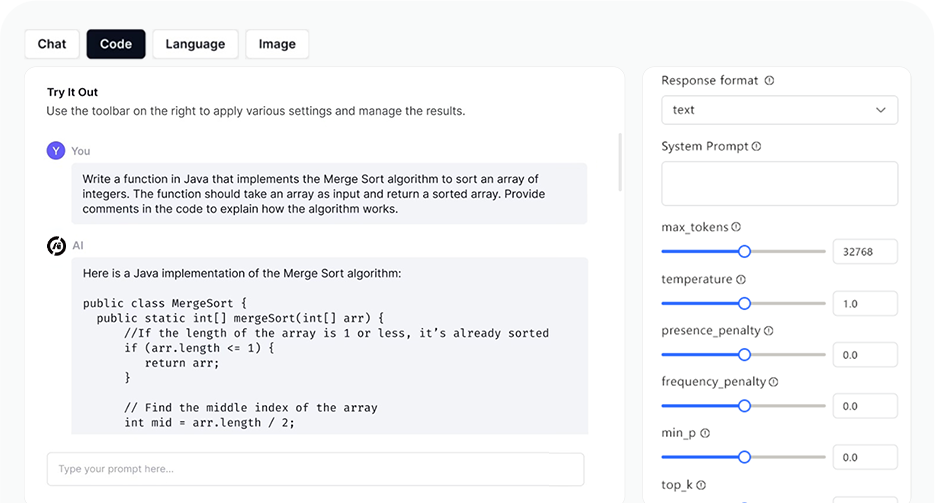

Developer Experience

Integration is straightforward and predictable. The model accepts text input, applies voice and style parameters, and returns audio output with minimal overhead. It supports both synchronous and streaming workflows, which allows developers to choose between immediate playback and progressive audio delivery.
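
On the client side, the two workflows differ mainly in when you act on the audio. The sketch below contrasts buffering the whole response before using it with forwarding chunks to a file as they arrive. It only covers client-side handling (with axios, a buffered request could also use `responseType: 'arraybuffer'`); any server-side streaming flag this API may offer is not assumed here.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');
const { Readable } = require('stream');
const { pipeline } = require('stream/promises');

// Buffered handling: wait for the whole body, then use it at once.
async function collectAudio(stream) {
  const chunks = [];
  for await (const chunk of stream) {
    chunks.push(Buffer.from(chunk));
  }
  return Buffer.concat(chunks);
}

// Streaming handling: forward chunks to a destination as they arrive,
// with errors from either side propagated by pipeline().
async function streamAudioToFile(stream, filePath) {
  await pipeline(stream, fs.createWriteStream(filePath));
  return filePath;
}

// Local demo: in-memory chunks stand in for `response.data` from the API.
const fakeAudio = () => Readable.from([Buffer.from('RIFF'), Buffer.from('....WAVE')]);
const outFile = path.join(os.tmpdir(), 'tts-demo.wav');

const ioDemo = Promise.all([
  collectAudio(fakeAudio()),
  streamAudioToFile(fakeAudio(), outFile),
]).then(([buffered, written]) => {
  console.log(buffered.length, fs.statSync(written).size); // 12 12
  return { buffered, written };
});
```

Buffering is simpler when the audio must be post-processed as a whole; streaming lets playback or upload begin before synthesis finishes.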

Because the model is stateless by design, it can be scaled across distributed systems without complex session management. This simplifies deployment in modern architectures where concurrency and reliability are key concerns.
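
Statelessness also makes failed calls easy to retry, since each request carries all of its inputs. The sketch below wraps a call with exponential backoff and jitter; treating HTTP 429, 5xx, and "no response" as transient is an assumption to adjust for the real API, and `flakySynthesize` is a hypothetical stand-in.

```javascript
// Decides whether a failed call is worth re-sending (assumed policy).
function isRetryable(err) {
  const status = err?.response?.status;
  return status === undefined || status === 429 || status >= 500;
}

// Retries an async call with exponential backoff plus jitter.
async function withRetry(fn, { retries = 3, baseDelayMs = 200 } = {}) {
  for (let attempt = 0; ; attempt++) {
    try {
      return await fn(attempt);
    } catch (err) {
      if (attempt >= retries || !isRetryable(err)) {
        throw err;
      }
      // Delay doubles each attempt; jitter spreads out concurrent retries.
      const delayMs = baseDelayMs * 2 ** attempt * (0.5 + Math.random() / 2);
      await new Promise((resolve) => setTimeout(resolve, delayMs));
    }
  }
}

// Local demo: fails twice with a 503-style error, then succeeds.
async function flakySynthesize(attempt) {
  if (attempt < 2) {
    throw Object.assign(new Error('Service Unavailable'), { response: { status: 503 } });
  }
  return 'audio-bytes';
}

const retryDemo = withRetry(flakySynthesize, { baseDelayMs: 5 }).then((result) => {
  console.log(result); // audio-bytes
  return result;
});
```

Against the real endpoint, the call might be wrapped as `withRetry(() => api.post('/tts', body, { responseType: 'stream' }))`, where `body` is your request payload. With streamed responses, a retry is only clean if no audio has been written yet; once chunks reach a file or speaker, retrying would duplicate them.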
