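const { OpenAI } = require('openai');

// Client pointed at the OpenAI-compatible endpoint
const api = new OpenAI({
  baseURL: 'https://api.ai.cc/v1',
  apiKey: '', // add your API key here
});

const main = async () => {
  // Send a system prompt plus one user question to the model
  const result = await api.chat.completions.create({
    model: 'minimax/m2-1',
    messages: [
      {
        role: 'system',
        content: 'You are an AI assistant who knows everything.',
      },
      {
        role: 'user',
        content: 'Tell me, why is the sky blue?',
      },
    ],
  });

  // The reply text lives on the first choice
  const message = result.choices[0].message.content;
  console.log(`Assistant: ${message}`);
};

// Surface request errors instead of leaving an unhandled rejection
main().catch(console.error);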

from openai import OpenAI

# Client pointed at the OpenAI-compatible endpoint
client = OpenAI(
    base_url="https://api.ai.cc/v1",
    api_key="",  # add your API key here
)

# Send a system prompt plus one user question to the model
response = client.chat.completions.create(
    model="minimax/m2-1",
    messages=[
        {
            "role": "system",
            "content": "You are an AI assistant who knows everything.",
        },
        {
            "role": "user",
            "content": "Tell me, why is the sky blue?",
        },
    ],
)

# The reply text lives on the first choice
message = response.choices[0].message.content
print(f"Assistant: {message}")

MiniMax-M2.1

Lightweight. Code-Optimized. Agentic-Ready.

Multilingual Code Generation & Refactoring AI Model

MiniMax-M2.1 is a cutting-edge large language model built for high-performance code generation, refactoring, and cross-language reasoning. Optimized for real-world developer workflows, it supports languages such as Rust, Java, Go, C++, TypeScript, and JavaScript, offering fast, clean, and reliable outputs.

Technical Specifications

- Model type: Multilingual Transformer-based LLM

- Architecture: Hybrid dense-attention model with optimized code tokenization

- Context window: 204,800 tokens (input + output)

- Supported languages: Rust, Go, Java, C++, TypeScript, JavaScript, Python, SQL
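
Because the 204,800-token window covers input and output together, a long prompt reduces the room left for the response. The following is a minimal sketch of that budget arithmetic, assuming the prompt's token count is already known. The helper name `max_output_tokens` is hypothetical, and counting tokens depends on the model's tokenizer, which this sketch does not cover.

```python
CONTEXT_WINDOW = 204_800  # total tokens shared by input and output, per the spec above


def max_output_tokens(input_tokens: int, context_window: int = CONTEXT_WINDOW) -> int:
    """Return how many tokens remain for the response after the prompt."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    # The window is shared, so the output budget is whatever the prompt leaves over.
    return max(context_window - input_tokens, 0)


print(max_output_tokens(150_000))  # 54800
```

A prompt that already fills or exceeds the window leaves a budget of 0, which is a signal to trim or split the input before sending it.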

Performance Benchmarks

Results come from internal evaluation frameworks (e.g., OctoCodingbench, SWE Review) and are averaged over 4 runs.

API Pricing

- Input: $0.39 / 1M tokens

- Output: $1.56 / 1M tokens
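
To make the per-token rates concrete, here is a small sketch that estimates the cost of a workload from its token counts. The function name `estimate_cost` and the sample token counts are illustrative assumptions; only the two rates come from the list above.

```python
from decimal import Decimal

INPUT_PRICE_PER_M = Decimal("0.39")   # USD per 1M input tokens
OUTPUT_PRICE_PER_M = Decimal("1.56")  # USD per 1M output tokens
ONE_MILLION = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of a workload from its token counts."""
    input_cost = Decimal(input_tokens) * INPUT_PRICE_PER_M
    output_cost = Decimal(output_tokens) * OUTPUT_PRICE_PER_M
    return (input_cost + output_cost) / ONE_MILLION


# Hypothetical workload: 2M input tokens and 500k output tokens
cost = estimate_cost(2_000_000, 500_000)
print(f"${cost:.2f}")  # $1.56
```

`Decimal` is used instead of floats so that small per-request amounts add up without rounding drift.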

Key Features

- Multilingual Coding Mastery: Excels across 6+ major programming languages with syntax-aware generation and refactoring

- Agentic Reasoning: Maintains coherent reasoning between turns, critical for tool use, IDE integration, and long-horizon tasks

- Concise & Clean Outputs: Minimizes verbosity while preserving functional correctness and style consistency

- Real-Time Developer Workflows: Optimized for low latency and high throughput in coding assistants and CI/CD pipelines

- Open & Deployable: Fully open-source weights enable on-prem, edge, or custom deployment scenarios

Core Use Cases

- Cross-Language Code Migration: Seamlessly rewrite applications between Rust, Go, and JavaScript without losing logic integrity.

- Code Review & Refactoring: Automate code readability enhancements, style consistency, and optimization opportunities.

- Automated Documentation: Generate aligned docstrings, inline comments, and technical documentation for complex repositories.

- Intelligent Debugging: Detect potential bugs and suggest fixes within a single inference cycle.

- Developer Tool Integration: Connect via SDKs or APIs to augment IDEs such as VSCode, JetBrains, or Neovim with real-time AI assistance.
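
As an illustration of the cross-language migration use case, the sketch below builds a `messages` list for a rewrite request. The helper `build_migration_messages` and the prompt wording are assumptions made for this example, not a documented prompt format for the model.

```python
def build_migration_messages(source_code: str, source_lang: str, target_lang: str) -> list[dict]:
    """Build a chat `messages` list asking for a source-to-target rewrite."""
    system = (
        f"You are a code migration assistant. Rewrite {source_lang} code in "
        f"{target_lang}, preserving behavior. Return only the rewritten code."
    )
    user = f"Rewrite this {source_lang} code in {target_lang}:\n\n{source_code}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


# Hypothetical input: a one-line Rust function to be rewritten in Go
messages = build_migration_messages(
    "fn add(a: i32, b: i32) -> i32 { a + b }", "Rust", "Go"
)
print(messages[1]["content"])
```

The resulting list can be passed as the `messages` argument of `client.chat.completions.create(model="minimax/m2-1", ...)`, in the same way as the Python example at the top of this page.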

Model Comparison

vs. Claude Sonnet 4.5: M2.1 matches or exceeds Sonnet 4.5 in coding-specific benchmarks while using far fewer activated parameters. It also offers significantly lower inference cost and latency, which suits high-throughput coding agents.

vs. DeepSeek-Coder: M2.1 demonstrates stronger instruction following in complex, multi-step coding scenarios (e.g., full-stack feature implementation). It also excels in real-world tool integration and stateful reasoning, both critical for IDE plugins and autonomous agents.

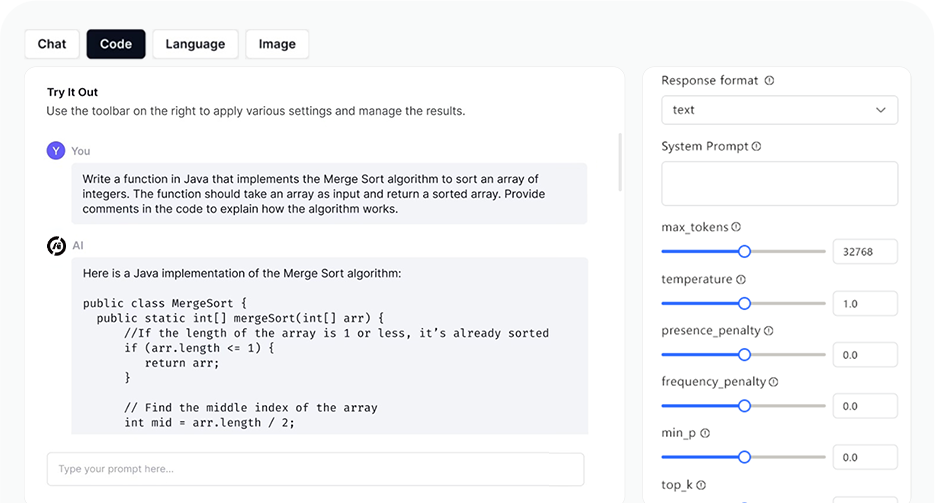
