Complete Guide to APIs, MCPs, and MCP Gateways: What They Are and How They Work

APIs and MCPs are often discussed together as ways for systems to exchange information, but they are built on different architectures and serve different purposes. This guide explains the key differences and shows software developers and users how to work with each technology.

🔍 Understanding the Core Distinction

An API (Application Programming Interface) is used mainly in traditional software applications, whereas an MCP (Model Context Protocol) is designed specifically for large language models (LLMs). APIs carry communication between applications, while MCPs give AI models structured access to data and tools. The difference arises because an LLM must decide at run time which tools and information sources it needs to satisfy each user request.

📡 APIs: Detailed Definition and Functionality

An API transmits requests in a predefined, standardized format to another piece of software and receives responses in the same agreed format. The rules governing each exchange, including the permitted operations and the data structures, are hard-coded into the system. Developers write specific code to make each API call and matching code to parse and handle the response.
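The fixed contract described above can be sketched in a few lines of Python. This is a minimal illustration, not a real service: `fake_balance_endpoint`, `BalanceResponse`, and the field names are all hypothetical, and the "endpoint" is an in-process function standing in for what would normally be an HTTP call.

```python
import json
from dataclasses import dataclass


@dataclass
class BalanceResponse:
    """The response shape both sides agreed on (hypothetical fields)."""
    account_id: str
    balance_cents: int
    currency: str


def fake_balance_endpoint(request_body: str) -> str:
    """Stand-in for a remote endpoint; a real client would send this over HTTP."""
    request = json.loads(request_body)
    return json.dumps(
        {"account_id": request["account_id"], "balance_cents": 12550, "currency": "EUR"}
    )


def get_balance(account_id: str) -> BalanceResponse:
    # The request shape is fixed in code ahead of time.
    request_body = json.dumps({"account_id": account_id})
    raw = fake_balance_endpoint(request_body)
    # The parser is written against the same agreed response format.
    data = json.loads(raw)
    return BalanceResponse(
        account_id=data["account_id"],
        balance_cents=data["balance_cents"],
        currency=data["currency"],
    )


result = get_balance("acct-42")
print(result)
```

Both the request builder and the response parser know the exact field names in advance, which is what makes the exchange predictable.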

This architecture makes APIs precise and reliable, but the exchange can break if either party changes its side of the API without coordinating with the other.
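As a rough illustration of that fragility, the sketch below shows a client written against one response shape failing when the provider renames a field. The field names `status` and `account_status` are invented for the example.

```python
import json


def parse_status(raw: str) -> str:
    # Client code written against the original contract: it expects "status".
    return json.loads(raw)["status"]


original_response = json.dumps({"status": "active"})
# The provider renames the field without telling the client (hypothetical change).
changed_response = json.dumps({"account_status": "active"})

print(parse_status(original_response))

try:
    parse_status(changed_response)
    broke = False
except KeyError:
    # The data is still there, but the hard-coded parser can no longer find it.
    broke = True
    print("client broke: 'status' field is missing")
```

Nothing about the underlying data changed; only the agreed format did, and that alone is enough to stop the client working.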

APIs remain critically important to systems utilizing LLMs, and numerous AI-based systems depend heavily on APIs to function effectively. A model may request data and receive responses through API endpoints as part of its operational workflow.

🤖 MCPs: Detailed Definition and Functionality

MCPs are deployed when LLMs require access to data in scenarios such as querying business data repositories, reading specific file contents, or triggering automated actions. MCPs provide models with a structured methodology to access multiple data sources through a unified interface. An MCP server exposes data in a standardized format according to predetermined rules that determine availability and access permissions.

⚙️ Three Core Capabilities of MCP Servers:

- Tools: Actions the model may initiate, such as creating files, updating databases, or executing search queries.

- Resources: Information sources the model may access and read as contextual data to inform its responses.

- Prompts: Reusable templates that enable users to perform common tasks efficiently without writing detailed prompts for repetitive actions.
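The three capabilities can be pictured with a small in-process registry. This is a toy sketch only: `ToyServer` and its decorators are hypothetical and are not the API of any real MCP SDK, and the note store and policy text are made-up sample data.

```python
from typing import Callable, Dict


class ToyServer:
    """Hypothetical in-process stand-in for an MCP server; not a real SDK."""

    def __init__(self) -> None:
        self.tools: Dict[str, Callable[..., object]] = {}
        self.resources: Dict[str, Callable[[], str]] = {}
        self.prompts: Dict[str, Callable[..., str]] = {}

    def tool(self, fn):
        self.tools[fn.__name__] = fn
        return fn

    def resource(self, uri: str):
        def register(fn):
            self.resources[uri] = fn
            return fn
        return register

    def prompt(self, fn):
        self.prompts[fn.__name__] = fn
        return fn


server = ToyServer()
NOTES: Dict[str, str] = {}  # made-up storage for the example


@server.tool
def create_note(title: str, body: str) -> str:
    """Tool: an action the model may initiate; it has a side effect."""
    NOTES[title] = body
    return f"created {title}"


@server.resource("docs://holiday-policy")
def holiday_policy() -> str:
    """Resource: read-only context the model may load."""
    return "Staff receive 25 days of annual leave."


@server.prompt
def summarize(text: str) -> str:
    """Prompt: a reusable template filled in on request."""
    return f"Summarize the following in three bullet points:\n{text}"


print(sorted(server.tools), sorted(server.resources), sorted(server.prompts))
```

The separation matters because the three kinds of entry are used differently: tools change things, resources are only read, and prompts are templates chosen by the user.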

⚠️ The critical distinction: MCPs are specifically designed for AI models to be the direct consumers of data. The model intelligently determines which tools or resources it requires based on its analysis of what may be relevant to the user's request.
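A simplified sketch of that selection loop follows. The message shapes are loosely modelled on the JSON-RPC `tools/list` and `tools/call` requests used by MCP, but trimmed for brevity. The tool names, the sample data, and `stub_model_choose` (a keyword check standing in for the LLM's decision) are all assumptions made for illustration.

```python
import json
from typing import Any, Dict, List

# Hypothetical tools with made-up data.
TOOLS = {
    "count_subscribers": {
        "description": "Count customers subscribed to a named service.",
        "handler": lambda args: {"premium": 3, "basic": 5}.get(args["service"], 0),
    },
    "get_account_status": {
        "description": "Return the status of one account.",
        "handler": lambda args: {"acct-42": "active"}.get(args["account_id"], "unknown"),
    },
}


def handle(message: Dict[str, Any]) -> Dict[str, Any]:
    """Answer a simplified JSON-RPC request."""
    if message["method"] == "tools/list":
        result = {
            "tools": [
                {"name": name, "description": tool["description"]}
                for name, tool in TOOLS.items()
            ]
        }
    elif message["method"] == "tools/call":
        params = message["params"]
        value = TOOLS[params["name"]]["handler"](params["arguments"])
        result = {"content": [{"type": "text", "text": str(value)}]}
    else:
        return {
            "jsonrpc": "2.0",
            "id": message["id"],
            "error": {"code": -32601, "message": "Method not found"},
        }
    return {"jsonrpc": "2.0", "id": message["id"], "result": result}


def stub_model_choose(user_request: str, tools: List[Dict[str, str]]) -> Dict[str, Any]:
    """Stand-in for the LLM, which would read the tool descriptions and decide."""
    if "how many" in user_request.lower():
        return {"name": "count_subscribers", "arguments": {"service": "premium"}}
    return {"name": "get_account_status", "arguments": {"account_id": "acct-42"}}


listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
choice = stub_model_choose("How many customers are on premium?", listing["result"]["tools"])
reply = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call", "params": choice})
print(json.dumps(reply))
```

The point of the sketch is the order of events: the available tools are discovered first, and the call that follows is chosen per request rather than written into the client in advance.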

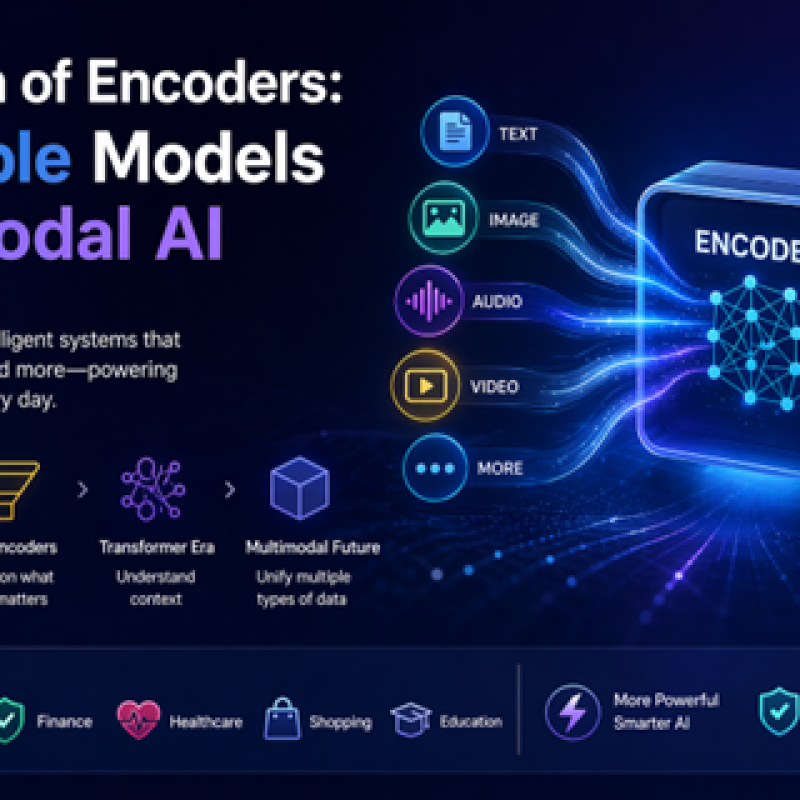

🔄 Why MCPs Are Not Simply API Wrappers

In certain system architectures, APIs remain operational but have an MCP layer positioned between them and the user. An MCP server might invoke an API "behind the scenes" to retrieve data. However, APIs typically return more information by default than a model actually needs to accomplish a specific task.

Everything an MCP server returns is passed to the LLM as tokens, so this approach can consume far more tokens than necessary. The excess information increases operating costs and can reduce the accuracy of the model's responses.

💡 Practical Example: An API might return 50 database fields containing comprehensive customer information, but the LLM may only require a single account status entry. Transmitting all 50 fields forces the model to process substantially more data, which doesn't necessarily provide useful contextual value. The LLM cannot determine data relevance until it has expended processing cycles analyzing the information. Furthermore, it may base responses on extraneous data, potentially producing inaccurate or misleading answers.
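The size difference in this example can be estimated with a quick sketch. The record below is made-up data, and character count is used only as a rough proxy for tokens, since the real token count depends on the model's tokenizer.

```python
import json

# Made-up customer record with 50 fields, standing in for a broad API response.
full_record = {f"field_{i:02d}": f"value-{i}" for i in range(49)}
full_record["account_status"] = "active"

# What the model actually needed for this request.
narrow_result = {"account_status": full_record["account_status"]}

full_chars = len(json.dumps(full_record))
narrow_chars = len(json.dumps(narrow_result))

print(f"fields: {len(full_record)} vs {len(narrow_result)}")
print(f"characters: {full_chars} vs {narrow_chars}")
```

Whatever the exact ratio turns out to be for a given tokenizer, the full record costs the model many times more input than the single field it needed.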

In an optimized scenario, MCP tools are designed specifically around the tasks a model needs to complete. If a user asks how many customers are subscribed to a particular service or have purchased a specific item, the MCP tool returns only the relevant numerical data rather than complete customer interaction records.
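A minimal sketch of the contrast, using an invented in-memory customer list: `api_list_customers` mimics a general-purpose endpoint that returns whole records, while `tool_count_subscribers` is a hypothetical task-shaped tool that returns only the number the model was asked for.

```python
from typing import Any, Dict, List

# Made-up sample data for illustration.
CUSTOMERS: List[Dict[str, Any]] = [
    {"id": 1, "name": "Ada", "services": ["premium"], "orders": ["kettle"]},
    {"id": 2, "name": "Ben", "services": ["basic"], "orders": []},
    {"id": 3, "name": "Cy", "services": ["premium", "basic"], "orders": ["lamp"]},
]


def api_list_customers() -> List[Dict[str, Any]]:
    """General-purpose API style: return complete records and let the caller filter."""
    return CUSTOMERS


def tool_count_subscribers(service: str) -> Dict[str, Any]:
    """Task-shaped tool: do the filtering server-side and return only the count."""
    count = sum(1 for customer in CUSTOMERS if service in customer["services"])
    return {"service": service, "subscriber_count": count}


print(tool_count_subscribers("premium"))
```

Both functions read the same underlying data; the tool simply does the counting before anything reaches the model.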

📊 When to Use Each Technology

✅ Use an API when:

One application needs to communicate with another, and both sides know in advance exactly what information is required and in what format. Common use cases include websites, mobile applications, internal systems, payment platforms, and reporting tools.

✅ Use an MCP when:

The end-consumer of data is an AI model requiring access to undefined or variable information and actions. Examples include AI assistants that answer staff questions with unpredictable input variations, or systems tasked with reviewing internal documents.

🏢 In Enterprise Environments: Both technologies frequently coexist. A customer application displaying specific information (such as account balances) may utilize APIs, while an AI assistant within the same application may employ an MCP server due to the variable nature of queries it generates on behalf of users. Both may access identical underlying data through different interfaces optimized for the requesting system type.

🔐 Security Considerations and Gateway Architecture

A gateway is a component, usually implemented in software, that sits in front of both kinds of service. It handles functions such as authentication, rate limiting, logging, monitoring, and access control.

As MCP adoption grows, organizations must maintain visibility into which AI tools are requesting data from which systems, what data access permissions they possess, and what actions they can perform on that data. A properly configured gateway creates a centralized management point for these essential controls.
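The gateway functions listed above can be sketched as a single checkpoint that every call passes through. `ToyGateway` is a hypothetical in-process illustration, not a real product: the API key, client name, and tool are invented, and a production gateway would run as a separate service with persistent logs.

```python
import time
from typing import Any, Callable, Dict, List, Set


class GatewayError(Exception):
    """Raised when the gateway refuses a request."""


class ToyGateway:
    """Hypothetical gateway combining auth, access control, rate limiting, and logging."""

    def __init__(
        self,
        backends: Dict[str, Callable[..., Any]],
        api_keys: Dict[str, str],
        permissions: Dict[str, Set[str]],
        max_calls: int,
        window_seconds: float = 60.0,
    ) -> None:
        self.backends = backends
        self.api_keys = api_keys        # API key -> client name
        self.permissions = permissions  # client name -> tools it may call
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.recent_calls: Dict[str, List[float]] = {}
        self.audit_log: List[Dict[str, str]] = []

    def _record(self, client: str, tool: str, outcome: str) -> None:
        self.audit_log.append({"client": client, "tool": tool, "outcome": outcome})

    def call(self, api_key: str, tool: str, **arguments: Any) -> Any:
        # 1. Authentication: who is asking?
        client = self.api_keys.get(api_key)
        if client is None:
            self._record("unknown", tool, "denied: authentication")
            raise GatewayError("authentication failed")
        # 2. Access control: may this client use this tool?
        if tool not in self.permissions.get(client, set()):
            self._record(client, tool, "denied: permission")
            raise GatewayError(f"{client} may not call {tool}")
        # 3. Rate limiting: sliding window over recent call times.
        now = time.monotonic()
        recent = [t for t in self.recent_calls.get(client, []) if now - t < self.window_seconds]
        if len(recent) >= self.max_calls:
            self.recent_calls[client] = recent
            self._record(client, tool, "denied: rate limit")
            raise GatewayError("rate limit exceeded")
        self.recent_calls[client] = recent + [now]
        # 4. Forward the call and log the outcome.
        result = self.backends[tool](**arguments)
        self._record(client, tool, "allowed")
        return result


gateway = ToyGateway(
    backends={"get_account_status": lambda account_id: "active"},
    api_keys={"key-assistant": "hr-assistant"},
    permissions={"hr-assistant": {"get_account_status"}},
    max_calls=2,
)
print(gateway.call("key-assistant", "get_account_status", account_id="acct-42"))
for entry in gateway.audit_log:
    print(entry)
```

Because every request is recorded whether it is allowed or refused, the audit log answers the visibility questions raised above: which client asked for which tool, and what happened.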

⚠️ Important Security Note: Gateways sit on the network path between clients and services, arbitrating and recording data movement. However, they do not resolve problems that originate in the software itself, including issues with LLMs, deterministic code, or user activity. In cybersecurity terms, they function much like firewalls: useful in specific contexts, but potentially circumventable, a single point of failure, and liable to create a false sense of comprehensive security.

MCP and API gateways should be understood as perimeter defenses that will not reliably prevent all data-related incidents, particularly those caused by software vulnerabilities, whether in traditional deterministic code or within LLM operations.

(Image source: Pixabay under licence.)

AI News is powered by TechForge Media.
