GitHub Copilot Introduces Per-Token AI Pricing Model for Developers

Starting June 1st, 2026, GitHub Copilot will transition from a flat-rate subscription model to a token-based pricing system, marking a significant shift in how developers are charged for AI-powered coding assistance.

📋 The End of Flat-Rate Subscriptions

The current pricing model, which is being phased out, is simple. Subscribers receive a set number of 'Premium Requests' based on their subscription tier. Under this system, a complex coding task that runs for hours and a simple query each consume a single premium request, regardless of the computational resources actually used.

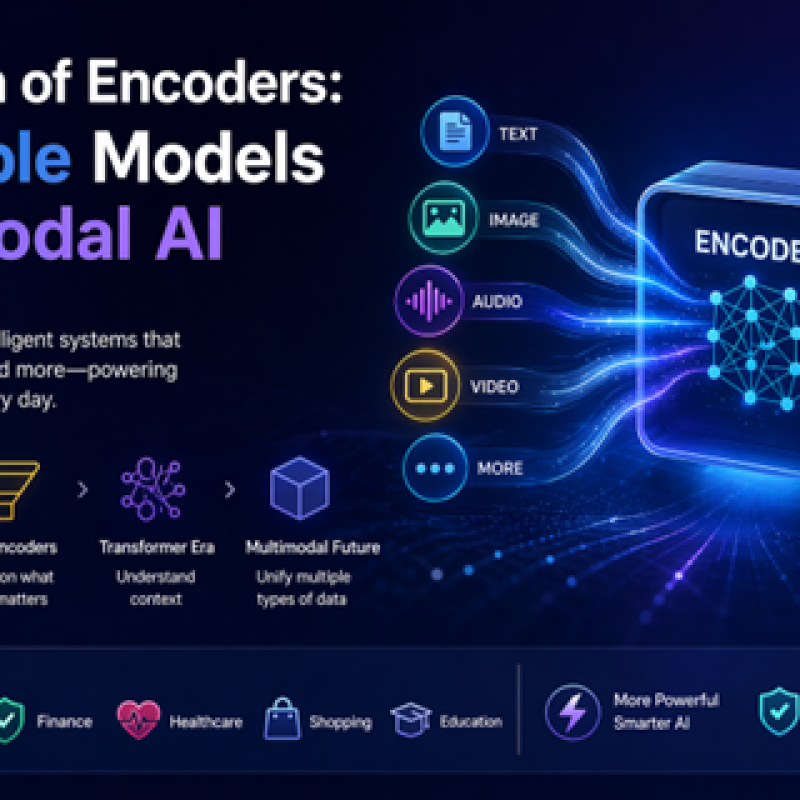

The upcoming change brings GitHub Copilot's pricing structure in line with API charges for large language models, a billing approach already common in enterprise AI services. Under the new system, most requests will be billed by the number of tokens processed, counting both inputs and outputs, so that charges track the computational resources consumed by the underlying LLM.

💡 Understanding Tokens and Their Cost

As a rule of thumb, a token represents roughly three-quarters of a word of natural-language text.

To illustrate: at that ratio, a 10,000-word document translates to approximately 13,000 tokens (10,000 ÷ 0.75 ≈ 13,300). For developers, this means that a codebase containing 10,000 "words" (expressions, statements, variable names, and functions) would consume approximately 13,000 tokens from their monthly allocation when analyzed by Copilot for tasks like refactoring or debugging.

Important: Both prompt inputs and Copilot's outputs count toward your token usage.
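For readers who want to sanity-check their own numbers, here is a minimal Python sketch of the three-quarters rule of thumb described above. The function names are illustrative, the whitespace word count is a crude stand-in for a real tokenizer, and actual token counts for source code can differ noticeably from this estimate.

```python
import math

# Rule of thumb from the article: one token is about 0.75 of a word.
# Real tokenizers vary by model and by content (code vs. prose).
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Return a rough token estimate for a given number of words."""
    if word_count < 0:
        raise ValueError("word_count must be non-negative")
    return math.ceil(word_count / words_per_token)


def estimate_tokens_for_text(text: str) -> int:
    """Estimate tokens for a string by counting whitespace-separated words."""
    return estimate_tokens(len(text.split()))


if __name__ == "__main__":
    print(estimate_tokens(10_000))
    print(estimate_tokens_for_text("def add(a, b): return a + b"))
```

Because both the prompt and the reply are billed, an estimate for a full exchange would add the two together.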

💰 New Pricing Structure: AI Credits System

While subscription prices remain unchanged, the allocation system is being completely overhauled. Instead of receiving a set number of queries, users will receive 'AI Credits' equivalent to their subscription value:

- Copilot Pro ($10/month): 1,000 AI Credits

- Current conversion rate: 1 AI Credit = $0.01 USD

The number of tokens each credit purchases varies based on several factors:

- ⚙️ Model selection (frontier models cost more than standard ones)

- 📊 Input/output ratio

- 💾 Cache size (contextual data stored in LLM memory)

- 🔧 Features requested

✅ Good news for developers: Those who mostly run simple queries may not need to purchase additional credits each month. However, multi-agent queries involving complex, extensive codebases will deplete AI Credits more rapidly.
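To see how model choice, input/output ratio, and caching could interact, the sketch below converts a single request into AI Credits. Only the $0.01-per-credit conversion comes from the article; the per-million-token prices, the `ModelRate` type, and the assumption that cached input is billed at its own lower rate are all hypothetical placeholders, not GitHub's published rates.

```python
from dataclasses import dataclass

USD_PER_CREDIT = 0.01  # conversion rate stated in the article


@dataclass(frozen=True)
class ModelRate:
    """Hypothetical USD prices per million tokens (not GitHub's actual rates)."""
    input_per_million: float
    output_per_million: float
    cached_input_per_million: float


def request_cost_credits(rate: ModelRate, input_tokens: int,
                         output_tokens: int, cached_tokens: int = 0) -> float:
    """Convert one request's token counts into AI Credits."""
    usd = (
        input_tokens * rate.input_per_million
        + output_tokens * rate.output_per_million
        + cached_tokens * rate.cached_input_per_million
    ) / 1_000_000
    return usd / USD_PER_CREDIT


# Made-up rates for a pricier "frontier" model and a cheaper "standard" one.
FRONTIER = ModelRate(input_per_million=3.0, output_per_million=15.0,
                     cached_input_per_million=0.3)
STANDARD = ModelRate(input_per_million=0.5, output_per_million=2.0,
                     cached_input_per_million=0.05)

if __name__ == "__main__":
    for name, rate in (("frontier", FRONTIER), ("standard", STANDARD)):
        credits = request_cost_credits(rate, input_tokens=20_000, output_tokens=2_000)
        print(f"{name}: {credits:.2f} credits")
```

Under these invented rates, the same 20,000-token prompt costs several times more on the frontier model, which is the effect the list above describes.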

🎁 Compensatory Benefits

GitHub's pricing restructure includes some user-friendly provisions:

- ✓ Code completions (similar to autocomplete on smartphones) remain free

- ✓ Next Edit suggestions continue at no additional cost

🌐 Industry-Wide Shift to Token-Based Billing

GitHub's pricing transformation reflects a broader industry trend. Anthropic and OpenAI have already transitioned their enterprise customers to token-based billing models. However, Microsoft—GitHub's parent company—has been in a unique position to subsidize Copilot usage through revenues from its profitable software and cloud divisions.

Until June 1st, users have effectively been able to consume between three and eight times the token value of their monthly subscription cost without penalty, a subsidy that will end with the new pricing model.
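The size of that subsidy is easy to work out for the $10 Copilot Pro tier. The sketch below is a back-of-the-envelope calculation, assuming the three-to-eight-times range applies to Pro and that the same usage would be billed at the stated rate of one credit per cent; the function names are illustrative. Amounts are kept in whole cents to avoid floating-point rounding.

```python
CENTS_PER_CREDIT = 1  # 1 AI Credit = $0.01, per the article


def subsidised_usage_cents(subscription_cents: int, multiple: int) -> int:
    """Token value consumed when usage is `multiple` times the subscription price."""
    return subscription_cents * multiple


def extra_credits_needed(subscription_cents: int, multiple: int) -> int:
    """Credits beyond the included allocation needed to sustain the same usage."""
    usage = subsidised_usage_cents(subscription_cents, multiple)
    return max(usage - subscription_cents, 0) // CENTS_PER_CREDIT


PRO_CENTS = 1_000  # Copilot Pro at $10/month

if __name__ == "__main__":
    for multiple in (3, 8):
        usage = subsidised_usage_cents(PRO_CENTS, multiple)
        extra = extra_credits_needed(PRO_CENTS, multiple)
        print(f"{multiple}x: ${usage / 100:.2f} of usage, {extra} extra credits")
```

In other words, a Pro subscriber at the low end of that range would be using about $30 of tokens a month, and one at the high end about $80, against an allocation worth $10.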

⚠️ Implications for Users and Businesses

This change affects Microsoft's strategy to attract new Copilot users. The new model requires both new and existing users to actively monitor their token consumption per query—a metric that was previously abstracted by flat monthly subscriptions. While this approach may make economic sense for Microsoft, it could discourage the experimentation and testing that new users typically need during onboarding.
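What that monitoring might look like in practice is sketched below: a small tracker that logs the credit cost of each query and flags when spending nears the monthly allocation. The `CreditBudget` class and its 80% warning threshold are hypothetical and do not correspond to any GitHub tool or API; per-query costs would have to come from whatever usage data GitHub exposes.

```python
class CreditBudget:
    """Tracks AI Credit spend against a monthly allocation (hypothetical helper)."""

    def __init__(self, monthly_credits: float, warn_fraction: float = 0.8):
        if monthly_credits <= 0:
            raise ValueError("monthly_credits must be positive")
        self.monthly_credits = monthly_credits
        self.warn_fraction = warn_fraction
        self.spent = 0.0
        self.log: list[tuple[str, float]] = []  # (query label, credits used)

    def record(self, label: str, credits: float) -> bool:
        """Record one query's cost; return True once spend reaches the warning level."""
        if credits < 0:
            raise ValueError("credits must be non-negative")
        self.spent += credits
        self.log.append((label, credits))
        return self.spent >= self.warn_fraction * self.monthly_credits

    @property
    def remaining(self) -> float:
        """Credits left in the allocation, never below zero."""
        return max(self.monthly_credits - self.spent, 0.0)


if __name__ == "__main__":
    budget = CreditBudget(monthly_credits=1_000)  # Copilot Pro allocation
    budget.record("quick question", 2)
    if budget.record("multi-agent refactor", 900):
        print(f"Warning: only {budget.remaining:.0f} credits left this month")
```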

Real-world example: Uber's CTO revealed that the company had already exhausted its entire 2026 AI budget well before the end of the year, noting that 11% of Uber's code updates are now AI-generated. Uber primarily uses Anthropic's Claude coding agents.

For organizations deploying AI coding agents across development teams, the cost implications are substantial. Beyond IT departments, companies implementing AI automation should recognize that complex tasks—potentially involving autonomous agentic LLMs running for extended periods—may soon face similar per-token billing.

Bottom line: The efficiency gains delivered by AI in the workforce must be carefully weighed against potential increases in AI vendor costs.

Image source: Pixabay under licence.


AI News is powered by TechForge Media.
