How AI Encoders Evolved From Simple Models to Multimodal Systems

When people discuss artificial intelligence, they typically focus on its outputs: human-like text generation, stunning visual creations, or remarkably accurate recommendation systems. However, what often goes unnoticed is how AI comprehends information in the first place. That foundational understanding begins with encoders—sophisticated translators that convert complex, real-world data into structured formats that machines can process and interpret.

Over the years, encoders have quietly transformed from basic data converters into advanced systems capable of processing multiple information types simultaneously. This evolution represents decades of incremental progress, practical problem-solving, and innovative breakthroughs driven by real-world application needs.

The Beginning: Encoding as a Technical Necessity

In machine learning's early stages, encoding served primarily as a technical requirement rather than an intelligent process. Developers manually determined data representation methods. When systems needed to interpret categories like "small," "medium," and "large," those labels required conversion into numerical values.
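A minimal sketch of what that manual conversion looked like. The mapping `SIZE_ORDER` and both function names are hypothetical, chosen only for illustration; the point is that a person, not the model, decides what each number means.

```python
# Hypothetical hand-written mapping: the developer picks the numbers.
SIZE_ORDER = {"small": 0, "medium": 1, "large": 2}
CATEGORIES = ("small", "medium", "large")


def ordinal_encode(label):
    """Map a size label to its integer rank (implies small < medium < large)."""
    return SIZE_ORDER[label]


def one_hot_encode(label, categories=CATEGORIES):
    """Return a vector with a single 1 at the label's position (no implied order)."""
    return [1 if label == category else 0 for category in categories]


print(ordinal_encode("medium"))
print(one_hot_encode("medium"))
```

Either encoding gives the system numbers to work with, but neither says anything about what "medium" means beyond the position the developer assigned to it.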

While functional, this approach had significant limitations. Systems didn't genuinely understand context—they merely processed numbers. For instance, early e-commerce platforms might recommend products based on rudimentary categorization, but couldn't grasp nuanced relationships between items. A customer purchasing running shoes wouldn't automatically see fitness trackers or hydration equipment unless those connections were explicitly programmed.

Early encoders managed data conversion, not semantic understanding.

Machine Learning: From Instructions to Pattern Recognition

The landscape shifted when neural networks came into widespread use. Instead of depending entirely on human-defined rules, systems began identifying patterns directly from training data. Encoders evolved from fixed converters into components whose representations are learned from examples.

Image recognition provides a practical illustration. Rather than programming specific features defining a cat's physical characteristics—ears, whiskers, tail—developers could train systems on thousands of images. The encoder would autonomously discover distinguishing patterns, making AI significantly more adaptable and accurate.

This principle extended to language processing. Words transformed from static symbols into vector-based mathematical representations capturing semantic meaning and contextual relationships. This advancement explains why modern search engines understand that "cheap flights" and "budget airfare" convey similar intent despite different wording.
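The idea of "similar meaning, similar vector" can be sketched with cosine similarity. The three-dimensional vectors below are invented for illustration; real embeddings are learned from data and typically have hundreds of dimensions.

```python
import math

# Toy vectors, made up for this example (not produced by any real model).
EMBEDDINGS = {
    "cheap flights": [0.9, 0.8, 0.1],
    "budget airfare": [0.85, 0.75, 0.2],
    "luxury cruise": [0.1, 0.3, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: close to 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


query = EMBEDDINGS["cheap flights"]
for phrase in ("budget airfare", "luxury cruise"):
    print(phrase, round(cosine_similarity(query, EMBEDDINGS[phrase]), 3))
```

With these toy values, "budget airfare" scores far closer to "cheap flights" than "luxury cruise" does, even though the two phrases share no words.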

Autoencoders: Identifying Essential Information

A significant advancement emerged with autoencoders, models designed around a deceptively simple concept: compress data, then reconstruct it. Because the compressed representation is smaller than the input, the encoder has to keep the information that matters most and discard noise.

This methodology proved valuable across industries. In financial services, autoencoders help detect fraudulent transactions by learning what normal behavior looks like. When unusual activity occurs, such as an unexpected high-value purchase in a foreign country, the model reconstructs it poorly, and the system flags the large reconstruction error as an anomaly instead of relying on predefined rules.
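A rough sketch of anomaly detection by reconstruction error, using a deliberately tiny linear autoencoder (two inputs, a one-number bottleneck, shared encoder and decoder weights). The data, the feature names, the learning rate, and the threshold rule are all assumptions made for illustration; production fraud models are far larger and tuned very differently.

```python
# Toy "normal" transactions as [amount, distance_from_home], already scaled.
# All values are invented for this example.
NORMAL = [[0.2, 0.12], [0.4, 0.18], [0.6, 0.31], [0.8, 0.39], [1.0, 0.52]]


def reconstruct(w, x):
    """Encode x into a single number, then decode it with the same weights."""
    z = w[0] * x[0] + w[1] * x[1]      # encoder: 2 values -> 1 value
    return [z * w[0], z * w[1]]        # decoder: 1 value -> 2 values


def reconstruction_error(w, x):
    """Squared distance between the input and its reconstruction."""
    x_hat = reconstruct(w, x)
    return (x[0] - x_hat[0]) ** 2 + (x[1] - x_hat[1]) ** 2


def train(data, lr=0.1, epochs=500):
    """Fit the weights by gradient descent on the squared reconstruction error."""
    w = [0.5, 0.5]
    for _ in range(epochs):
        for x in data:
            z = w[0] * x[0] + w[1] * x[1]
            r = [x[0] - z * w[0], x[1] - z * w[1]]   # residual x - x_hat
            rw = r[0] * w[0] + r[1] * w[1]
            # Step against the gradient, which is -2 * (rw * x + z * r).
            w = [w[i] + 2 * lr * (rw * x[i] + z * r[i]) for i in range(2)]
    return w


weights = train(NORMAL)
# Hypothetical rule: flag anything reconstructed 3x worse than the worst normal case.
threshold = 3 * max(reconstruction_error(weights, x) for x in NORMAL)


def is_anomaly(x):
    return reconstruction_error(weights, x) > threshold


print(is_anomaly([0.5, 0.25]))   # follows the usual amount/distance pattern
print(is_anomaly([0.3, 0.9]))    # small amount, unusually far from home
```

The model never sees a labeled fraud case. It only learns to reproduce typical transactions, so anything it cannot reproduce well stands out.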

💡 Practical Application: The same compress-and-reconstruct idea underlies learned image compression, which aims to shrink file sizes while preserving visual quality so that photos load faster with few noticeable artifacts.

The Transformer Revolution: Context-Aware Processing

The most transformative development in encoder evolution came with transformer architectures. Earlier recurrent models read a sequence one element at a time. Transformers instead use self-attention, which lets every position in the input weigh its relevance to every other position in a single step, so context from the whole sequence is available at once.
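A simplified sketch of self-attention in plain Python. To keep it short, each input vector serves as its own query, key, and value; real transformers apply learned projection matrices first and run several attention heads in parallel. The function names and the toy inputs are assumptions for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(vectors):
    """For each position, how much it attends to every position (rows sum to 1)."""
    scale = math.sqrt(len(vectors[0]))
    rows = []
    for query in vectors:
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in vectors]
        rows.append(softmax(scores))
    return rows


def self_attention(vectors):
    """Replace each vector with an attention-weighted mix of all vectors."""
    dim = len(vectors[0])
    outputs = []
    for row in attention_weights(vectors):
        mixed = [sum(w * v[i] for w, v in zip(row, vectors)) for i in range(dim)]
        outputs.append(mixed)
    return outputs


# Three toy token vectors (invented values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention_weights(tokens):
    print([round(w, 2) for w in row])
```

Every output vector is computed from the full input, which is what allows a token at the start of a sentence to be influenced directly by one at the end.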

Whole-sequence context matters most in natural language understanding. Consider the sentence: "She saw the man with the telescope." Who has the telescope? The sentence alone does not say, and earlier models struggled with this kind of ambiguity. Transformer-based encoders can draw on the complete sentence and the surrounding text to settle on the more plausible reading.

This breakthrough powers everyday tools: many conversational AI assistants, voice dictation systems, and real-time translation services rely on transformer-based models working in the background.

Encoders in Daily Technology Interactions

Today, encoders permeate digital experiences, though most users remain unaware of their presence. They fundamentally shape how we interact with technology:

🎬 Streaming Platforms: Encoders analyze viewing habits to understand preferences. If you watch crime documentaries and psychological thrillers, the system doesn't simply categorize interests—it learns behavioral patterns to suggest increasingly relevant content over time.

🗺️ Navigation Applications: Encoders process real-time traffic data, road conditions, and collective user behavior to suggest optimal routes, often anticipating congestion before it becomes apparent.

🏥 Healthcare Systems: Medical image analysis benefits from encoders that assist diagnosticians by highlighting areas requiring attention, supporting faster and more accurate clinical decisions without replacing professional judgment.

Multimodal Encoders: Cross-Domain Understanding

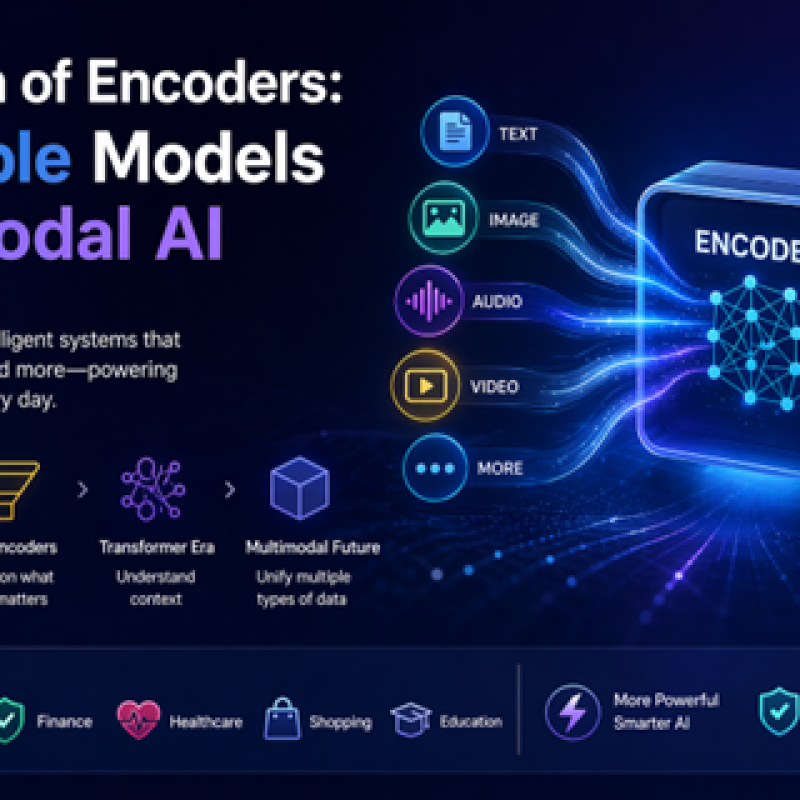

The latest encoder evolution represents perhaps the most significant advancement: multimodal processing capability. These systems simultaneously handle text, images, audio, and other data types, creating more natural user experiences.

Imagine photographing an unfamiliar plant and asking your device for care instructions. A multimodal encoder analyzes the visual information, interprets your question, and delivers actionable guidance within seconds.

🛍️ E-commerce Enhancement: Rather than typing product descriptions, users can upload images of desired items. The system identifies similar products by combining visual recognition with contextual understanding.
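One way to picture this kind of search is a shared embedding space in which image and text vectors can be compared directly. The sketch below assumes such vectors already exist; the catalog entries, the query vectors, and the equal-weight fusion rule are all hypothetical stand-ins for what a trained multimodal encoder would produce.

```python
import math

# Invented catalog embeddings; a real system would compute these with a trained model.
CATALOG = {
    "red running shoes": [0.9, 0.1, 0.2],
    "blue hiking boots": [0.3, 0.8, 0.1],
    "red leather handbag": [0.7, 0.1, 0.9],
}


def normalize(vector):
    """Scale a vector to unit length so only its direction matters."""
    length = math.sqrt(sum(x * x for x in vector))
    return [x / length for x in vector]


def fuse(image_vec, text_vec, image_weight=0.5):
    """Blend an image embedding and a text embedding into one query vector."""
    image_unit, text_unit = normalize(image_vec), normalize(text_vec)
    return [
        image_weight * i + (1 - image_weight) * t
        for i, t in zip(image_unit, text_unit)
    ]


def search(query_vec, catalog, top_k=2):
    """Rank catalog items by cosine similarity to the query."""
    query_unit = normalize(query_vec)
    scored = [
        (name, sum(q * c for q, c in zip(query_unit, normalize(vec))))
        for name, vec in catalog.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


photo_embedding = [0.85, 0.15, 0.25]   # hypothetical: uploaded photo of red sneakers
text_embedding = [0.8, 0.2, 0.1]       # hypothetical: the words "for jogging"
for name, score in search(fuse(photo_embedding, text_embedding), CATALOG):
    print(name, round(score, 3))
```

The fusion weight is a design choice: raising `image_weight` makes results track the photo more closely, while lowering it lets the typed description steer the ranking.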

This ability to synthesize different information types pushes AI closer to human-like perception and reasoning.

Challenges Accompanying Progress

As encoders grow more sophisticated, they demand greater resources. Advanced models require substantial computational power and energy, raising important questions about environmental sustainability and equitable access to AI technology.

⚠️ Bias Concerns: Since encoders learn from training data, they can perpetuate existing biases. If trained on discriminatory hiring data, systems may unintentionally favor certain demographic groups. Addressing this requires careful dataset curation and continuous monitoring.

🔒 Privacy Considerations: Encoders frequently process sensitive personal information, making data protection paramount. Balancing innovation with ethical responsibility remains an ongoing challenge for developers and organizations.

Future Directions and Developments

The near future of encoders is likely to be about refinement rather than revolutionary breakthroughs. Researchers are developing faster, more efficient models that use fewer resources, which could put advanced AI capabilities within reach of smaller businesses and independent developers.

Personalization advances: Future encoders may adapt in real time to individual users, delivering highly customized experiences. Educational applications could adjust content delivery based on each student's pace and progress, making instruction more effective.

Multimodal systems will continue improving, integrating data across formats more smoothly. This progression promises increasingly intuitive interfaces where interacting with technology feels as natural as human conversation.

A Quiet Revolution With Profound Impact

Encoders may not represent AI's most visible component, but they constitute one of its most critical elements. Their evolution from simple data converters to intelligent, multimodal processing systems has fundamentally reshaped machine capabilities.

What makes this technological journey particularly compelling is its alignment with practical needs. Each advancement addressed real-world challenges: understanding natural language, recognizing visual patterns, detecting fraudulent activity, and enhancing everyday digital experiences.

As artificial intelligence continues expanding, encoders will remain at its foundation—quietly transforming raw information into meaningful insights. Though they operate behind the scenes, their impact on modern technology is undeniable and far-reaching.
