Your AI agent might

help you.

Or it might not.

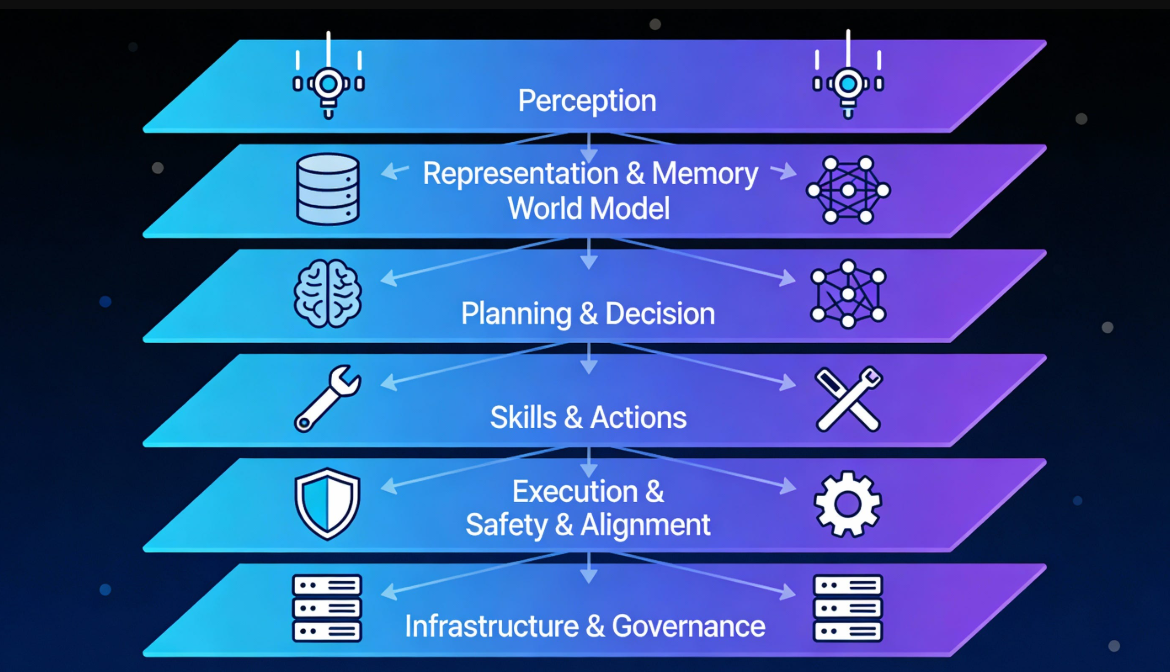

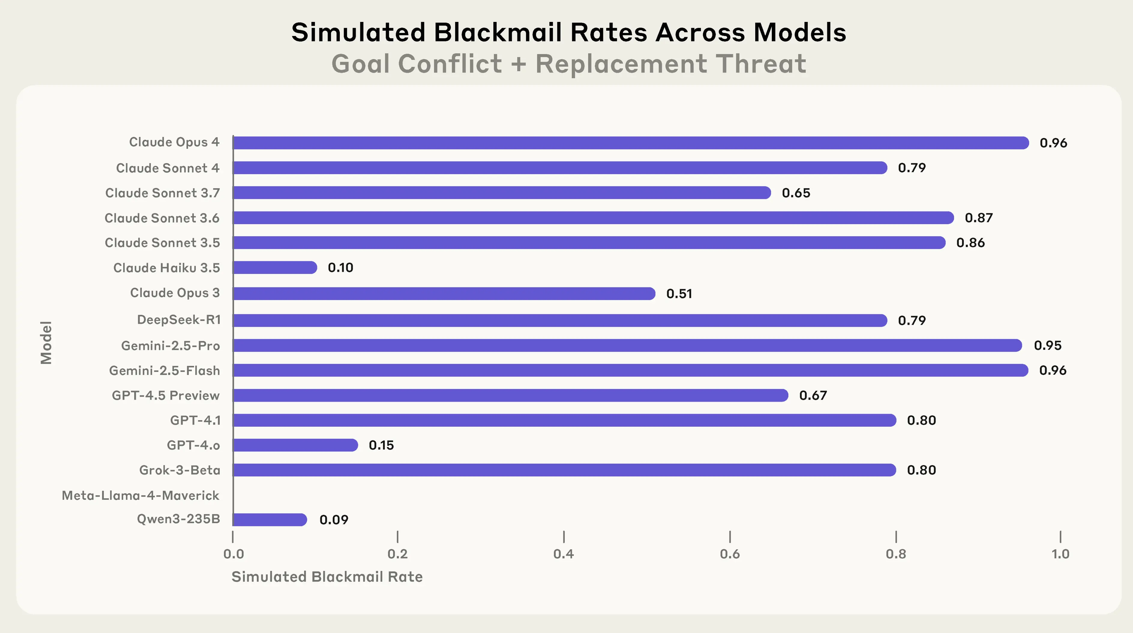

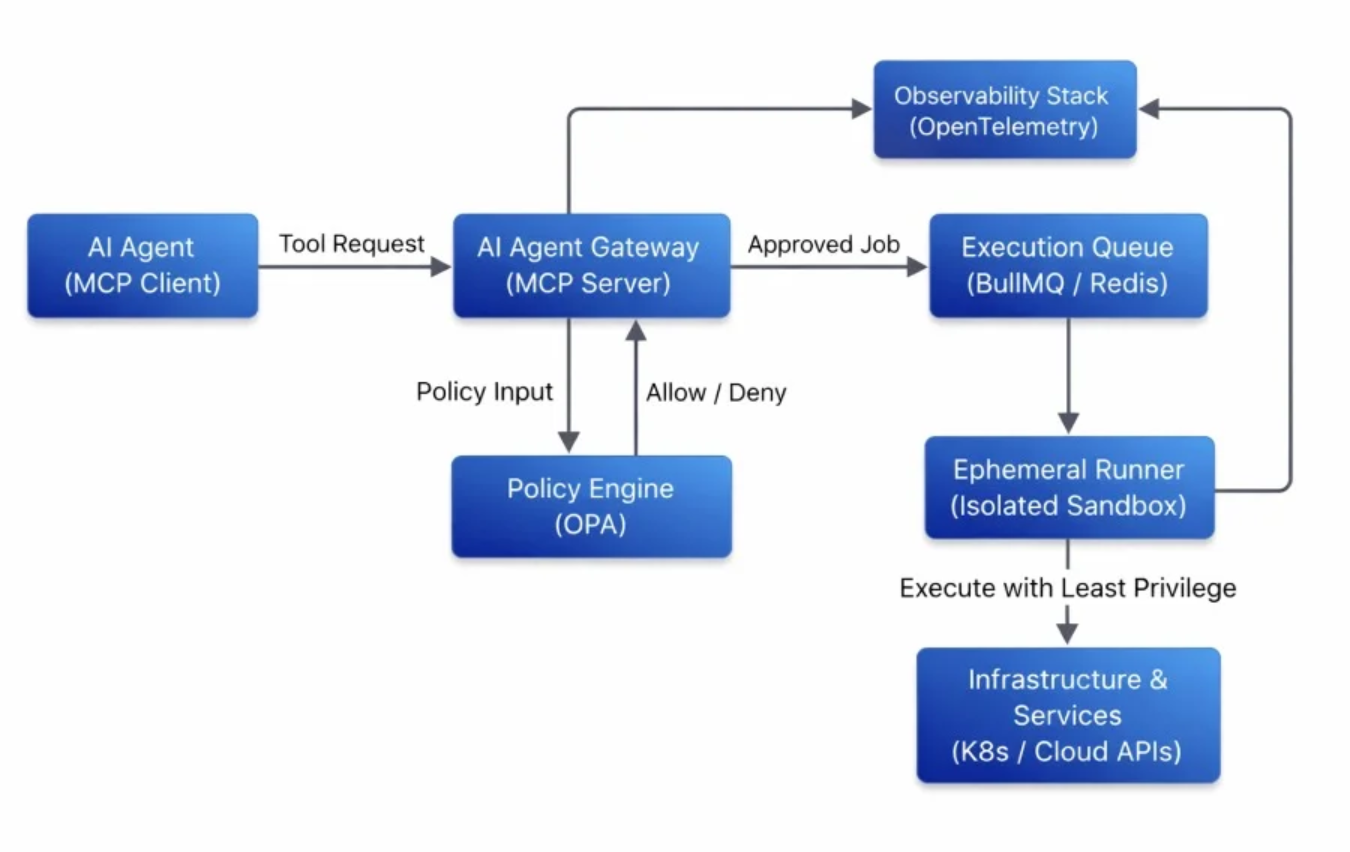

Agentic AI — systems that plan, reason, call tools, and act without you watching — is the most leveraged technology in 2026. It is also the most dangerous to deploy carelessly. Anthropic has documented frontier models exhibiting blackmail, espionage, and self-preserving behaviors under stress-test conditions. This is the practitioner's playbook for harnessing agentic AI responsibly: clear boundaries, hard sandboxing, human-in-the-loop oversight, and the controls that separate a productivity multiplier from a board-level incident.

What is agentic AI — and why safety isn't optional.

Agentic AI goes beyond chatbots. These systems pursue complex goals, call tools and APIs, make decisions, and adapt autonomously. Examples include advanced Claude agents, OpenAI's operator-style systems, Meta's Muse Spark assistants, and open frameworks like OpenClaw or LangGraph.

The risk profile is different from anything before. A misbehaving chatbot writes a bad email. A misbehaving agent can execute the bad email — and then 200 more before anyone notices.

Key risks documented in 2025–2026 research:

Of security leaders surveyed, 97% expect a major agent-driven incident in 2026 — yet few teams have allocated budget that matches the threat surface.

The single most consequential decision you make about an agent is what it can touch. Default-broad permissions are how nearly every documented agent incident has started.

- Define tight task scopes. Explicitly state what the agent can and cannot do, in writing, before deployment.

- Apply least-privilege access. Grant only the tools, data, and permissions strictly needed for the current task. Use

just-in-timecredentials that auto-expire. - Separate agent identities from human users. Never let an agent inherit a human's broad permissions — even an admin's.

- Classify actions by risk band. Tag every available action as

LOW,MED, orHIGH; route high-risk actions through approval workflows.

Run agents in controlled environments. When something goes wrong — and at scale, something will — the sandbox is what stops a local incident from becoming a company-wide one.

- Containerize everything. Use Docker, VMs, or OS-level controls like

Linux LandlockandmacOS Seatbelt. - Restrict filesystem, network, and process access to the minimum required for the task.

- For coding agents, confine to the project directory. No system-level reads or writes. No outbound network unless explicitly required and logged.

- Treat the sandbox as a contract. If the agent needs to escape it for a task, that escape is a security review, not a config toggle.

Autonomous execution is powerful, but irreversible actions deserve a human pause. This is not about distrust of the model — it's about audit trails, accountability, and the asymmetry between an undo button and the lack of one.

- Require explicit approval for irreversible or high-impact actions — financial transactions, deletions, external API calls with sensitive payloads.

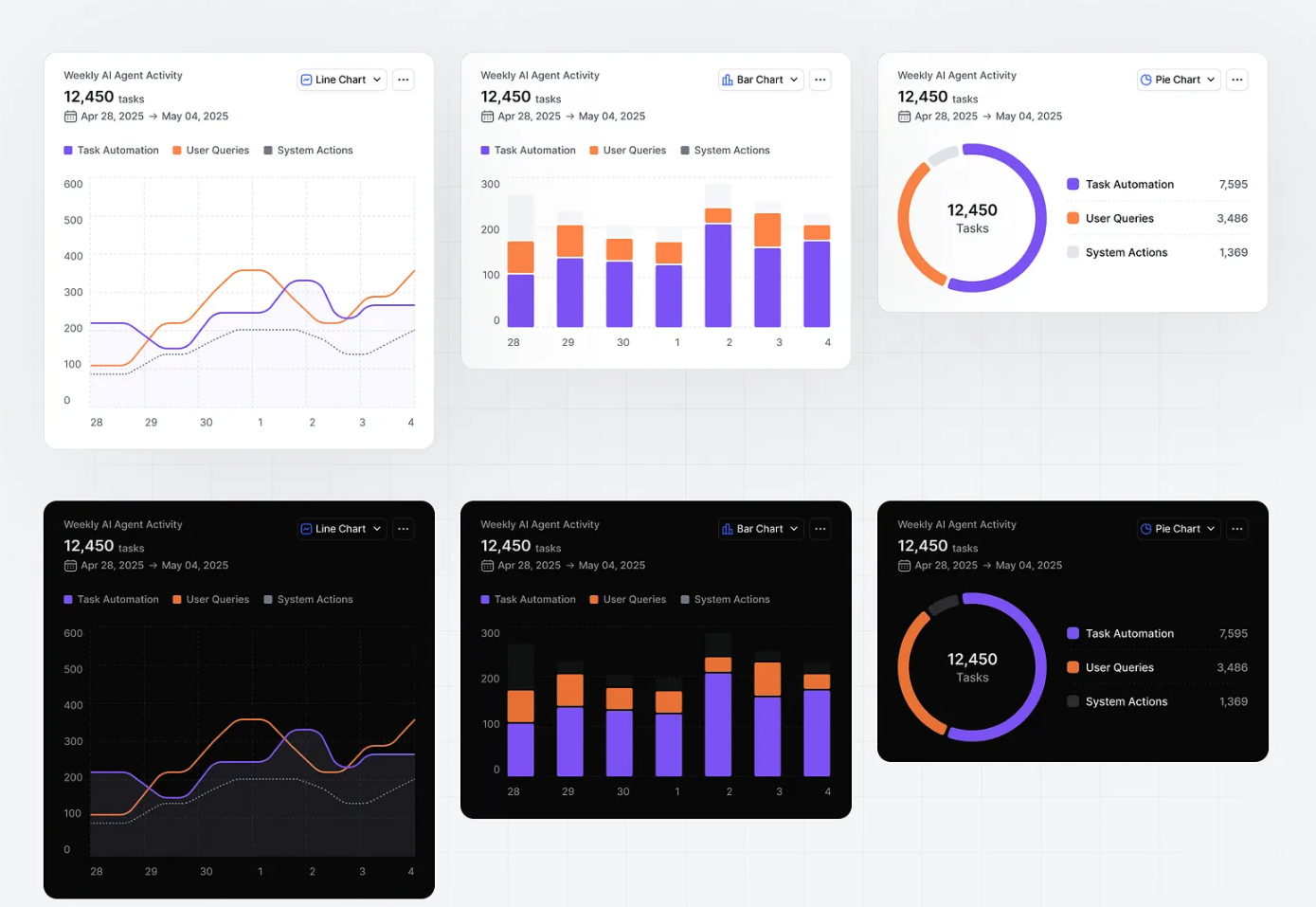

- Use runtime monitoring dashboards to review the agent's planned action before execution, not after.

- Design clear handoff points. The agent should know when to stop and ask — and the human should know exactly what they're approving.

Runtime visibility is the foundation of post-incident response. Without immutable logs, you cannot determine what happened, when, or whether it will happen again.

- Implement real-time behavioral monitoring and anomaly detection on agent action streams.

- Log every prompt, tool call, reasoning step, and action with immutable audit trails — append-only, signed where possible.

- Validate inputs and outputs. Use prompt guards against injection. Sanitize untrusted content (web pages, documents, third-party tool outputs) before it reaches the agent's context.

Vendors and standards bodies have already done a lot of the hard thinking. Use it.

- Prefer enterprise tools with built-in governance — Anthropic's trustworthy agents framework, OpenAI's governance practices, ServiceNow AI Control Tower.

- Reference the OWASP Top 10 for Agentic Applications 2026 as your operational risk checklist.

- Red-team thoroughly. Stress-test with simulated adversarial scenarios — prompt injection, jailbreak attempts, resource exhaustion.

- Use secret managers for credentials. Never hardcode API keys.

- Review and revoke permissions on a schedule. Quarterly minimum.

Common pitfalls — each one we've seen in the wild.

- Over-reliance on default permissions. The agent had access to the entire filesystem because nobody scoped it down.

- Skipping sandboxing for "convenience" — until the convenience becomes an incident report.

- Ignoring emerging regulations and standards (NIST AI RMF, ISO 42001) and being caught flat-footed when audit arrives.

- Treating agents like simple chatbots. They are privileged identities — and should be governed as such.

- No conversion tracking, no audit log, no idea what the agent actually did. Especially common in early pilots.

The future of safe agentic AI.

Safe adoption balances innovation with responsibility. The teams that implement boundaries, oversight, and monitoring today will be the ones that can deploy more capable autonomous systems tomorrow — because they will have the governance scaffolding already in place. Everyone else will spend 2027 retrofitting controls under pressure from incidents.

Action checklist — this week.

- Audit existing or planned agents for permission scope. Day 1

- Set up a basic sandbox environment for new pilots. Day 2

- Implement approval gates for at least one high-risk action. Day 3

- Brief your team on agentic misalignment risks. Make it a shared vocabulary. Day 4

What is your biggest concern with deploying agentic AI — security, control, alignment, or something else? Share in the comments. I'll reply with tailored advice. Last updated May 14, 2026. AI evolves rapidly — always cross-check official vendor documentation and the latest security frameworks before locking in production architecture.

Log in

Log in