Nvidia CEO Jensen Huang’s Explosive Dwarkesh Podcast: Supply Chain Moat, TPU Competition, China Chip Sales & Why US-China AI Dialogue Is Now Non-Negotiable (April 2026)

Within 24 hours of its release on April 15, 2026, Nvidia CEO Jensen Huang’s 1-hour-43-minute conversation with Dwarkesh Patel racked up hundreds of thousands of views across YouTube, X, and Spotify. The episode, titled “Jensen Huang – TPU competition, why we should sell chips to China, & Nvidia’s supply chain moat,” is already being called one of the most candid and strategically revealing interviews Huang has ever given.

As the operator of www.ai.cc with over a decade of experience curating AI tools, tracking infrastructure trends, and advising development teams on scaling compute, I’ve watched every major Nvidia earnings call and GTC keynote. This podcast stands out because Huang doesn’t just defend Nvidia’s dominance—he reframes the entire AI hardware war around supply chain scarcity, total cost of ownership (TCO), and geopolitical reality. And in a week already dominated by Anthropic’s Mythos Preview, his comments on US-China AI cooperation landed like a thunderclap.

Nvidia’s Real Moat Isn’t CUDA—It’s the Bottlenecked Global Supply Chain

Huang’s central thesis is blunt: the scarcest resource in AI isn’t talent or even algorithms—it’s advanced semiconductor manufacturing capacity. Nvidia has locked up the world’s most constrained supply chains for high-bandwidth memory (HBM), advanced packaging, and leading-edge wafers. “If our next several years are a trillion dollars in scale, we have the supply chain to do it,” he stated, pointing to Nvidia’s multi-year, multi-billion-dollar commitments with TSMC, Samsung, and ASML.

This isn’t marketing spin. In an era where every hyperscaler is racing to build million-GPU clusters, Huang argues that owning the physical “switchyard” for AI compute gives Nvidia unmatched velocity and resilience. Competitors can design ASICs, but they still need the same rare manufacturing slots—and Nvidia has them booked years ahead.

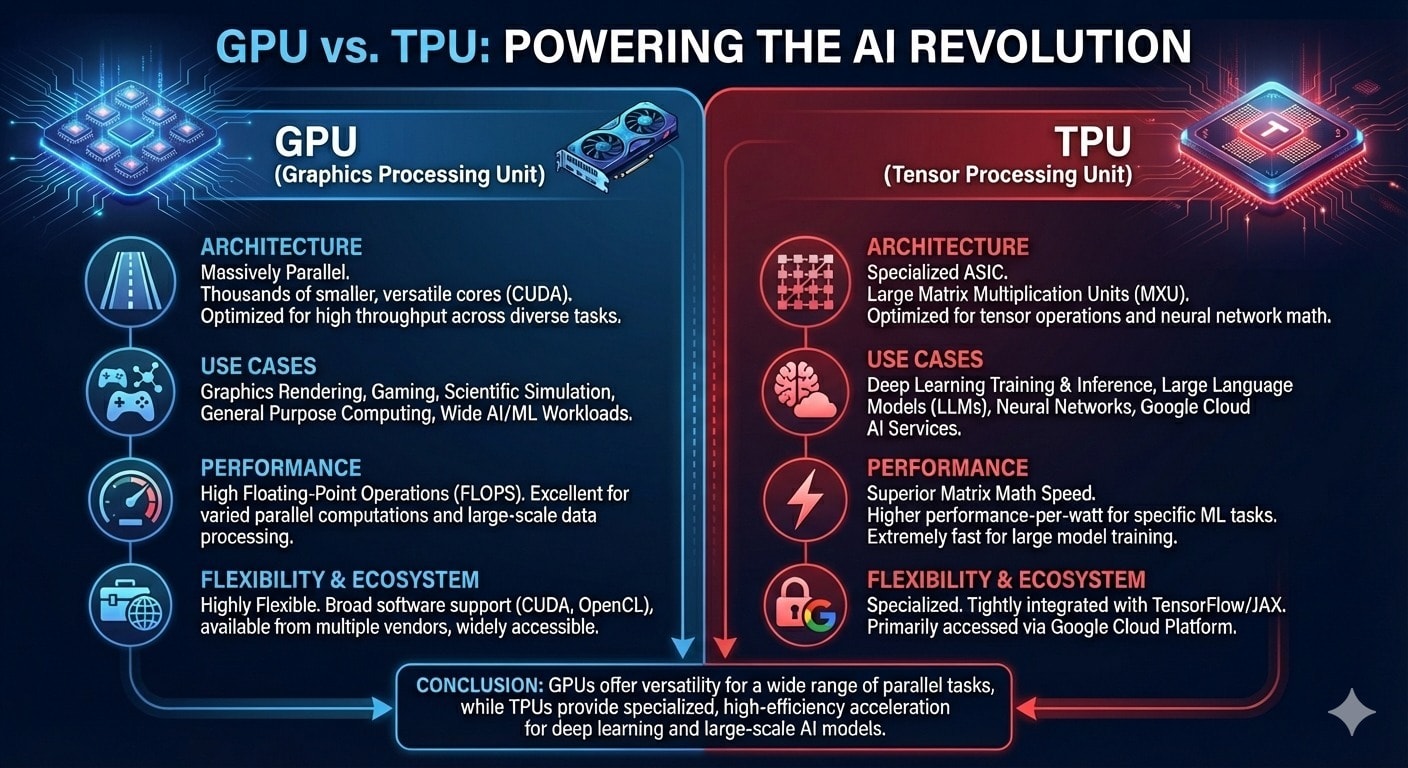

TPU Competition: Jensen Dares Google and Amazon to Benchmark

One of the most anticipated segments (starting at ~16:25) tackles the elephant in the room: Google TPUs and Amazon Trainium. Claude and Gemini have been trained on TPUs, and hyperscalers love touting lower inference costs. Huang’s response? Bring it on.

He repeatedly challenged Google and Amazon to submit results to public benchmarks like InferenceMax and MLPerf. “Nobody can show me a platform with better performance per TCO. Not one company.” Huang emphasized that while TPUs excel at narrow, highly optimized workloads inside Google’s own stack, Nvidia’s full software ecosystem (CUDA, cuDNN, Triton) delivers superior end-to-end value across training, inference, and agentic workflows.
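To make “performance per TCO” concrete, here is a minimal Python sketch of how a team might compare two accelerators on lifetime tokens served per dollar. It is my own illustration, not Nvidia’s methodology or anything quoted in the interview. The `Platform` fields, the default electricity price and utilization, and the simplification that TCO is just purchase price plus energy are all assumptions; a real model would add networking, facilities, staffing, and software costs.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class Platform:
    """Hypothetical per-accelerator inputs; every value is a placeholder."""
    name: str
    tokens_per_second: float     # sustained inference throughput
    capex_usd: float             # purchase price
    power_kw: float              # average power draw, including cooling overhead
    lifetime_years: float = 4.0  # assumed depreciation window
    usd_per_kwh: float = 0.08    # assumed electricity price
    utilization: float = 0.6     # fraction of time spent serving useful work


def tco_usd(p: Platform) -> float:
    """Simplified TCO: purchase price plus energy over the lifetime."""
    hours = p.lifetime_years * HOURS_PER_YEAR
    return p.capex_usd + p.power_kw * hours * p.usd_per_kwh


def tokens_per_dollar(p: Platform) -> float:
    """Performance per TCO: lifetime tokens served divided by lifetime cost."""
    busy_seconds = p.lifetime_years * HOURS_PER_YEAR * 3600 * p.utilization
    return p.tokens_per_second * busy_seconds / tco_usd(p)


def rank(platforms: list[Platform]) -> list[Platform]:
    """Order platforms from best to worst tokens per dollar."""
    return sorted(platforms, key=tokens_per_dollar, reverse=True)
```

Feed it your own measured throughput and quoted prices, for example `rank([Platform("gpu", ...), Platform("asic", ...)])`. The point of the exercise is that a chip with lower raw throughput can still come out ahead once price, power, and utilization are factored in, which is exactly why public benchmark submissions matter to this argument.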

The China Question: Why Huang Says Selling Chips Is Actually Good for US Security

Perhaps the most politically charged portion begins at ~57:36. Huang makes an unapologetic case for relaxing export controls on AI chips to China. He argues that walling off 40% of the global tech market weakens American innovation and security rather than strengthening them. Marginal sales to China, he contends, keep US companies at the frontier while China remains several nodes behind (still largely stuck at 7nm while Nvidia ships 3nm/2nm).

This stance has drawn sharp pushback—especially from Anthropic circles—but Huang frames it as pragmatic realism rather than idealism.

Mythos Moment: Why US-China AI Research Dialogue Is “No Longer Optional”

Huang explicitly tied his comments to Anthropic’s just-released Claude Mythos Preview—the ultra-capable cybersecurity model that Anthropic chose not to open-source due to its offensive potential. He called Mythos a “turning point” that makes formal US-China researcher dialogue essential. “We want the United States to win, but I think having a dialogue and having a research dialogue is probably the safest thing to do.”

In a week where banks, regulators, and even Nvidia’s own customers are scrambling to assess Mythos-level cyber risks, Huang’s message resonates: frontier AI is moving faster than export controls or treaties. Shared understanding between the two largest AI powers may be the only realistic guardrail.

The Parallel Vision: Meta’s “Neural Computers” and the Post-OS Future

While Nvidia doubles down on accelerated hardware infrastructure, Meta’s April 2026 “Neural Computers” paper (with KAUST) proposes the opposite extreme: fold computation, memory, and I/O entirely into the neural network itself. Prototypes built on video diffusion models simulate full terminal sessions and desktop GUIs without any traditional operating system underneath.

This “pure neural” paradigm—where the model is the computer—represents the long-term counterpoint to Nvidia’s hardware-heavy stack. Huang didn’t address it directly, but the contrast is striking: one side is racing to build bigger, more efficient silicon; the other is trying to make silicon disappear inside learned latent states.

What This Means for Developers, Teams, and the AI Ecosystem

For AI practitioners and engineering leaders:

- Infrastructure bets now hinge more on TCO and ecosystem maturity than raw FLOPS.

- Geopolitical risk is now table stakes in every long-term compute roadmap.

- Hybrid futures are likely—Nvidia GPUs for flexibility, custom silicon for hyperscale efficiency, and neural-native runtimes for entirely new interaction models.

The podcast has already sparked thousands of X threads, Reddit deep-dives, and investor notes. It’s not hype; it’s a masterclass in how the company at the center of the AI hardware layer thinks about the next 3–5 years.

As someone who’s spent the last decade helping teams navigate exactly these infrastructure shifts at www.ai.cc, I can say this interview crystallizes why staying informed at the hardware + geopolitics + model intersection has never been more valuable. Whether you’re training frontier models, building agents, or just trying to keep inference costs under control, understanding Nvidia’s moat, the TPU challenge, and the emerging neural-computer vision will shape your roadmap.

If you want the latest breakdowns, hands-on tool roundups, infrastructure benchmarks, and practical guides that actually move the needle for your team, dive deeper at www.ai.cc, your daily hub for real-world AI productivity. We’ll keep tracking every twist in this story as it unfolds.

In the end, the AI hardware race is about who controls the future of scarcity itself.
